Leaner Models, Better Performance: Feature Selection Techniques for Linear Regression

deephub 2024-08-17 17:01:02

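In this article we look at several feature selection methods for reducing the number of features while keeping the model's score acceptable. With less noise and redundant information, a model is faster to train and run and less complex.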

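We use a model with all features as the baseline. We then apply several feature selection techniques to decide which features to keep and which to drop without significantly sacrificing the score (R²). The methods are:

- Correlation matrix inspection
- Variance inflation factor (VIF)
- Lasso as a feature selection method
- Select K-Best (f_regression and mutual_info_regression)
- Recursive feature elimination (RFE)
- Sequential forward/backward feature selection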

Dataset

We start with the Auto MPG dataset, which has seven features, and use the "mpg" (miles per gallon) column as the target variable.

import pandas as pd

pd.set_option('display.max_colwidth', None)  # show the full content of each column

url = "https://archive.ics.uci.edu/ml/machine-learning-databases/auto-mpg/auto-mpg.data"
column_names = ["mpg", "cylinders", "displacement", "horsepower", "weight",
                "acceleration", "model year", "origin", "car name"]

# The file is whitespace-delimited and marks missing values with '?'.
# sep=r'\s+' replaces delim_whitespace=True, which recent pandas versions deprecate.
df = pd.read_csv(url, names=column_names, sep=r'\s+', na_values='?')

# Drop rows with missing values, then the non-numeric 'car name' column
df = df.dropna()
df = df.drop(columns='car name')

print(df.shape)
df.head()

The dataset still needs some preprocessing. We start with the outliers.

# Function to count outliers in each column
def count_outliers(df):
    outlier_counts = {}
    for col in df.columns:
        if df[col].dtype != 'object':  # Exclude non-numeric columns
            Q1 = df[col].quantile(0.25)
            Q3 = df[col].quantile(0.75)
            IQR = Q3 - Q1
            lower_bound = Q1 - 1.5 * IQR
            upper_bound = Q3 + 1.5 * IQR
            lower_bound_outliers = df[df[col] < lower_bound]
            upper_bound_outliers = df[df[col] > upper_bound]
            total_outliers = len(lower_bound_outliers) + len(upper_bound_outliers)
            outlier_counts[col] = total_outliers
    return outlier_counts

count_outliers(df)

The result:

{'mpg': 0,
 'cylinders': 0,
 'displacement': 0,
 'horsepower': 10,
 'weight': 0,
 'acceleration': 11,
 'model year': 0,
 'origin': 0}

"horsepower" and "acceleration" each have a few outliers.
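To make the 1.5 × IQR rule used by `count_outliers` concrete, here is a minimal worked example on a small made-up sample. The numbers and variable names are illustrative only and do not come from the Auto MPG data.

```python
import numpy as np

# Toy sample (made up for illustration): seven ordinary values and one extreme one
values = np.array([10, 12, 13, 14, 15, 16, 18, 50], dtype=float)

# np.percentile uses linear interpolation by default, like pandas' quantile()
q1, q3 = np.percentile(values, [25, 75])
iqr = q3 - q1
lower_bound = q1 - 1.5 * iqr
upper_bound = q3 + 1.5 * iqr

# Anything outside [lower_bound, upper_bound] is flagged as an outlier
outliers = values[(values < lower_bound) | (values > upper_bound)]

print(q1, q3, iqr)                # 12.75 16.5 3.75
print(lower_bound, upper_bound)   # 7.125 22.125
print(outliers)                   # [50.]
```

Only the value 50 falls outside the interval, so it is the single outlier in this sample.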

import numpy as np
import warnings

warnings.filterwarnings("ignore")

def replace_outliers_with_mean(df):
    for col in df.columns:
        if df[col].dtype != 'object':  # Exclude non-numeric columns
            Q1 = df[col].quantile(0.25)
            Q3 = df[col].quantile(0.75)
            IQR = Q3 - Q1
            lower_bound = Q1 - 1.5 * IQR
            upper_bound = Q3 + 1.5 * IQR
            # Identify outliers
            outlier_mask = (df[col] < lower_bound) | (df[col] > upper_bound)
            # Replace outliers with the column mean. Use .loc instead of the
            # chained df[col][mask] = ..., which may silently fail to modify df.
            if outlier_mask.any():
                df.loc[outlier_mask, col] = df[col].mean()
    return df

df = replace_outliers_with_mean(df)

# Replacing values shifts the quartiles, so repeat the replacement
# until count_outliers reports zero outliers
count_outliers(df)

The outliers are now gone:

{'mpg': 0,
 'cylinders': 0,
 'displacement': 0,
 'horsepower': 0,
 'weight': 0,
 'acceleration': 0,
 'model year': 0,
 'origin': 0}

The dataset is now clean, and we can move on to the feature selection methods.

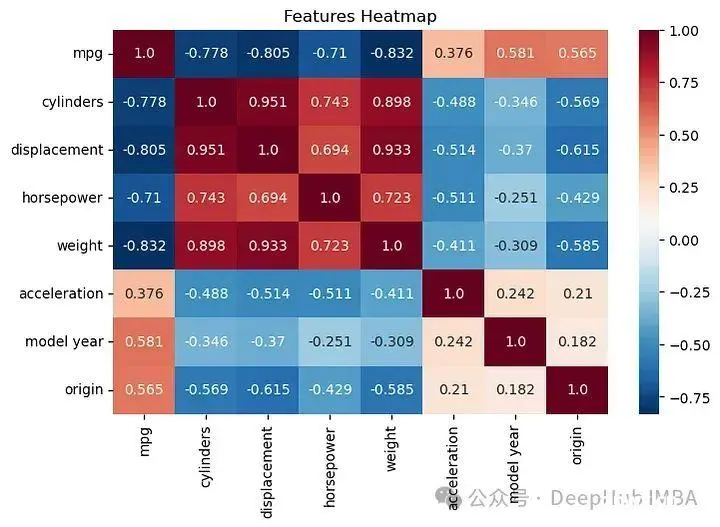

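Inspecting the Correlation Matrix

The correlation matrix shows which features are strongly correlated with the target variable (miles per gallon) and are therefore useful for prediction. It also reveals features that are highly correlated with each other. Some of these may need to be removed to avoid multicollinearity, which can improve the model's performance and accuracy.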

# Correlation Matrix
import matplotlib.pyplot as plt
import seaborn as sns

plt.figure(figsize=(8, 5))
sns.heatmap(df.corr(), annot=True, fmt='.3', cmap='RdBu_r')
plt.title('Features Heatmap')
plt.show()

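The correlation matrix shows that cylinders, displacement, horsepower and weight are strongly negatively correlated with the target variable (mpg), while model year and origin show a mild positive correlation.

This helps us identify the features with the greatest influence on the target, so that we can make better-informed feature selection decisions.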
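Reading strongly correlated feature pairs off a heatmap gets tedious as the number of features grows. The following is a minimal sketch of one way to list them programmatically. The helper name `high_corr_pairs`, the 0.8 threshold and the synthetic data are assumptions made for illustration and are not part of the original article.

```python
import numpy as np
import pandas as pd

def high_corr_pairs(frame, threshold=0.8):
    """Return (feature_a, feature_b, r) for every pair with |r| above threshold."""
    corr = frame.corr()
    cols = corr.columns
    pairs = []
    for i in range(len(cols)):
        for j in range(i + 1, len(cols)):   # upper triangle only, skip the diagonal
            r = corr.iloc[i, j]
            if abs(r) > threshold:
                pairs.append((cols[i], cols[j], round(float(r), 3)))
    return pairs

# Synthetic stand-in data: 'b' is almost a linear copy of 'a', 'c' is independent
rng = np.random.default_rng(0)
a = rng.normal(size=200)
demo = pd.DataFrame({
    'a': a,
    'b': 2 * a + rng.normal(scale=0.1, size=200),
    'c': rng.normal(size=200),
})

pairs = high_corr_pairs(demo, threshold=0.8)
print(pairs)
```

On the article's data, the same helper could be called as `high_corr_pairs(df.drop(columns='mpg'))` to list candidate collinear features.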

We first build a baseline model with all features:

from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler
from sklearn.linear_model import LinearRegression

y = df['mpg']

# Select predictor variables
X_base = df.drop(columns=['mpg'])

# Linear regression function
def train_and_evaluate_linear_regression(X, y, test_size=0.3, random_state=42):
    # Split the dataset into training and testing sets
    X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=test_size, random_state=random_state)

    # Normalize the features
    scaler = StandardScaler()
    X_train = scaler.fit_transform(X_train)
    X_test = scaler.transform(X_test)

    # Initialize and fit the Linear Regression model
    lr = LinearRegression()
    lr.fit(X_train, y_train)

    # Evaluate the model
    train_score = lr.score(X_train, y_train)
    test_score = lr.score(X_test, y_test)
    return train_score, test_score

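Training:

train_and_evaluate_linear_regression(X_base, y)

The result:

(0.8451296595927265, 0.8233345996149848)

This baseline model, with all seven features, gives a training score of 0.845 and a test score of 0.823. Now let's see whether we can reduce the number of features while keeping or even improving this score.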
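The scores in this article come from a single train/test split, so small differences between feature sets can be partly due to chance. As a hedged complement, here is a sketch of evaluating the same kind of scaled linear model with cross-validation. The synthetic data and the names `X_demo`, `y_demo` and `cv_scores` are assumptions for illustration and are not the article's setup.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in data (not the Auto MPG dataset): 200 rows, 3 features
rng = np.random.default_rng(0)
X_demo = rng.normal(size=(200, 3))
y_demo = 2.0 * X_demo[:, 0] - 1.0 * X_demo[:, 1] + rng.normal(scale=0.3, size=200)

# The pipeline refits the scaler inside every fold, so the held-out fold
# never influences the scaling statistics
model = make_pipeline(StandardScaler(), LinearRegression())
cv_scores = cross_val_score(model, X_demo, y_demo, cv=5, scoring='r2')

print(cv_scores.mean(), cv_scores.std())
```

The mean gives a steadier estimate than one split, and the spread across folds indicates how much a difference in the third decimal place can be trusted.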

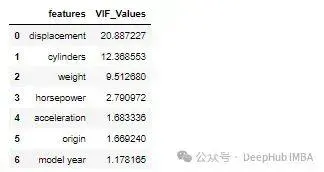

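Variance Inflation Factor (VIF)

VIF measures how strongly a given feature is correlated with the other features in the dataset. A high VIF means the feature is highly collinear with the others and may be redundant. By analyzing VIF values we can identify redundant features that contribute little to the model's predictive power and consider removing them.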

from statsmodels.stats.outliers_influence import variance_inflation_factor

# VIF score function
def standardize_and_calculate_vif(df):
    # Standardize the features
    scaler = StandardScaler()
    df_standardized = pd.DataFrame(scaler.fit_transform(df), columns=df.columns)

    # Calculate VIF
    vif = pd.DataFrame()
    vif['features'] = df_standardized.columns
    vif['VIF_Values'] = [variance_inflation_factor(df_standardized.values, i)
                         for i in range(df_standardized.shape[1])]

    # Sort by VIF_Values in descending order
    vif = vif.sort_values(by='VIF_Values', ascending=False).reset_index(drop=True)
    return vif

standardize_and_calculate_vif(df.drop(columns='mpg'))
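For intuition, the VIF of feature i is defined as 1 / (1 − R²ᵢ), where R²ᵢ is the R² obtained by regressing feature i on all the other features. The sketch below computes it directly from that definition using scikit-learn. The helper name `vif_from_r2` and the synthetic data are assumptions made for illustration.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import LinearRegression

def vif_from_r2(frame):
    """VIF_i = 1 / (1 - R_i^2), with R_i^2 from regressing column i on the others."""
    vifs = {}
    for col in frame.columns:
        others = frame.drop(columns=col)
        r2 = LinearRegression().fit(others, frame[col]).score(others, frame[col])
        vifs[col] = 1.0 / (1.0 - r2)
    return pd.Series(vifs).sort_values(ascending=False)

# Synthetic stand-in data: x2 is nearly a copy of x1, x3 is independent
rng = np.random.default_rng(0)
x1 = rng.normal(size=500)
demo = pd.DataFrame({
    'x1': x1,
    'x2': x1 + rng.normal(scale=0.1, size=500),
    'x3': rng.normal(size=500),
})

vif = vif_from_r2(demo)
print(vif)
```

The two near-duplicate columns get very large VIF values, while the independent column stays close to 1, the value for a feature that the others cannot explain at all.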

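Features with high VIF values are the usual candidates for removal. Dropping them reduces model complexity and can improve generalization, giving a simpler model without sacrificing performance. Below, cylinders and displacement are removed: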

# Selected features according to VIF values
X_vif = df[[
    'model year',
    'origin',
    'acceleration',
    'horsepower',
    'weight',
    # 'cylinders',     # removed
    # 'displacement',  # removed
]]

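We reuse the training function defined above:

train_and_evaluate_linear_regression(X_vif, y)

The result:

(0.8431221864763683, 0.8256739410002708)

The training score is almost unchanged, while the test score rises slightly (from 0.823 to 0.826). This suggests that dropping these features made the model a little more robust, that is, it generalizes slightly better.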

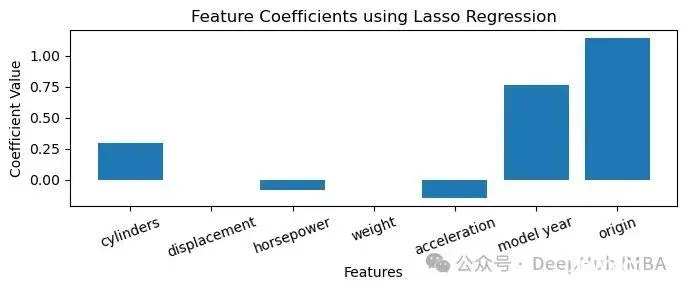

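Lasso as Feature Selection

Lasso regression is usually used for regularization to prevent overfitting, where a model scores well on the training data but performs poorly on unseen test data. Lasso can also serve as a feature selection technique: it shrinks coefficients toward zero, often to exactly zero, which helps identify the most important predictors. This reduces the number of features in the model and focuses attention on the features that have a substantial effect on the target variable.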

from sklearn.linear_model import Lasso
from sklearn.metrics import r2_score
import matplotlib.pyplot as plt

y = df['mpg']

# Select predictor variables
X = df.drop(columns=['mpg'])

# Split the dataset into training and testing sets
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)

# Initialize and fit the Lasso model
lasso = Lasso(alpha=0.1)
lasso.fit(X_train, y_train)

# Get the coefficients of the features
coefficients = lasso.coef_

# Plot the coefficients
plt.figure(figsize=(7, 3))
plt.bar(X.columns, coefficients)
plt.xlabel('Features')
plt.ylabel('Coefficient Value')
plt.title('Feature Coefficients using Lasso Regression')
plt.xticks(rotation=20)
plt.tight_layout()
plt.show()

We remove weight and displacement, the two features with the smallest coefficients:

# Selected features according to Lasso coefficient values
X_lasso = df[[
    'model year',
    'origin',
    'acceleration',
    'horsepower',
    # 'weight',        # removed
    'cylinders',
    # 'displacement',  # removed
]]

Training:

train_and_evaluate_linear_regression(X_lasso, y)

The result:

(0.7881176469410339, 0.7675541084603061)

The result is noticeably worse than the baseline. One reason is that Lasso does not account for multicollinearity.
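A further caveat, offered as a hedged aside: the Lasso above is fitted on unscaled features, and the size of a Lasso coefficient depends on the units of its feature. A feature measured in the thousands (such as weight) can get a tiny coefficient even when it matters a lot. The sketch below illustrates this on made-up synthetic data. The names `z`, `scales`, `X_raw` and `y_demo` are illustrative and not from the article.

```python
import numpy as np
from sklearn.linear_model import Lasso
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in data: 4 features in very different units.
# Only the first two drive the target; the last two are pure noise.
rng = np.random.default_rng(0)
z = rng.normal(size=(500, 4))
scales = np.array([1.0, 1000.0, 10.0, 1.0])   # feature 1 is in "thousands"
X_raw = z * scales
y_demo = 3.0 * z[:, 0] - 2.0 * z[:, 1] + rng.normal(scale=0.3, size=500)

# Lasso on raw features: the coefficient of feature 1 is tiny only because of its units
coef_raw = Lasso(alpha=0.1).fit(X_raw, y_demo).coef_

# Lasso on standardized features: magnitudes are now comparable across features
X_std = StandardScaler().fit_transform(X_raw)
coef_std = Lasso(alpha=0.1).fit(X_std, y_demo).coef_

print(np.round(coef_raw, 4))
print(np.round(coef_std, 4))
```

After standardization the two informative features have the largest coefficients and the noise features are shrunk to exactly zero. Ranking features by the raw coefficients would instead have discarded an important feature.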

Select K-Best

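We use Select K-Best with two scoring functions.

1. f_regression

With f_regression, the selector computes the correlation between each feature and the target variable and expresses the strength of that relationship as an F-statistic. It is well suited to continuous features and a continuous target, and it captures linear relationships. Keeping the K features with the highest F-statistics helps build a model that is both simple and effective.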
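To show where the F-statistic comes from, the sketch below computes it by hand from the Pearson correlation r of each feature with the target, F = r² / (1 − r²) × (n − 2), and compares it with scikit-learn's `f_regression`. The synthetic data and variable names are assumptions for illustration.

```python
import numpy as np
from sklearn.feature_selection import f_regression

# Synthetic stand-in data: feature 0 is strongly related to y,
# feature 1 weakly, feature 2 not at all
rng = np.random.default_rng(0)
n = 300
X_demo = rng.normal(size=(n, 3))
y_demo = 2.0 * X_demo[:, 0] + 0.3 * X_demo[:, 1] + rng.normal(size=n)

# Pearson correlation of each feature with the target
r = np.array([np.corrcoef(X_demo[:, j], y_demo)[0, 1] for j in range(X_demo.shape[1])])

# F-statistic of a one-feature linear regression, with n - 2 degrees of freedom
f_manual = r**2 / (1.0 - r**2) * (n - 2)

f_sklearn, p_values = f_regression(X_demo, y_demo)
print(np.round(f_manual, 2))
print(np.round(f_sklearn, 2))
```

Because the score is a monotonic function of r², ranking features by f_regression is the same as ranking them by the absolute value of their correlation with the target.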

from sklearn.feature_selection import SelectKBest, f_regression

# all features
X = df[[
    'model year',
    'origin',
    'acceleration',
    'horsepower',
    'weight',
    'cylinders',
    'displacement',
]]

# K best scoring function (uses the X and y defined above)
def K_best_score_list(score_func):
    X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)

    # normalize
    scaler = StandardScaler()
    X_train = scaler.fit_transform(X_train)
    X_test = scaler.transform(X_test)

    # k='all' keeps every feature; here we only want the scores
    selector = SelectKBest(score_func, k='all')
    selector.fit(X_train, y_train)

    # pvalues_ is None for score functions that return scores only,
    # such as mutual_info_regression
    feature_scores = pd.DataFrame({'Feature': X.columns,
                                   'Score': selector.scores_,
                                   'p-Value': selector.pvalues_})
    feature_scores = feature_scores.sort_values(by='Score', ascending=False)
    return feature_scores

Scores for f_regression:

K_best_score_list(f_regression)

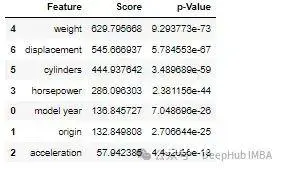

A high score indicates that the feature is strongly correlated with the target variable.

# Evaluate the test R2 score for each number of features k.
# For every k, the top-k features are chosen by score_func.
def evaluate_features(X, y, score_func):
    # Split the data into training and testing sets
    X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)

    # normalize
    scaler = StandardScaler()
    X_train = scaler.fit_transform(X_train)
    X_test = scaler.transform(X_test)

    r2_score_list = []
    selected_features_list = []

    for k in range(1, len(X.columns) + 1):
        selector = SelectKBest(score_func, k=k)
        x_train_kbest = selector.fit_transform(X_train, y_train)
        x_test_kbest = selector.transform(X_test)

        lr = LinearRegression()
        lr.fit(x_train_kbest, y_train)

        # R2 score on the test data
        r2_score_kbest = lr.score(x_test_kbest, y_test)
        r2_score_list.append(r2_score_kbest)

        # Get selected feature names
        selected_feature_mask = selector.get_support()
        selected_features = X.columns[selected_feature_mask].tolist()
        selected_features_list.append(selected_features)

    x = np.arange(1, len(X.columns) + 1)
    result_df = pd.DataFrame({'k': x,
                              'r2_score_test_data': r2_score_list,
                              'selected_features': selected_features_list})
    return result_df

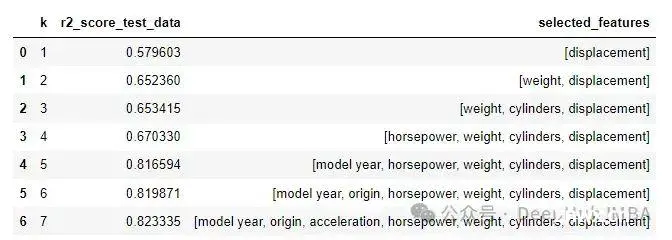

Evaluate the R² score of the features selected by f_regression:

evaluate_features(X, y, f_regression)

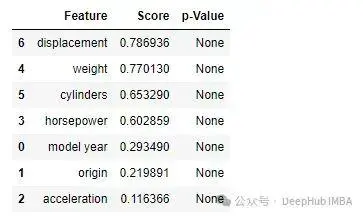

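2. mutual_info_regression

With mutual_info_regression, the selector estimates how much information each feature shares with the target variable. A high mutual information score means the feature is closely related to the target, including through non-linear relationships, and is therefore valuable for prediction. Keeping the K features with the highest scores ensures that the model contains the most informative features.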

from sklearn.feature_selection import mutual_info_regression

K_best_score_list(mutual_info_regression)

evaluate_features(X, y, mutual_info_regression)
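One practical difference between the two scoring functions is that mutual information can detect non-linear dependence that a correlation-based F-test misses. The following sketch illustrates this on made-up data in which the target depends on a feature only through its square. The data and variable names are assumptions for illustration.

```python
import numpy as np
from sklearn.feature_selection import f_regression, mutual_info_regression

# Synthetic stand-in data: y depends on feature 0 through a square, feature 1 is noise
rng = np.random.default_rng(0)
X_demo = rng.uniform(-1, 1, size=(500, 2))
y_demo = X_demo[:, 0] ** 2 + rng.normal(scale=0.05, size=500)

f_scores, _ = f_regression(X_demo, y_demo)
mi_scores = mutual_info_regression(X_demo, y_demo, random_state=0)

# Linear correlation between feature 0 and y is close to zero by symmetry
linear_r = np.corrcoef(X_demo[:, 0], y_demo)[0, 1]

print(np.round(f_scores, 2))    # linear score: may miss the quadratic dependence
print(np.round(mi_scores, 2))   # mutual information: feature 0 should stand out
print(round(float(linear_r), 3))
```

Since the final model here is a linear regression, a feature that scores high only on mutual information may still need a transformation before a linear model can use it.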

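Recursive Feature Elimination (RFE)

Recursive feature elimination (RFE) iteratively removes the least important features from the model. In each iteration it relies on a measure such as the model coefficients or feature importances to decide which feature to drop. The process repeats until the desired number of features remains, so that only the most relevant predictors are kept in the final model.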
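The elimination loop can be sketched by hand in a few lines. The helper `manual_rfe` below is a hypothetical illustration written for this example, using the absolute size of linear regression coefficients on standardized data as the importance measure. It is compared with scikit-learn's `RFE` on made-up synthetic data.

```python
import numpy as np
from sklearn.feature_selection import RFE
from sklearn.linear_model import LinearRegression
from sklearn.preprocessing import StandardScaler

def manual_rfe(X, y, n_features_to_select):
    """Drop the feature with the smallest |coefficient| until n features remain."""
    remaining = list(range(X.shape[1]))
    while len(remaining) > n_features_to_select:
        coefs = LinearRegression().fit(X[:, remaining], y).coef_
        weakest = int(np.argmin(np.abs(coefs)))
        remaining.pop(weakest)   # refit without the weakest feature next round
    return remaining

# Synthetic stand-in data: features 0 and 1 matter most, 2 a little, 3 and 4 not at all
rng = np.random.default_rng(0)
X_demo = StandardScaler().fit_transform(rng.normal(size=(300, 5)))
y_demo = (4.0 * X_demo[:, 0] - 3.0 * X_demo[:, 1] + 0.5 * X_demo[:, 2]
          + rng.normal(scale=0.5, size=300))

manual_selected = manual_rfe(X_demo, y_demo, n_features_to_select=2)

rfe = RFE(estimator=LinearRegression(), n_features_to_select=2).fit(X_demo, y_demo)
rfe_selected = np.flatnonzero(rfe.support_).tolist()

print(manual_selected, rfe_selected)
```

Standardizing first matters here for the same reason as with Lasso: coefficients are only comparable as importance measures when the features share a common scale.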

from sklearn.feature_selection import RFE

# all features
X = df[[
    'cylinders',
    'weight',
    'model year',
    'displacement',
    'acceleration',
    'horsepower',
    'origin'
]]

# function evaluate_rfe_features(X, y)
def evaluate_rfe_features(X, y):
    # Split the data into training and testing sets
    X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)

    # normalize
    scaler = StandardScaler()
    X_train = scaler.fit_transform(X_train)
    X_test = scaler.transform(X_test)

    r2_score_list = []
    selected_features_list = []

    for k in range(1, len(X.columns) + 1):
        lr = LinearRegression()
        rfe = RFE(estimator=lr, n_features_to_select=k)
        x_train_rfe = rfe.fit_transform(X_train, y_train)
        x_test_rfe = rfe.transform(X_test)

        lr.fit(x_train_rfe, y_train)
        # y_preds_rfe = lr.predict(x_test_rfe)

        # Calculate the r2_score as an example of performance evaluation
        r2_score_rfe = lr.score(x_test_rfe, y_test)
        r2_score_list.append(r2_score_rfe)

        # Get selected feature names
        selected_feature_mask = rfe.get_support()
        selected_features = X.columns[selected_feature_mask].tolist()
        selected_features_list.append(selected_features)

    x = np.arange(1, len(X.columns) + 1)
    result_df = pd.DataFrame({'k': x, 'r2_score': r2_score_list, 'selected_features': selected_features_list})
    return result_df

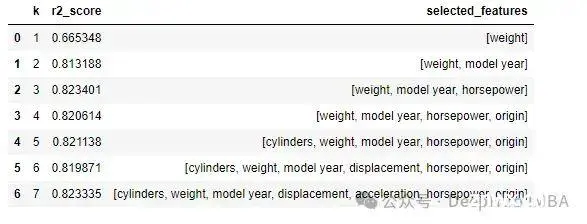

Test:

evaluate_rfe_features(X, y)

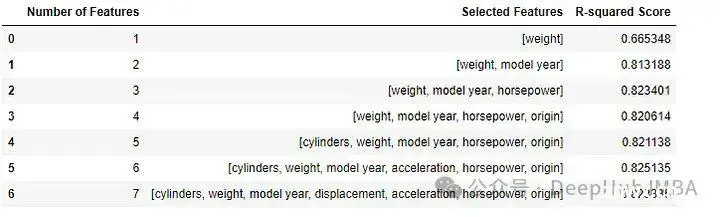

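Sequential Forward and Backward Selection

Sequential forward selection (SFS) starts from an empty feature set and adds one feature at a time, at each step choosing the feature that improves model performance the most.

Sequential backward selection (SBS) starts from a model with all features and removes one feature at a time, at each step choosing the feature whose removal hurts model performance the least, until a stopping criterion is met.

Both methods refine the feature set iteratively to reach the best model performance. Forward selection suits building a model up from a few features, while backward selection suits simplifying a full model step by step. Both help determine which features matter most for predicting the target, yielding a model that is both lean and effective.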
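As a hedged alternative to mlxtend, scikit-learn ships its own `SequentialFeatureSelector`, where the direction is chosen with `direction='forward'` or `direction='backward'`. The sketch below runs both directions on made-up synthetic data. The data and variable names are assumptions for illustration, and this is not the implementation used for the results in this article.

```python
import numpy as np
from sklearn.feature_selection import SequentialFeatureSelector
from sklearn.linear_model import LinearRegression

# Synthetic stand-in data: features 0 and 1 matter most, 2 a little, 3 and 4 not at all
rng = np.random.default_rng(0)
X_demo = rng.normal(size=(300, 5))
y_demo = (4.0 * X_demo[:, 0] - 3.0 * X_demo[:, 1] + 0.5 * X_demo[:, 2]
          + rng.normal(scale=0.5, size=300))

selected = {}
for direction in ('forward', 'backward'):
    selector = SequentialFeatureSelector(
        LinearRegression(),
        n_features_to_select=2,
        direction=direction,
        scoring='r2',
        cv=5,   # each candidate feature set is scored by 5-fold cross-validation
    )
    selector.fit(X_demo, y_demo)
    selected[direction] = np.flatnonzero(selector.get_support()).tolist()

print(selected)
```

Both directions agree on this easy example. On real data with correlated features, forward and backward selection can end up with different subsets, which is why the article runs both.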

from mlxtend.feature_selection import SequentialFeatureSelector as SFS
from sklearn.metrics import r2_score

def feature_selection_with_sfs_sbs(X, y, test_size=0.3, random_state=42, forward=True, floating=False, scoring='r2', cv=5):
    # List of feature names
    feature_names = X.columns.tolist()

    # Splitting the dataset into training and testing sets
    X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=test_size, random_state=random_state)

    # Standardize the data (recommended for models like linear regression)
    scaler = StandardScaler()
    X_train_scaled = scaler.fit_transform(X_train)
    X_test_scaled = scaler.transform(X_test)

    # Initialize lists to store results
    selected_features = []
    r2_scores = []

    # Iterate over different numbers of features
    for k in range(1, X.shape[1] + 1):  # Iterate from 1 to total number of features
        # Initialize the Sequential Feature Selector
        sfs = SFS(LinearRegression(),
                  k_features=k,
                  forward=forward,
                  floating=floating,
                  scoring=scoring,  # Use specified scoring for evaluation
                  cv=cv)

        # Fit the Sequential Feature Selector to the training data
        sfs.fit(X_train_scaled, y_train)

        # Transform the data to only include the selected features
        X_train_selected = sfs.transform(X_train_scaled)
        X_test_selected = sfs.transform(X_test_scaled)

        # Train a new model using only the selected features
        model = LinearRegression()
        model.fit(X_train_selected, y_train)

        # Evaluate the model on the test set using R-squared score
        y_pred = model.predict(X_test_selected)
        r2 = r2_score(y_test, y_pred)

        # Store results
        selected_features.append([feature_names[i] for i in sfs.k_feature_idx_])
        r2_scores.append(r2)

    # Create a DataFrame to store the results
    results_df = pd.DataFrame({
        'Number of Features': list(range(1, X.shape[1] + 1)),
        'Selected Features': selected_features,
        'R-squared Score': r2_scores
    })
    return results_df

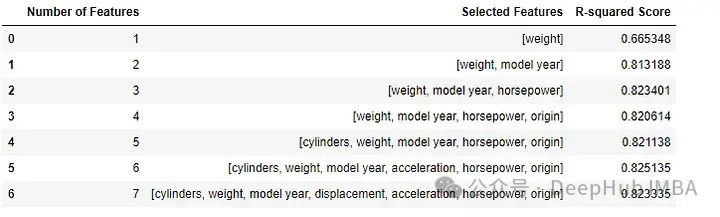

Sequential forward selection:

feature_selection_with_sfs_sbs(X, y,
                               forward=True,
                               scoring='r2',
                               cv=0)

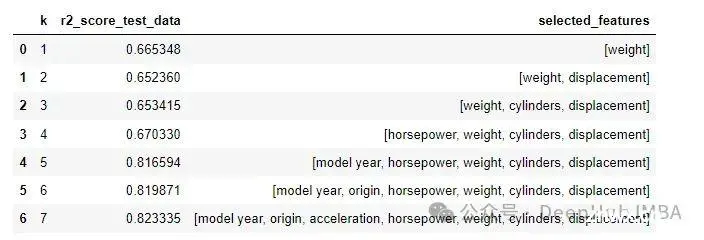

Sequential backward selection:

feature_selection_with_sfs_sbs(X, y,
                               forward=False,
                               scoring='r2',
                               cv=0)

Using forward and backward sequential feature selection, we identified the best features.

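Summary

Recursive feature elimination (RFE), sequential forward selection (SFS) and sequential backward selection (SBS) all indicate that 'weight', 'model year' and 'horsepower' are the most important features. Using only these three features we obtain a solid R² score of 0.823, the same as the 0.823 of the baseline model with seven features. (These R² scores are computed on test data that was not used during training.)

This shows that careful feature selection lets us simplify the model without losing performance, making it more efficient and easier to interpret. With fewer features, training and prediction also need fewer computing resources, so computational efficiency improves while prediction quality is maintained.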

https://avoid.overfit.cn/post/193a9516b36c48a7987766746ef20e8f

Author: kaiku
