PyTorch schedulers (learning-rate schedulers)

云朵不吃雨 2024-09-18 14:01:02

Introduction to schedulers

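A scheduler is a mechanism for adjusting the learning rate used by an optimization algorithm. The learning rate is the key hyperparameter that controls how large each parameter update is, and a scheduler changes it during training according to a predefined policy.

The optimizer updates the model's parameters from the gradients of the loss function, while the scheduler adjusts a specific hyperparameter used during optimization, usually the learning rate. By adjusting the learning rate, a scheduler can help the optimizer search the parameter space more effectively, lower the risk of getting stuck in a local minimum, and speed up convergence.

Schedulers make it possible to implement more elaborate training strategies, such as learning-rate warmup, periodic adjustment, or an abrupt drop in the learning rate. These strategies can matter a great deal for how well the optimizer performs.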
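To make the division of labor concrete, here is a minimal sketch in plain Python (no PyTorch required). The function `halve_every`, the toy objective f(w) = w**2, and all numeric values are assumptions chosen for illustration; the point is only that the "scheduler" picks the learning rate for each step and the "optimizer" uses it to update the parameter.

```python
def halve_every(step, base_lr=0.4, every=10):
    """Hypothetical schedule: halve the learning rate every `every` steps."""
    return base_lr * 0.5 ** (step // every)


def train(total_steps=30, w=5.0):
    """Minimize f(w) = w**2 by gradient descent with a scheduled learning rate."""
    history = []
    for step in range(total_steps):
        lr = halve_every(step)   # scheduler role: choose this step's learning rate
        grad = 2.0 * w           # gradient of w**2
        w = w - lr * grad        # optimizer role: update the parameter
        history.append((lr, w))
    return history


history = train()
print(history[0], history[10], history[-1])
```

In real PyTorch code the same ordering applies: call `optimizer.step()` first, then `scheduler.step()`.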

Learning-rate plotting helpers

We set up a simple model, iterate for 100 epochs, and plot how the learning rate changes over the iterations.

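<code>import torch
from torch.optim import lr_scheduler
import matplotlib.pyplot as plt

# Assumed setup: the original listing does not show these definitions,
# so the values below are placeholders chosen for illustration.
model = torch.nn.Linear(2, 1)   # any small model works
initial_lr = 1.0
total_step = 100


# Simulate training: the learning rate is updated once per loop iteration
def get_lr_scheduler(optim, scheduler, total_step):
    '''
    get lr values
    '''
    lrs = []
    for step in range(total_step):
        lr_current = optim.param_groups[0]['lr']
        lrs.append(lr_current)
        if scheduler is not None:
            scheduler.step()
    return lrs


# Pass the learning rate in place of the loss, to simulate a learning rate
# that adapts to a monitored metric (used for ReduceLROnPlateau)
def get_lr_scheduler1(optim, scheduler, total_step):
    '''
    get lr values
    '''
    lrs = []
    for step in range(total_step):
        lr_current = optim.param_groups[0]['lr']
        lrs.append(lr_current)
        if scheduler is not None:
            scheduler.step(lr_current)
    return lrs
</code>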

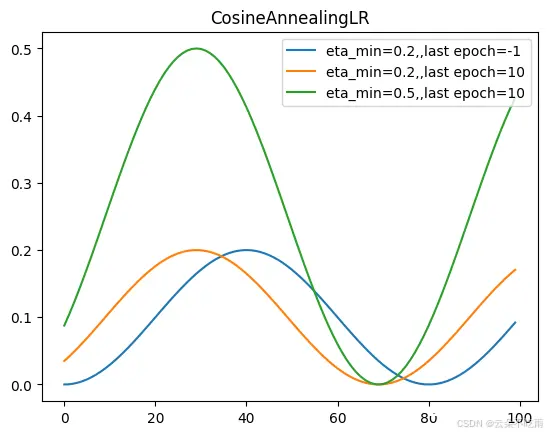

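Cosine annealing (CosineAnnealingLR)

torch.optim.lr_scheduler.CosineAnnealingLR(optimizer, T_max, eta_min=0, last_epoch=-1, verbose='deprecated')

A cosine-shaped annealing schedule: as the name suggests, the learning-rate curve follows a cosine. A cosine curve is fixed by four quantities: its period, its maximum and minimum values, and its phase. Here the period is 2*T_max, the maximum is the initial learning rate, and eta_min is the minimum of the curve. last_epoch is the number of steps already completed when a model is reloaded, and it sets the starting position on the cosine curve: with -1 the parameter has no effect and the phase starts at 0, otherwise the curve resumes at phase last_epoch*pi/T_max.

When last_epoch=-1, the schedule starts from the optimizer's initial learning rate. Note that because the schedule is defined recursively, the learning rate can be modified by other operators outside this scheduler at the same time. If the learning rate is set solely by this scheduler, the learning rate at each step becomes:

$$\eta_t = \eta_{\min} + \frac{1}{2}\left(\eta_{\max} - \eta_{\min}\right)\left(1 + \cos\left(\frac{T_{cur}}{T_{\max}}\pi\right)\right)$$

where $\eta_{\max}$ is the initial learning rate and $T_{cur}$ is the number of epochs since the start of the schedule.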
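The closed form documented for this scheduler, eta_t = eta_min + 0.5 * (eta_max - eta_min) * (1 + cos(pi * T_cur / T_max)), can be checked with a few lines of plain Python. This is a sketch of the formula only, not PyTorch's recursive implementation; the function name and `base_lr=1.0` are assumptions.

```python
import math


def cosine_annealing_lr(t, base_lr, T_max, eta_min=0.0):
    """Closed-form cosine annealing value at step t (sketch of the formula)."""
    return eta_min + 0.5 * (base_lr - eta_min) * (1 + math.cos(math.pi * t / T_max))


# T_max=40 and eta_min=0.2 match the plotted example; base_lr=1.0 is assumed.
lrs = [cosine_annealing_lr(t, base_lr=1.0, T_max=40, eta_min=0.2) for t in range(100)]
print(lrs[0], lrs[20], lrs[40], lrs[80])
```

The value falls from base_lr to eta_min over T_max steps and climbs back over the next T_max steps, which is why the period is 2*T_max.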

<code>def plot_cosine_aneal():
    plt.clf()
    # Curve 1: eta_min=0.2, default start (last_epoch=-1)
    optim = torch.optim.Adam([{'params': model.parameters(),
                               'initial_lr': initial_lr}], lr=initial_lr)
    scheduler = lr_scheduler.CosineAnnealingLR(
        optim, T_max=40, eta_min=0.2)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='eta_min=0.2, last_epoch=-1')

    # Curve 2: resume from last_epoch=10.
    # If the optimizer is not re-defined, the initial lr will be lrs[-1].
    optim = torch.optim.Adam([{'params': model.parameters(),
                               'initial_lr': initial_lr}], lr=initial_lr)
    scheduler = lr_scheduler.CosineAnnealingLR(
        optim, T_max=40, eta_min=0.2, last_epoch=10)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='eta_min=0.2, last_epoch=10')

    # Curve 3: eta_min=0.5 (the optimizer from curve 2 is reused here)
    scheduler = lr_scheduler.CosineAnnealingLR(
        optim, T_max=40, eta_min=0.5, last_epoch=10)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='eta_min=0.5, last_epoch=10')

    plt.title('CosineAnnealingLR')
    plt.legend()
    plt.show()


plot_cosine_aneal()
</code>

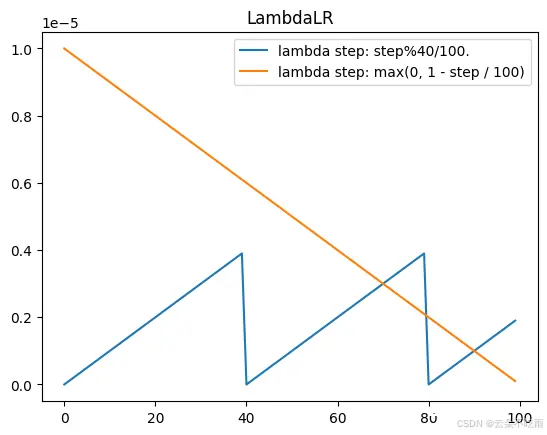

LambdaLR

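torch.optim.lr_scheduler.LambdaLR(optimizer, lr_lambda, last_epoch=-1, verbose='deprecated')

LambdaLR adjusts the learning rate according to a user-defined lambda function, or a list of such functions (one per parameter group). It is especially useful when you want a custom learning-rate schedule that the standard schedulers do not provide.

A lambda function is a small anonymous function defined with Python's lambda keyword.

The function should take one argument (the epoch index) and return a multiplier; the learning rate is the initial learning rate multiplied by that value.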
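The rule can be sketched without PyTorch: the multiplier is always applied to the initial learning rate, not to the previous value. The helper `lambda_lr` and `base_lr = 1.0` are assumptions for illustration; the two lambdas are the ones used in the plotting example.

```python
def lambda_lr(base_lr, lr_lambda, epoch):
    """LambdaLR rule (sketch): scale the initial lr by lr_lambda(epoch)."""
    return base_lr * lr_lambda(epoch)


sawtooth = lambda step: step % 40 / 100.          # restarts every 40 steps
linear_decay = lambda step: max(0, 1 - step / 100)  # reaches 0 at step 100

base_lr = 1.0  # assumed initial learning rate
saw = [lambda_lr(base_lr, sawtooth, e) for e in (0, 39, 40)]
lin = [lambda_lr(base_lr, linear_decay, e) for e in (0, 50, 100)]
print(saw, lin)
```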

<code>def plot_lambdalr():
    plt.clf()
    # Lambda 1: sawtooth that restarts every 40 steps
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.LambdaLR(
        optim, lr_lambda=lambda step: step % 40 / 100.)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='lambda step: step%40/100.')

    # Lambda 2: linear decay to zero over 100 steps
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.LambdaLR(
        optim, lr_lambda=lambda step: max(0, 1 - step / 100))
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='lambda step: max(0, 1 - step / 100)')

    plt.title('LambdaLR')
    plt.legend()
    plt.show()


plot_lambdalr()
</code>

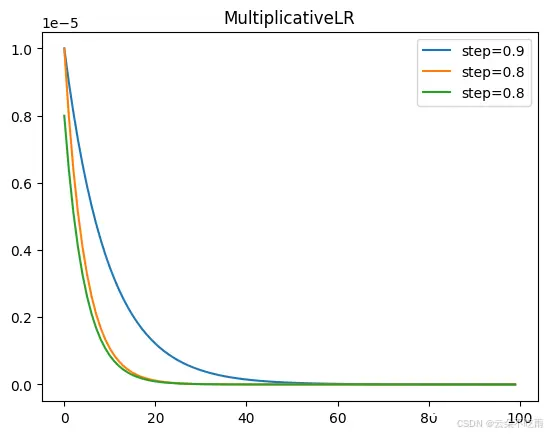

MultiplicativeLR

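torch.optim.lr_scheduler.MultiplicativeLR(optimizer, lr_lambda, last_epoch=-1, verbose='deprecated')

Multiplies the learning rate of each parameter group by the factor returned by the given function. Unlike LambdaLR, the factor is applied to the current learning rate at every epoch, so its effect accumulates; this is commonly used to apply different learning rates at different stages of training. When last_epoch=-1, the schedule starts from the optimizer's initial learning rate.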
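The difference from LambdaLR is that the factor compounds. A minimal sketch of that cumulative rule in plain Python (the helper name and the starting value 1.0 are assumptions):

```python
def multiplicative_lrs(base_lr, lr_lambda, total_steps):
    """Cumulative rule (sketch): lr_t = lr_{t-1} * lr_lambda(t) for t >= 1."""
    lrs = [base_lr]
    for epoch in range(1, total_steps):
        lrs.append(lrs[-1] * lr_lambda(epoch))
    return lrs


lrs = multiplicative_lrs(1.0, lambda epoch: 0.9, 5)
print(lrs)
```

With a constant factor this reduces to exponential decay, which is what the plotted curves for 0.9 and 0.8 show.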

<code>def plot_multiplicativelr():
    plt.clf()
    # Constant factor 0.9 per step
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.MultiplicativeLR(
        optim, lr_lambda=lambda step: 0.9)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='factor=0.9')

    # Constant factor 0.8 per step
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.MultiplicativeLR(
        optim, lr_lambda=lambda step: 0.8)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='factor=0.8')

    # Factor 0.8, resuming from last_epoch=20 (requires 'initial_lr' in the param group)
    optim = torch.optim.Adam([{'params': model.parameters(),
                               'initial_lr': initial_lr}], lr=initial_lr)
    scheduler = lr_scheduler.MultiplicativeLR(
        optim, lr_lambda=lambda step: 0.8, last_epoch=20)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='factor=0.8, last_epoch=20')

    plt.title('MultiplicativeLR')
    plt.legend()
    plt.show()


plot_multiplicativelr()
</code>

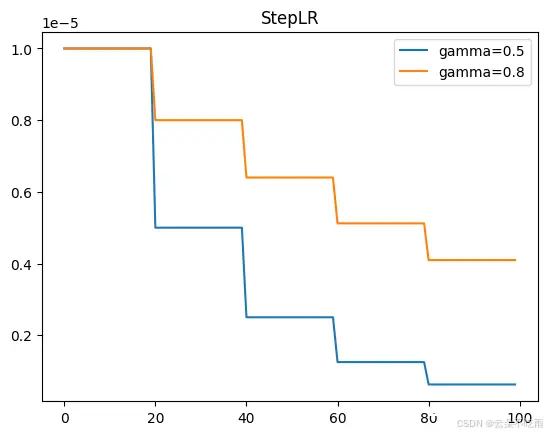

StepLR

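torch.optim.lr_scheduler.StepLR(optimizer, step_size, gamma=0.1, last_epoch=-1, verbose='deprecated')

Decays the learning rate of each parameter group by a factor of gamma every step_size epochs. Note that this decay can happen at the same time as other changes to the learning rate made outside this scheduler. When last_epoch=-1, the schedule starts from the optimizer's initial learning rate.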
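In closed form, and assuming nothing else touches the learning rate, this is lr = base_lr * gamma ** (epoch // step_size). A plain-Python sketch (the function name and base_lr=1.0 are assumptions):

```python
def step_lr(base_lr, epoch, step_size, gamma=0.1):
    """StepLR closed form (sketch): one decay by gamma per completed step_size block."""
    return base_lr * gamma ** (epoch // step_size)


# step_size=20 and gamma=0.5 match the plotted example
values = [step_lr(1.0, e, step_size=20, gamma=0.5) for e in (0, 19, 20, 45)]
print(values)
```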

<code>def plot_steplr():
    plt.clf()
    # gamma=0.5
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.StepLR(
        optim, step_size=20, gamma=0.5)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='gamma=0.5')

    # gamma=0.8
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.StepLR(
        optim, step_size=20, gamma=0.8)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='gamma=0.8')

    plt.title('StepLR')
    plt.legend()
    plt.show()


plot_steplr()
</code>

MultiStepLR

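torch.optim.lr_scheduler.MultiStepLR(optimizer, milestones, gamma=0.1, last_epoch=-1, verbose='deprecated')

Decays the learning rate of each parameter group by a factor of gamma each time the epoch count reaches one of the milestones. Note that this decay can happen at the same time as other changes to the learning rate made outside this scheduler. When last_epoch=-1, the schedule starts from the optimizer's initial learning rate.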
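Equivalently, the learning rate is base_lr times gamma raised to the number of milestones already reached. A short sketch of that closed form in plain Python (function name and base_lr=1.0 are assumptions):

```python
from bisect import bisect_right


def multistep_lr(base_lr, epoch, milestones, gamma=0.1):
    """MultiStepLR closed form (sketch): one decay per milestone already reached."""
    passed = bisect_right(sorted(milestones), epoch)
    return base_lr * gamma ** passed


# milestones=[50, 80] and gamma=0.5 match the first plotted example
values = [multistep_lr(1.0, e, [50, 80], gamma=0.5) for e in (0, 49, 50, 80)]
print(values)
```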

<code>def plot_multisteplr():
    plt.clf()
    # example 1
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.MultiStepLR(
        optim, milestones=[50, 80], gamma=0.5)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='milestones=[50, 80], gamma=0.5')

    # example 2
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.MultiStepLR(
        optim, milestones=[50, 70, 90], gamma=0.5)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='milestones=[50, 70, 90], gamma=0.5')

    # example 3
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.MultiStepLR(
        optim, milestones=[50, 70, 90], gamma=0.8)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='milestones=[50, 70, 90], gamma=0.8')

    plt.title('MultiStepLR')
    plt.legend()
    plt.show()


plot_multisteplr()
</code>

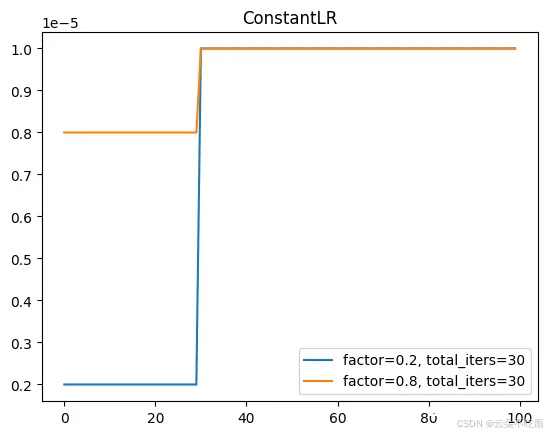

ConstantLR

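torch.optim.lr_scheduler.ConstantLR(optimizer, factor=0.3333333333333333, total_iters=5, last_epoch=-1, verbose='deprecated')

Multiplies the learning rate of each parameter group by a small constant factor until the epoch count reaches a predefined milestone, total_iters; after that the learning rate returns to its initial value. Note that this multiplication can happen at the same time as other changes to the learning rate made outside this scheduler. When last_epoch=-1, the schedule starts from the optimizer's initial learning rate.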
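As a piecewise rule this is simply "scaled before total_iters, unscaled afterwards". A plain-Python sketch under the assumption that nothing else modifies the learning rate (function name and base_lr=1.0 are illustrative):

```python
def constant_lr(base_lr, epoch, factor=1 / 3, total_iters=5):
    """ConstantLR closed form (sketch): scaled lr until total_iters, then base_lr."""
    return base_lr * factor if epoch < total_iters else base_lr


# factor=0.2 and total_iters=30 match the first plotted example
values = [constant_lr(1.0, e, factor=0.2, total_iters=30) for e in (0, 29, 30, 99)]
print(values)
```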

<code>def plot_constantlr():
    plt.clf()
    # factor=0.2
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.ConstantLR(
        optim, factor=0.2, total_iters=30)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='factor=0.2, total_iters=30')

    # factor=0.8
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.ConstantLR(
        optim, factor=0.8, total_iters=30)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='factor=0.8, total_iters=30')

    plt.title('ConstantLR')
    plt.legend()
    plt.show()


plot_constantlr()
</code>

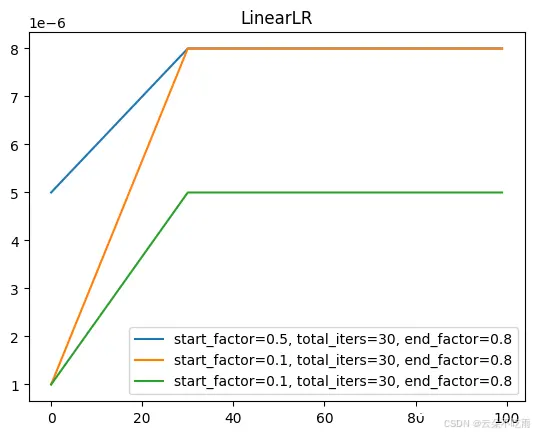

LinearLR

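torch.optim.lr_scheduler.LinearLR(optimizer, start_factor=0.3333333333333333, end_factor=1.0, total_iters=5, last_epoch=-1, verbose='deprecated')

Scales the learning rate of each parameter group by a multiplicative factor that changes linearly from start_factor to end_factor, until the epoch count reaches a predefined milestone, total_iters. Note that this change can happen at the same time as other changes to the learning rate made outside this scheduler. When last_epoch=-1, the schedule starts from the optimizer's initial learning rate.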
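The factor is a straight-line interpolation that stops at total_iters. A plain-Python sketch of that closed form (function name and base_lr=1.0 are assumptions; the other values match the first plotted example):

```python
def linear_lr(base_lr, epoch, start_factor=1 / 3, end_factor=1.0, total_iters=5):
    """LinearLR closed form (sketch): interpolate the factor, then hold end_factor."""
    progress = min(epoch, total_iters) / total_iters
    return base_lr * (start_factor + (end_factor - start_factor) * progress)


values = [linear_lr(1.0, e, start_factor=0.5, end_factor=0.8, total_iters=30)
          for e in (0, 15, 30, 99)]
print(values)
```

After total_iters the learning rate stays at base_lr * end_factor, so with end_factor below 1 it never returns to the initial value.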

<code>def plot_linearlr():
    plt.clf()
    # start_factor=0.5, end_factor=0.8
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.LinearLR(
        optim, start_factor=0.5, total_iters=30, end_factor=0.8)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='start_factor=0.5, total_iters=30, end_factor=0.8')

    # start_factor=0.1, end_factor=0.8
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.LinearLR(
        optim, start_factor=0.1, total_iters=30, end_factor=0.8)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='start_factor=0.1, total_iters=30, end_factor=0.8')

    # start_factor=0.1, end_factor=0.5 (the label now matches the arguments)
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.LinearLR(
        optim, start_factor=0.1, total_iters=30, end_factor=0.5)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='start_factor=0.1, total_iters=30, end_factor=0.5')

    plt.title('LinearLR')
    plt.legend()
    plt.show()


plot_linearlr()
</code>

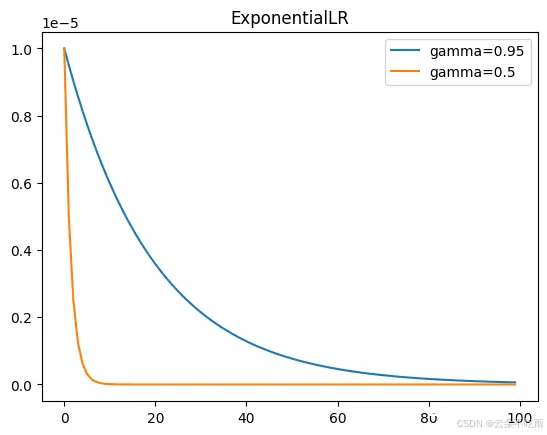

ExponentialLR

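torch.optim.lr_scheduler.ExponentialLR(optimizer, gamma, last_epoch=-1, verbose='deprecated')

Decays the learning rate of each parameter group by a factor of gamma every epoch. When last_epoch=-1, the schedule starts from the optimizer's initial learning rate.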
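Written out, this is lr = base_lr * gamma ** epoch. A two-line sketch in plain Python (function name and base_lr=1.0 are assumptions) shows how quickly the two plotted settings diverge:

```python
def exponential_lr(base_lr, epoch, gamma):
    """ExponentialLR closed form (sketch): one factor of gamma per epoch."""
    return base_lr * gamma ** epoch


slow = [exponential_lr(1.0, e, gamma=0.95) for e in range(3)]
fast = [exponential_lr(1.0, e, gamma=0.5) for e in range(3)]
print(slow, fast)
```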

<code>def plot_exponential():
    plt.clf()
    # gamma=0.95
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.ExponentialLR(
        optim, gamma=0.95)
    lrs = get_lr_scheduler(optim, scheduler, 100)
    plt.plot(lrs, label='gamma=0.95')

    # gamma=0.5
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.ExponentialLR(
        optim, gamma=0.5)
    lrs = get_lr_scheduler(optim, scheduler, 100)
    plt.plot(lrs, label='gamma=0.5')

    plt.title('ExponentialLR')
    plt.legend()
    plt.show()


plot_exponential()
</code>

PolynomialLR

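torch.optim.lr_scheduler.PolynomialLR(optimizer, total_iters=5, power=1.0, last_epoch=-1, verbose='deprecated')

Decays the learning rate of each parameter group with a polynomial function over the given total number of iterations, total_iters. When last_epoch=-1, the schedule starts from the optimizer's initial learning rate.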
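The polynomial in question is (1 - t / total_iters) ** power, held at zero once t reaches total_iters. A plain-Python sketch of that closed form (function name and base_lr=1.0 are assumptions):

```python
def polynomial_lr(base_lr, epoch, total_iters=5, power=1.0):
    """PolynomialLR closed form (sketch): decays to 0 at total_iters, then stays there."""
    remaining = 1.0 - min(epoch, total_iters) / total_iters
    return base_lr * remaining ** power


linear = polynomial_lr(1.0, 25, total_iters=50, power=1.0)     # halfway, power 1
quadratic = polynomial_lr(1.0, 75, total_iters=100, power=2.0)  # 0.25 ** 2
finished = polynomial_lr(1.0, 80, total_iters=50, power=0.9)   # past total_iters
print(linear, quadratic, finished)
```

A power below 1 keeps the learning rate high for most of the run and drops it sharply near the end; a power above 1 does the opposite.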

<code>def plot_PolynomialLR():
    plt.clf()
    # total_iters=100, power=0.09
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.PolynomialLR(optim, total_iters=100, power=0.09)
    lrs = get_lr_scheduler(optim, scheduler, 100)
    plt.plot(lrs, label='total_iters=100, power=0.09')

    # total_iters=50, power=0.9 (the label now matches the arguments)
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.PolynomialLR(optim, total_iters=50, power=0.9)
    lrs = get_lr_scheduler(optim, scheduler, 100)
    plt.plot(lrs, label='total_iters=50, power=0.9')

    # total_iters=50, power=0.1
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.PolynomialLR(optim, total_iters=50, power=0.1)
    lrs = get_lr_scheduler(optim, scheduler, 100)
    plt.plot(lrs, label='total_iters=50, power=0.1')

    plt.title('PolynomialLR')
    plt.legend()
    plt.show()


plot_PolynomialLR()
</code>

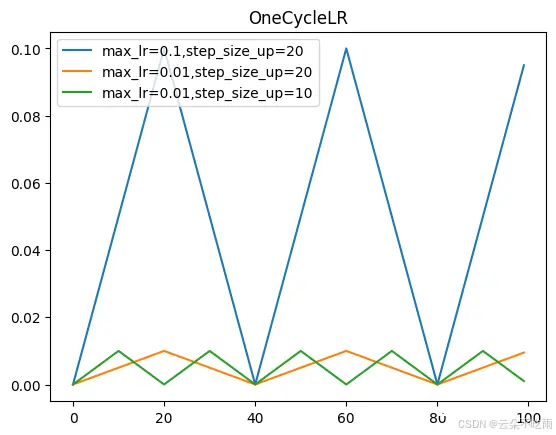

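CyclicLR

torch.optim.lr_scheduler.CyclicLR(optimizer, base_lr, max_lr, step_size_up=2000, step_size_down=None, mode='triangular', gamma=1.0, scale_fn=None, scale_mode='cycle', cycle_momentum=True, base_momentum=0.8, max_momentum=0.9, last_epoch=-1, verbose='deprecated')

Sets the learning rate of each parameter group according to the cyclical learning rate (CLR) policy. The policy cycles the learning rate between two boundaries at a constant frequency, as detailed in the paper "Cyclical Learning Rates for Training Neural Networks". The distance between the two boundaries can be scaled on a per-iteration or per-cycle basis.

The cyclical learning rate policy changes the learning rate after every batch, so step() should be called after a batch has been used for training.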
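For the basic triangular mode, the policy from the cited paper can be written as a short closed form. The sketch below is plain Python, assumes equal up and down phases of `step_size` iterations, and is not PyTorch's own code; the function name and the base_lr value are illustrative.

```python
import math


def triangular_clr(step, base_lr, max_lr, step_size):
    """Triangular cyclical lr (sketch): rise for step_size steps, fall for step_size."""
    cycle = math.floor(1 + step / (2 * step_size))
    x = abs(step / step_size - 2 * cycle + 1)   # distance from the peak, in [0, 1]
    return base_lr + (max_lr - base_lr) * max(0.0, 1 - x)


# max_lr=0.1 and step_size=20 match the first plotted example; base_lr is assumed
values = [triangular_clr(s, base_lr=0.001, max_lr=0.1, step_size=20)
          for s in (0, 10, 20, 40)]
print(values)
```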

<code>def plot_CyclicLR():
    plt.clf()
    # SGD with momentum is used because cycle_momentum=True (the default)
    # also cycles the optimizer's momentum
    optim = torch.optim.SGD(model.parameters(), lr=initial_lr, momentum=0.9)
    scheduler = lr_scheduler.CyclicLR(
        optim, base_lr=initial_lr, max_lr=0.1, step_size_up=20)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='max_lr=0.1,step_size_up=20')

    # Lower peak: max_lr=0.01
    optim = torch.optim.SGD(model.parameters(), lr=initial_lr, momentum=0.9)
    scheduler = lr_scheduler.CyclicLR(
        optim, base_lr=initial_lr, max_lr=0.01, step_size_up=20)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='max_lr=0.01,step_size_up=20')

    # Shorter rise: step_size_up=10
    optim = torch.optim.SGD(model.parameters(), lr=initial_lr, momentum=0.9)
    scheduler = lr_scheduler.CyclicLR(
        optim, base_lr=initial_lr, max_lr=0.01, step_size_up=10)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='max_lr=0.01,step_size_up=10')

    plt.title('CyclicLR')
    plt.legend()
    plt.show()


plot_CyclicLR()
</code>

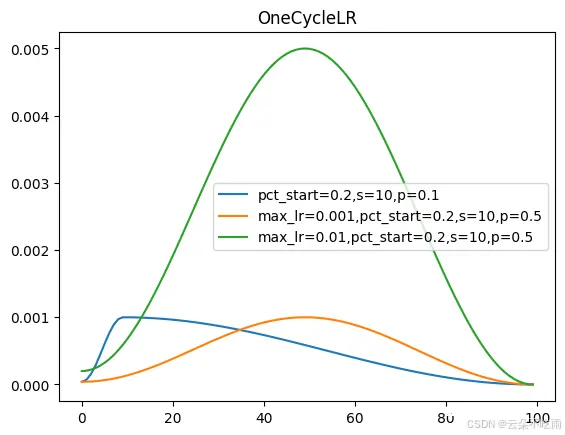

OneCycleLR

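torch.optim.lr_scheduler.OneCycleLR(optimizer, max_lr, total_steps=None, epochs=None, steps_per_epoch=None, pct_start=0.3, anneal_strategy='cos', cycle_momentum=True, base_momentum=0.85, max_momentum=0.95, div_factor=25.0, final_div_factor=10000.0, three_phase=False, last_epoch=-1, verbose='deprecated')

Sets the learning rate of each parameter group according to the 1cycle policy: the learning rate rises from an initial value (max_lr / div_factor) to max_lr over the first pct_start fraction of the steps, then anneals down to a much smaller minimum. The total number of steps is given either by total_steps or by epochs * steps_per_epoch, and step() should be called after every batch.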

<code>def plot_onecyclelr():
    plt.clf()
    # epochs * steps_per_epoch = 100 steps in total; the scheduler raises an
    # error if it is stepped more than that, so total_step must not exceed 100
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.OneCycleLR(
        optim, max_lr=0.001, epochs=10, steps_per_epoch=10, pct_start=0.1)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='max_lr=0.001, pct_start=0.1')

    # Longer warmup: pct_start=0.5
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.OneCycleLR(
        optim, max_lr=0.001, epochs=10, steps_per_epoch=10, pct_start=0.5)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='max_lr=0.001, pct_start=0.5')

    # Higher peak: max_lr=0.005
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.OneCycleLR(
        optim, max_lr=0.005, epochs=10, steps_per_epoch=10, pct_start=0.5)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='max_lr=0.005, pct_start=0.5')

    plt.title('OneCycleLR')
    plt.legend()
    plt.show()


plot_onecyclelr()
</code>

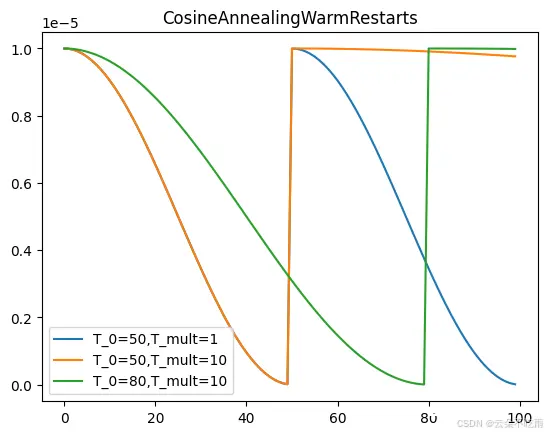

CosineAnnealingWarmRestarts

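torch.optim.lr_scheduler.CosineAnnealingWarmRestarts(optimizer, T_0, T_mult=1, eta_min=0, last_epoch=-1, verbose='deprecated')

Cosine annealing with warm restarts: the learning rate follows a cosine decay from the initial value to eta_min over T_0 epochs, then jumps back to the initial value and repeats, with each new cycle T_mult times as long as the previous one.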
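The restart behavior of this scheduler (a cosine decay over T_0 epochs that then starts over, with each cycle T_mult times longer than the last) can be sketched in plain Python. This is an illustration of the rule for integer epochs, not PyTorch's implementation; the function name and base_lr=1.0 are assumptions.

```python
import math


def warm_restart_lr(epoch, base_lr, T_0, T_mult=1, eta_min=0.0):
    """Cosine annealing with warm restarts (sketch) for an integer epoch index."""
    T_i, T_cur = T_0, epoch
    while T_cur >= T_i:          # skip over completed cycles
        T_cur -= T_i
        T_i *= T_mult            # each new cycle is T_mult times longer
    return eta_min + 0.5 * (base_lr - eta_min) * (1 + math.cos(math.pi * T_cur / T_i))


same_len = [warm_restart_lr(e, 1.0, T_0=50, T_mult=1) for e in (0, 25, 50)]
doubling = [warm_restart_lr(e, 1.0, T_0=50, T_mult=2) for e in (50, 100, 150)]
print(same_len, doubling)
```

With T_mult=10 and only 100 plotted steps, as in the example that follows, the second cycle is 500 steps long, so only its first part is visible.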

<code>def plot_CosineAnnealingWarmRestarts():
    plt.clf()
    # T_0=50, T_mult=1: every cycle lasts 50 steps
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.CosineAnnealingWarmRestarts(
        optim, eta_min=0., T_0=50, T_mult=1)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='T_0=50,T_mult=1')

    # T_0=50, T_mult=10: the second cycle is 10 times longer
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.CosineAnnealingWarmRestarts(
        optim, eta_min=0., T_0=50, T_mult=10)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='T_0=50,T_mult=10')

    # T_0=80, T_mult=10
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.CosineAnnealingWarmRestarts(
        optim, eta_min=0., T_0=80, T_mult=10)
    lrs = get_lr_scheduler(optim, scheduler, total_step)
    plt.plot(lrs, label='T_0=80,T_mult=10')

    plt.title('CosineAnnealingWarmRestarts')
    plt.legend()
    plt.show()


plot_CosineAnnealingWarmRestarts()
</code>

ChainedScheduler

torch.optim.lr_scheduler.ChainedScheduler(schedulers)

Chains a list of learning-rate schedulers. It takes a list of chainable schedulers and, with a single call, runs their step() functions one after another.
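One way to picture chaining is as a sequence of update rules that each modify the learning rate left behind by the previous one. The plain-Python sketch below is a simplified model of that idea, not PyTorch's implementation; `constant_rule` and `exponential_rule` are hypothetical stand-ins for recursive ConstantLR-style and ExponentialLR-style updates.

```python
def constant_rule(lr, epoch, factor=0.1, total_iters=2):
    """Scale by `factor` at the start, undo the scaling at total_iters."""
    if epoch == 0:
        return lr * factor
    if epoch == total_iters:
        return lr / factor
    return lr


def exponential_rule(lr, epoch, gamma=0.9):
    """Multiply by gamma on every epoch after the first."""
    return lr if epoch == 0 else lr * gamma


def chained(base_lr, rules, total_steps):
    """Apply every rule in order at each epoch; each sees the previous rule's result."""
    lr, lrs = base_lr, []
    for epoch in range(total_steps):
        for rule in rules:
            lr = rule(lr, epoch)
        lrs.append(lr)
    return lrs


lrs = chained(1.0, [constant_rule, exponential_rule], 4)
print(lrs)
```

Because the effects multiply, chaining works best with schedulers that scale the current learning rate rather than overwrite it.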

<code>def plot_ChainedScheduler():
    plt.clf()
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler1 = lr_scheduler.LinearLR(
        optim, start_factor=0.2, total_iters=5, end_factor=0.9)
    # Alternative second scheduler:
    # scheduler2 = lr_scheduler.ExponentialLR(
    #     optim, gamma=0.99)
    scheduler2 = lr_scheduler.OneCycleLR(
        optim, max_lr=0.001, epochs=10, steps_per_epoch=10, pct_start=0.1)
    # A single scheduler.step() call now steps scheduler1, then scheduler2
    scheduler = torch.optim.lr_scheduler.ChainedScheduler([scheduler1, scheduler2])
    lrs = get_lr_scheduler(optim, scheduler, 100)
    plt.plot(lrs)

    plt.title('ChainedScheduler')
    plt.show()


plot_ChainedScheduler()
</code>

SequentialLR

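torch.optim.lr_scheduler.SequentialLR(optimizer, schedulers, milestones, last_epoch=-1, verbose='deprecated')

Takes a list of schedulers that are expected to be called one after another during optimization, together with milestone points that give the exact intervals, that is, which scheduler should be active at a given epoch.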
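The switching logic can be sketched in plain Python: the milestones decide which schedule is active, and each schedule counts epochs from the point where it took over. The helper name and the two example schedules (a linear warmup followed by exponential decay) are assumptions for illustration, not PyTorch code.

```python
from bisect import bisect_right


def sequential_lr(epoch, schedules, milestones):
    """Pick the schedule active at `epoch` and evaluate it at its local epoch (sketch)."""
    idx = bisect_right(milestones, epoch)
    start = milestones[idx - 1] if idx > 0 else 0
    return schedules[idx](epoch - start)


warmup = lambda t: 0.2 + 0.7 * min(t, 10) / 10   # factor 0.2 -> 0.9 over 10 epochs
decay = lambda t: 0.95 ** t                      # restarts from 1.0 at the milestone

values = [sequential_lr(e, [warmup, decay], milestones=[10]) for e in (0, 5, 10, 12)]
print(values)
```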

<code>def plot_SequentialLR():
    plt.clf()
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler1 = lr_scheduler.LinearLR(
        optim, start_factor=0.2, total_iters=10, end_factor=0.9)
    scheduler2 = lr_scheduler.OneCycleLR(
        optim, max_lr=0.01, epochs=10, steps_per_epoch=10, pct_start=0.1)
    # scheduler1 runs for the first 10 steps, scheduler2 from step 10 onwards
    scheduler = torch.optim.lr_scheduler.SequentialLR(
        optim, [scheduler1, scheduler2], milestones=[10])
    lrs = get_lr_scheduler(optim, scheduler, 100)
    plt.plot(lrs)

    plt.title('SequentialLR')
    plt.show()


plot_SequentialLR()
</code>

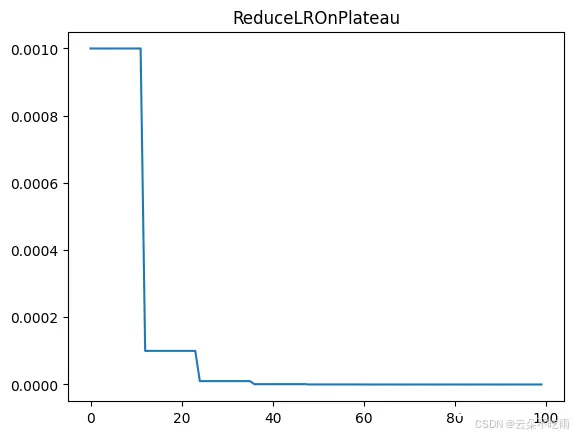

ReduceLROnPlateau

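torch.optim.lr_scheduler.ReduceLROnPlateau(optimizer, mode='min', factor=0.1, patience=10, threshold=0.0001, threshold_mode='rel', cooldown=0, min_lr=0, eps=1e-08, verbose='deprecated')

Reduces the learning rate when a metric has stopped improving. This scheduling strategy lowers the learning rate once a monitored metric (such as the validation loss) no longer improves significantly, which helps fine-tune the model and can help limit overfitting.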
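The core of the plateau rule is a counter of epochs without improvement. The class below is a deliberately simplified plain-Python sketch for mode='min': it ignores threshold, cooldown, min_lr and eps, and the class name `PlateauSketch` and the sample losses are assumptions for illustration.

```python
class PlateauSketch:
    """Simplified 'min'-mode plateau rule (no threshold, cooldown or min_lr)."""

    def __init__(self, lr, factor=0.1, patience=10):
        self.lr, self.factor, self.patience = lr, factor, patience
        self.best = float('inf')
        self.num_bad = 0

    def step(self, metric):
        if metric < self.best:            # improvement: remember it, reset the counter
            self.best, self.num_bad = metric, 0
        else:                             # no improvement this epoch
            self.num_bad += 1
        if self.num_bad > self.patience:  # patience exhausted: cut the lr
            self.lr *= self.factor
            self.num_bad = 0
        return self.lr


sched = PlateauSketch(lr=1.0, factor=0.1, patience=2)
lrs = [sched.step(loss) for loss in [1.0, 0.9, 0.9, 0.9, 0.9, 0.8]]
print(lrs)
```

Unlike the other schedulers, step() here needs the monitored value as an argument, which is why the plotting code uses a separate helper.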

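<code>def plot_ReduceLROnPlateau():
    plt.clf()
    optim = torch.optim.Adam(model.parameters(), lr=initial_lr)
    scheduler = lr_scheduler.ReduceLROnPlateau(optim, mode='min')
    # get_lr_scheduler1 passes the current lr as the monitored metric; it stays
    # flat between reductions, so the lr is cut again once patience runs out
    lrs = get_lr_scheduler1(optim, scheduler, 100)
    plt.plot(lrs)

    plt.title('ReduceLROnPlateau')
    plt.show()


plot_ReduceLROnPlateau()
</code>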
