Deploying a Kubernetes (K8s) Cluster

何苏三月 2024-06-29 17:07:04

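Contents

1. Create Three Virtual Machines

2. Install Docker on Every Virtual Machine

3. Install kubelet

3.1 Requirements

3.2 Prepare Every Server

3.3 Install kubelet, kubeadm, and kubectl on Every Server

4. Bootstrap the Cluster with kubeadm

4.1 Master Server

4.2 node1 and node2 Servers

4.3 Initialize the Master Node

4.4 Join the Worker Nodes to the Cluster

5. What If the Token Expires?

6. Install the Dashboard Web UI

6.1 Installation

6.2 Expose the Port

6.3 Access the Web UI

6.4 Create an Access Account

6.5 Generate a Token

6.6 Log In

7. Closing Notes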

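1. Create Three Virtual Machines

For the detailed steps, see the earlier tutorials listed below. I recommend installing one machine first and then cloning it, which is much faster.

Note: after cloning, remember to change the MAC address, IP address, UUID, and hostname of each clone. (And don't forget to take a snapshot at the end.)

Installing a VMware Virtual Machine and Linux (CentOS 7), 何苏三月's blog on CSDN

Cloning a Linux System (CentOS), 何苏三月's blog on CSDN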

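2. Install Docker on Every Virtual Machine

See: Installing Docker, Common Commands, and Common Containers (MySQL, Redis, etc.), 何苏三月's blog on CSDN

That tutorial installs Docker with:

yum install docker-ce docker-ce-cli containerd.io

This installs the latest version by default. Here we pin specific versions instead, so that the Kubernetes installation later on does not run into compatibility problems:

yum install -y docker-ce-20.10.7 docker-ce-cli-20.10.7 containerd.io-1.4.6

All other steps stay the same.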

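3. Install kubelet

3.1 Requirements

A compatible Linux host. The Kubernetes project provides generic instructions for Debian- and Red Hat-based Linux distributions, as well as for distributions without a package manager.

2 GB or more of RAM per machine (any less leaves little room for your applications).

2 or more CPU cores.

Full network connectivity between all machines in the cluster (a public or a private network is fine). Set firewall rules that allow this traffic.

No duplicate hostname, MAC address, or product_uuid among the nodes, so give every machine a different hostname. See the official kubeadm installation documentation for details.

Certain ports must be open on the machines, and the machines must be able to reach each other on the internal network. See the official kubeadm installation documentation for details.

Swap disabled. You must disable swap for the kubelet to work properly, and it should be turned off permanently.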
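The RAM and CPU requirements above can be checked with a small script before going further. This is only a sketch: the function names are made up for illustration, and the memory threshold is an assumption (a "2 GB" VM usually reports a little less than 2097152 kB in /proc/meminfo, so the bar is set lower).

```shell
#!/bin/sh
# Thresholds; MIN_MEM_KB is an assumed value, override it if you prefer.
MIN_MEM_KB=${MIN_MEM_KB:-1700000}
MIN_CPUS=${MIN_CPUS:-2}

# meets_minimum MEM_KB CPUS: exit status 0 when both values are large enough.
meets_minimum() {
  [ "$1" -ge "$MIN_MEM_KB" ] && [ "$2" -ge "$MIN_CPUS" ]
}

# Read the real values from this machine (Linux only).
node_mem_kb() { awk '/^MemTotal:/ { print $2 }' /proc/meminfo; }
node_cpus()   { grep -c '^processor' /proc/cpuinfo; }

# preflight_report: print a one-line verdict for the current machine.
preflight_report() {
  mem=$(node_mem_kb)
  cpus=$(node_cpus)
  if meets_minimum "$mem" "$cpus"; then
    echo "OK: ${mem} kB RAM, ${cpus} CPU(s)"
  else
    echo "TOO SMALL: ${mem} kB RAM, ${cpus} CPU(s)"
  fi
}
```

Running preflight_report on each of the three VMs gives a quick yes/no answer. The product_uuid mentioned above can be read with cat /sys/class/dmi/id/product_uuid and compared by hand.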

3.2 Prepare Every Server

# Give each machine its own hostname
hostnamectl set-hostname xxxx

# Set SELinux to permissive mode (effectively disabling it)
sudo setenforce 0
sudo sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config

# Turn swap off now, and comment it out in /etc/fstab so it stays off after a reboot
swapoff -a
sed -ri 's/.*swap.*/#&/' /etc/fstab

# Let iptables see bridged traffic
cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf
br_netfilter
EOF

cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF

sudo sysctl --system
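Because the sed command above edits /etc/fstab in place, it can be reassuring to preview its effect and to confirm afterwards that no swap entry is still active. The helpers below are a hedged sketch; their names are invented for this example and are not part of any tool.

```shell
# active_swap_entries [FILE]: print fstab lines that still enable swap,
# i.e. lines whose third field is "swap" and that are not commented out.
active_swap_entries() {
  awk '!/^[[:space:]]*#/ && $3 == "swap"' "${1:-/etc/fstab}"
}

# preview_swap_fix [FILE]: show what the sed expression from the setup step
# would produce, without modifying FILE (there is no -i flag here).
preview_swap_fix() {
  sed -E 's/.*swap.*/#&/' "${1:-/etc/fstab}"
}
```

After the setup step, active_swap_entries should print nothing. Note that the sed expression comments out every line containing the word swap, so an already commented line simply gains a second #.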

3.3 为每台服务器安装kubelet、kubeadm、kubectl

kubelet - “厂长”

kubectl - 程序员敲命令行的命令窗

kubeadm - 引导创建集群的

# 1.先配置K8S去哪儿下载的地址信息

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg

http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

exclude=kubelet kubeadm kubectl

EOF

# 2. 安装

sudo yum install -y kubelet-1.20.9 kubeadm-1.20.9 kubectl-1.20.9 --disableexcludes=kubernetes

# 3. 启动kubelet

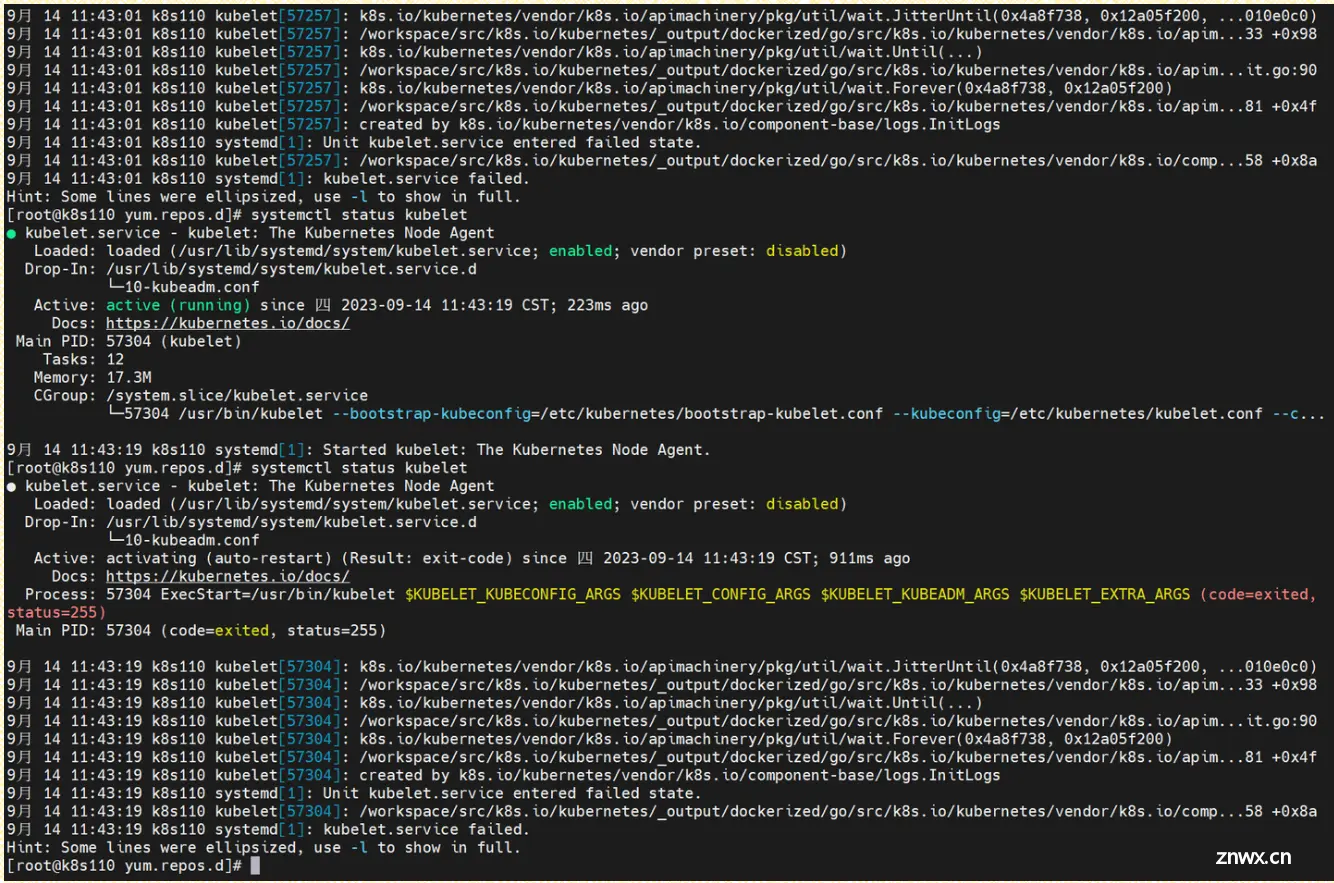

sudo systemctl enable --now kubelet

💡systemctl status kubelet 查看状态会发现,kubelet 现在每隔几秒就会重启,因为它陷入了一个等待 kubeadm 指令的死循环。这是正常现象不用管!

4. Bootstrap the Cluster with kubeadm

4.1 Master Server

Download the images the cluster needs. Run the following on the master machine only:

# 1. Write a script that pulls every required image in a for loop
sudo tee ./images.sh <<-'EOF'
#!/bin/bash
images=(
kube-apiserver:v1.20.9
kube-proxy:v1.20.9
kube-controller-manager:v1.20.9
kube-scheduler:v1.20.9
coredns:1.7.0
etcd:3.4.13-0
pause:3.2
)
for imageName in "${images[@]}" ; do
docker pull "registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/$imageName"
done
EOF

# 2. Make the script executable and run it to pull the images
chmod +x ./images.sh && ./images.sh
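After the script finishes, it is worth confirming that every image really arrived, since a single failed pull only shows up later during kubeadm init. A minimal sketch follows; expected_images and missing_images are hypothetical helper names, and the image list is copied from images.sh above.

```shell
REGISTRY=registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images

# The image list from images.sh, one name per line.
expected_images() {
  cat <<'EOF'
kube-apiserver:v1.20.9
kube-proxy:v1.20.9
kube-controller-manager:v1.20.9
kube-scheduler:v1.20.9
coredns:1.7.0
etcd:3.4.13-0
pause:3.2
EOF
}

# missing_images: read "repository:tag" lines on stdin (the local image list)
# and print every expected image that is absent from that list.
missing_images() {
  local_list=$(cat)
  expected_images | while read -r name; do
    printf '%s\n' "$local_list" | grep -qxF "$REGISTRY/$name" || echo "$name"
  done
}
```

A typical call would be docker images --format '{{.Repository}}:{{.Tag}}' | missing_images, which prints nothing when all seven images are present.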

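4.2 node1 and node2 Servers

As the diagram shows, worker nodes also need to run kube-proxy. We could pull only that image, but to be on the safe side we can simply pull all of them.

The procedure is exactly the same as in 4.1.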

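4.3 Initialize the Master Node

1. First, add a hostname mapping for the master server (k8s110) on every server:

# On all machines, map the master's address to cluster-endpoint; change the IP to your own internal IP
echo "192.168.37.110 cluster-endpoint" >> /etc/hosts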
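Running the echo line twice leaves a duplicate entry in /etc/hosts. If the step might be repeated (for example on cloned VMs), an idempotent variant can help. This is a sketch only; ensure_host_entry is a name invented here.

```shell
# ensure_host_entry IP NAME [FILE]: append "IP NAME" to FILE (default /etc/hosts)
# only if no line already mentions NAME, so re-running adds no duplicates.
# Caveat: a commented-out line that mentions NAME also counts as "present".
ensure_host_entry() {
  file=${3:-/etc/hosts}
  if grep -qE "[[:space:]]$2([[:space:]]|\$)" "$file"; then
    echo "$2 already present in $file"
  else
    echo "$1 $2" >> "$file"
    echo "added $1 $2 to $file"
  fi
}
```

For the setup in this article the call would be ensure_host_entry 192.168.37.110 cluster-endpoint, run as root on every machine.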

2. Then run the master initialization on the k8s110 server only:

# Initialize the master node
kubeadm init \
  --apiserver-advertise-address=192.168.37.110 \
  --control-plane-endpoint=cluster-endpoint \
  --image-repository registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images \
  --kubernetes-version v1.20.9 \
  --service-cidr=10.96.0.0/16 \
  --pod-network-cidr=192.168.50.0/24

# None of the network ranges may overlap: --pod-network-cidr, --service-cidr, and the network of --apiserver-advertise-address must all be disjoint
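The non-overlap rule can be checked with plain shell arithmetic before running kubeadm init. The sketch below is illustrative rather than part of kubeadm, and all three function names are made up. Each CIDR is converted to its first and last address as integers, and two ranges overlap when each one starts before the other ends.

```shell
# ip_to_int A.B.C.D: print the address as a 32-bit integer.
# (The function body is a subshell, so IFS and "set --" do not leak out.)
ip_to_int() (
  IFS=.
  set -- $1
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
)

# cidr_range CIDR: print "first last" as integers; a bare IP counts as /32.
cidr_range() (
  case $1 in
    */*) ip=${1%/*}; bits=${1#*/} ;;
    *)   ip=$1; bits=32 ;;
  esac
  base=$(ip_to_int "$ip")
  mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  first=$(( base & mask ))
  last=$(( first | (0xFFFFFFFF ^ mask) ))
  echo "$first $last"
)

# cidrs_overlap A B: exit status 0 when the two ranges share an address.
cidrs_overlap() (
  set -- $(cidr_range "$1") $(cidr_range "$2")
  [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
)
```

With the values used in this article, cidrs_overlap 10.96.0.0/16 192.168.50.0/24 fails (no overlap), which is what we want. By contrast, cidrs_overlap 192.168.0.0/16 192.168.37.110 succeeds, so a pod network of 192.168.0.0/16 would collide with the master's address.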

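If initialization fails with an error about IP forwarding (net.ipv4.ip_forward) not being enabled, run:

sysctl -w net.ipv4.ip_forward=1

Then run the initialization again.

When you see the following output, initialization has succeeded.

This output matters because it explains how to start using the cluster, so here it is copied out as text: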

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

  export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of control-plane nodes by copying certificate authorities
and service account keys on each node and then running the following as root:

  kubeadm join cluster-endpoint:6443 --token 3e54se.alzs9d1mkf30f25w \
    --discovery-token-ca-cert-hash sha256:689c076e294bdbb588103a51aaa7248b8a0df34bde634a6189d311ad46a02856 \
    --control-plane

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join cluster-endpoint:6443 --token 3e54se.alzs9d1mkf30f25w \
    --discovery-token-ca-cert-hash sha256:689c076e294bdbb588103a51aaa7248b8a0df34bde634a6189d311ad46a02856

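So let's follow its instructions step by step.

3. Create the directory, copy the config file, and set its ownership as instructed:

mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

Then list all the nodes in the cluster:

# List all nodes in the cluster
kubectl get nodes

The k8s110 server is now the master node, but its status is NotReady.

That is fine. Following the instructions, the next step is to install a network plugin.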

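4. Install a network plugin

There are several options; we will use Calico.

curl https://docs.projectcalico.org/v3.20/manifests/calico.yaml -O

Once the download succeeds, we have the calico.yaml manifest.

Important 💡: the Calico manifest assumes the default pod network 192.168.0.0/16. If you passed a different --pod-network-cidr when initializing the master (as we did above), open this file and replace the default with the value you used.

With the manifest ready, apply it to install everything Calico needs:

# Create resources in the cluster from a manifest (the same command works for any resource, not just Calico)
kubectl apply -f calico.yaml

If the command reports an error instead, the YAML file may be damaged, for example by wrong line endings. Downloading it again fixes that.

How do we see which applications are running in the cluster?

# List the containers running on this machine
docker ps
# The cluster-wide counterpart: list the Pods in all namespaces
kubectl get pods -A
# A running application is called a container in Docker and a Pod in Kubernetes; the reason is a topic for later

With that, the master node is ready!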

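4.4 Join the Worker Nodes to the Cluster

The output printed after the master was initialized includes this step:

kubeadm join cluster-endpoint:6443 --token 3e54se.alzs9d1mkf30f25w \
    --discovery-token-ca-cert-hash sha256:689c076e294bdbb588103a51aaa7248b8a0df34bde634a6189d311ad46a02856

Run it on each of the other two servers.

If joining fails, check that the firewall is turned off. Once it is, run the following and retry the join:

sysctl -w net.ipv4.ip_forward=1

Then go to the master and check the nodes.

We can also use the Linux command watch -n 1 kubectl get pods -A to refresh the Pod status every second.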
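Instead of watching the list by eye, the Pods that are not yet up can be filtered out of the kubectl output. The following is a rough sketch with made-up function names. It assumes the usual column order of kubectl get pods -A --no-headers (NAMESPACE NAME READY STATUS RESTARTS AGE).

```shell
# not_ready_pods: read `kubectl get pods -A --no-headers` output on stdin and
# print "namespace/name status" for every Pod that is neither Running nor Completed.
not_ready_pods() {
  awk '$4 != "Running" && $4 != "Completed" { print $1 "/" $2, $4 }'
}

# wait_for_pods: poll every 5 seconds until no Pod is left in another state.
# This needs a working kubectl, so it is only defined here, not called.
wait_for_pods() {
  while [ -n "$(kubectl get pods -A --no-headers | not_ready_pods)" ]; do
    sleep 5
  done
  echo "all pods are Running"
}
```

On the master, kubectl get pods -A --no-headers | not_ready_pods shows what is still starting, and wait_for_pods blocks until the list is empty.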

Once everything is running, check the nodes again.

At this point the Kubernetes cluster is up and running.

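5. What If the Token Expires?

The token expires after 24 hours. If the worker nodes have not joined by then, or you want to add a new worker node later, run the following on the master node to generate a new token and print the full join command:

kubeadm token create --print-join-command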
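The printed join command carries two values: the token and the CA certificate hash. If they need to be stored or passed to another script, they can be extracted from the command text. A small sketch, where join_field is a hypothetical helper name:

```shell
# join_field FLAG: read a `kubeadm join ...` command on stdin (line
# continuations allowed) and print the value that follows FLAG.
join_field() {
  tr -s ' \\\n' ' ' |
    awk -v flag="$1" '{ for (i = 1; i < NF; i++) if ($i == flag) print $(i + 1) }'
}
```

For example, kubeadm token create --print-join-command | join_field --token prints only the new token. Existing tokens and their expiry times can be listed with kubeadm token list.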

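6. Install the Dashboard Web UI

6.1 Installation

kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.3.1/aio/deploy/recommended.yaml

If the network is unreliable and the command fails, copy the manifest below into a YAML file and apply that file instead: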

# Copyright 2017 The Kubernetes Authors.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#     http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.

apiVersion: v1
kind: Namespace
metadata:
  name: kubernetes-dashboard

---

apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard

---

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 443
      targetPort: 8443
  selector:
    k8s-app: kubernetes-dashboard

---

apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-certs
  namespace: kubernetes-dashboard
type: Opaque

---

apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-csrf
  namespace: kubernetes-dashboard
type: Opaque
data:
  csrf: ""

---

apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-key-holder
  namespace: kubernetes-dashboard
type: Opaque

---

kind: ConfigMap
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-settings
  namespace: kubernetes-dashboard

---

kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
rules:
  # Allow Dashboard to get, update and delete Dashboard exclusive secrets.
  - apiGroups: [""]
    resources: ["secrets"]
    resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs", "kubernetes-dashboard-csrf"]
    verbs: ["get", "update", "delete"]
    # Allow Dashboard to get and update 'kubernetes-dashboard-settings' config map.
  - apiGroups: [""]
    resources: ["configmaps"]
    resourceNames: ["kubernetes-dashboard-settings"]
    verbs: ["get", "update"]
    # Allow Dashboard to get metrics.
  - apiGroups: [""]
    resources: ["services"]
    resourceNames: ["heapster", "dashboard-metrics-scraper"]
    verbs: ["proxy"]
  - apiGroups: [""]
    resources: ["services/proxy"]
    resourceNames: ["heapster", "http:heapster:", "https:heapster:", "dashboard-metrics-scraper", "http:dashboard-metrics-scraper"]
    verbs: ["get"]

---

kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
rules:
  # Allow Metrics Scraper to get metrics from the Metrics server
  - apiGroups: ["metrics.k8s.io"]
    resources: ["pods", "nodes"]
    verbs: ["get", "list", "watch"]

---

apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kubernetes-dashboard

---

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kubernetes-dashboard

---

kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: kubernetes-dashboard
  template:
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
    spec:
      containers:
        - name: kubernetes-dashboard
          image: kubernetesui/dashboard:v2.3.1
          imagePullPolicy: Always
          ports:
            - containerPort: 8443
              protocol: TCP
          args:
            - --auto-generate-certificates
            - --namespace=kubernetes-dashboard
            # Uncomment the following line to manually specify Kubernetes API server Host
            # If not specified, Dashboard will attempt to auto discover the API server and connect
            # to it. Uncomment only if the default does not work.
            # - --apiserver-host=http://my-address:port
          volumeMounts:
            - name: kubernetes-dashboard-certs
              mountPath: /certs
              # Create on-disk volume to store exec logs
            - mountPath: /tmp
              name: tmp-volume
          livenessProbe:
            httpGet:
              scheme: HTTPS
              path: /
              port: 8443
            initialDelaySeconds: 30
            timeoutSeconds: 30
          securityContext:
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsUser: 1001
            runAsGroup: 2001
      volumes:
        - name: kubernetes-dashboard-certs
          secret:
            secretName: kubernetes-dashboard-certs
        - name: tmp-volume
          emptyDir: {}
      serviceAccountName: kubernetes-dashboard
      nodeSelector:
        "kubernetes.io/os": linux
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule

---

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 8000
      targetPort: 8000
  selector:
    k8s-app: dashboard-metrics-scraper

---

kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: dashboard-metrics-scraper
  template:
    metadata:
      labels:
        k8s-app: dashboard-metrics-scraper
      annotations:
        seccomp.security.alpha.kubernetes.io/pod: 'runtime/default'
    spec:
      containers:
        - name: dashboard-metrics-scraper
          image: kubernetesui/metrics-scraper:v1.0.6
          ports:
            - containerPort: 8000
              protocol: TCP
          livenessProbe:
            httpGet:
              scheme: HTTP
              path: /
              port: 8000
            initialDelaySeconds: 30
            timeoutSeconds: 30
          volumeMounts:
            - mountPath: /tmp
              name: tmp-volume
          securityContext:
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsUser: 1001
            runAsGroup: 2001
      serviceAccountName: kubernetes-dashboard
      nodeSelector:
        "kubernetes.io/os": linux
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
      volumes:
        - name: tmp-volume
          emptyDir: {}

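6.2 Expose the Port

kubectl edit svc kubernetes-dashboard -n kubernetes-dashboard

Change type: ClusterIP to type: NodePort.

This is similar to mapping a container's internal port to a port on the Linux host in Docker.

Find the port that was assigned:

kubectl get svc -A | grep kubernetes-dashboard

## On a cloud server, find the port and allow it in the security group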
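In the kubectl get svc output, a NodePort service shows its ports as servicePort:nodePort/protocol, for example 443:31820/TCP. The number after the colon is the one to open in a browser. The helper below is a sketch with an invented name (node_port) that pulls that number out of the listing. The commented kubectl patch line is a non-interactive alternative to kubectl edit.

```shell
# Non-interactive alternative to `kubectl edit` (needs a working kubectl):
#   kubectl -n kubernetes-dashboard patch svc kubernetes-dashboard \
#     -p '{"spec":{"type":"NodePort"}}'

# node_port: read `kubectl get svc` lines on stdin and print the node port of
# the first PORT(S) entry shaped like "443:31820/TCP".
node_port() {
  sed -n -E 's#.*[[:space:]][0-9]+:([0-9]+)/(TCP|UDP).*#\1#p' | head -n 1
}
```

Usage on the master would be kubectl get svc -A | grep kubernetes-dashboard | node_port.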

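6.3 Access the Web UI

Open https://<IP of any cluster node>:<port>, for example https://192.168.37.110:31820/

I found that the Dashboard deployed on the virtual machines could not be reached, whereas on a cloud server there was no problem.

I later tried Google Chrome and Edge, and neither worked; Huawei Browser and Firefox could open the page.

I will look into how to fix this when I have time.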

6.4 Create an Access Account

Create a manifest named dash-usr.yaml with the content below, then apply it with kubectl apply -f dash-usr.yaml:

# Create the access account; prepare the YAML file with: vi dash-usr.yaml
apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin-user
  namespace: kubernetes-dashboard
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin-user
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: admin-user
  namespace: kubernetes-dashboard

6.5 Generate a Token

The Dashboard uses token-based login. Retrieve the access token with the following command:

# Get the access token
kubectl -n kubernetes-dashboard get secret $(kubectl -n kubernetes-dashboard get sa/admin-user -o jsonpath="{.secrets[0].name}") -o go-template="{{.data.token | base64decode}}"
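The one-liner above does two things: it looks up the name of the secret attached to the admin-user service account, then base64-decodes that secret's data.token field. Splitting it into steps can make troubleshooting easier. This is a sketch under the same assumptions as the article (Kubernetes 1.20.9, where the service account lists its token secret); the function names are invented.

```shell
# decode_field: base64-decode one value read from stdin, which is what the
# go-template function base64decode does for .data.token.
decode_field() {
  base64 -d
}

# dashboard_token: the same lookup as the one-liner, in two visible steps.
# This needs a working kubectl, so it is only defined here, not called.
dashboard_token() {
  secret=$(kubectl -n kubernetes-dashboard get sa/admin-user -o jsonpath='{.secrets[0].name}')
  kubectl -n kubernetes-dashboard get secret "$secret" -o jsonpath='{.data.token}' | decode_field
}
```

If dashboard_token prints nothing, checking the value of secret first shows whether the service account was created at all.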

6.6 Log In

Paste the token from the previous step into the Dashboard login page to sign in.

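7. Closing Notes

Note that all of these kubectl commands must be run on the master node, because that is where the kubeconfig was set up. Don't mix that up!

Also, after a reboot the cluster normally starts again on its own. Some applications just take a while, so wait until they are all Running.

If the cluster does not come back after a reboot, check whether swap is still on, whether the firewall is enabled, and whether Docker is running on every node.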
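Those three checks can be bundled into one small script to run on each node after a reboot. This is only a sketch: the function names are made up, and the unit name firewalld is an assumption based on CentOS 7 defaults.

```shell
# swap_devices [FILE]: list active swap devices. /proc/swaps has one header
# line followed by one line per device, so no output means swap is off.
swap_devices() {
  awk 'NR > 1 { print $1 }' "${1:-/proc/swaps}"
}

# service_state NAME: print systemd's state for a unit (active, inactive, ...).
service_state() {
  systemctl is-active "$1" 2>/dev/null || true
}

# node_checklist: print the state of swap, the firewall, Docker, and kubelet.
node_checklist() {
  if [ -z "$(swap_devices)" ]; then echo "swap: off"; else echo "swap: STILL ON"; fi
  echo "firewalld: $(service_state firewalld)"
  echo "docker: $(service_state docker)"
  echo "kubelet: $(service_state kubelet)"
}
```

On a healthy node, node_checklist should report swap off, firewalld inactive, and both docker and kubelet active.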

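Don't panic when something goes wrong. Work through the possible causes one by one; that is a problem-solving skill in itself. I ran into plenty of pitfalls while building this cluster, and that is normal. As long as you follow the steps above closely, I believe you will be fine.

That's it. See you in the next part!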
