Building an Efficient Log Analysis Pipeline: From Filebeat Collection to Kibana Visualization, a Full-Chain Redis + Logstash + Elasticsearch Solution

团儿. 2024-08-19 13:37:01

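About the author: I'm 团团儿, a creator focused on cloud computing. Thanks for following! Motto: building dreams in the cloud with data as our wings, exploring endless possibilities and leading a new era of cloud computing. Homepage: 团儿.-CSDN博客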


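Goal: build a Filebeat + Redis + Logstash + Elasticsearch + Kibana pipeline and browse the Nginx logs in Kibana.

Data flow: Nginx logs → Filebeat → Redis → Logstash → Elasticsearch → Kibana

Topology:

ES (Elasticsearch + Kibana) 192.168.8.5

Nginx (Nginx + Filebeat) 192.168.8.6

Logstash (Redis + Logstash) 192.168.8.7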


ES host: 192.168.8.5

1. Install Elasticsearch

Prerequisite: JDK 1.8.0

Copy elasticsearch-6.6.0.rpm to the VM, then install it:

rpm -ivh elasticsearch-6.6.0.rpm

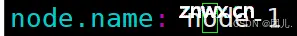

2. Edit the configuration file:

vim /etc/elasticsearch/elasticsearch.yml

node.name: node-1

path.data: /data/elasticsearch

path.logs: /var/log/elasticsearch

bootstrap.memory_lock: true

network.host: 192.168.8.5,127.0.0.1

http.port: 9200

3. Create the data directory and give it to the elasticsearch user

mkdir -p /data/elasticsearch

chown -R elasticsearch:elasticsearch /data/elasticsearch/

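4. Set the JVM heap size (this is the memory that bootstrap.memory_lock locks):

vim /etc/elasticsearch/jvm.options

jvm.options only treats lines that start with # as comments, so the comments go on their own lines:

# minimum heap size
-Xms1g
# maximum heap size; keep it equal to -Xms. Elastic recommends at most half of physical RAM, and staying below about 32 GB
-Xmx1g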
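The heap sizing rule from the jvm.options step (half of physical RAM, with an upper limit around 32 GB) can be written as a small calculation. This is a sketch under assumptions, not an Elastic tool: it reads MemTotal from /proc/meminfo, halves it, and caps the result at 31 GB, a safety margin I chose below the roughly 32 GB limit. The function names are hypothetical.

```python
from typing import List


def mem_total_mb(meminfo_text: str) -> int:
    """Return MemTotal from /proc/meminfo content, converted from kB to MB."""
    for line in meminfo_text.splitlines():
        if line.startswith("MemTotal:"):
            return int(line.split()[1]) // 1024  # the value is reported in kB
    raise ValueError("MemTotal not found")


def recommended_heap_mb(total_ram_mb: int, cap_mb: int = 31 * 1024) -> int:
    """Half of physical RAM, but never above cap_mb (assumed 31 GB)."""
    return min(total_ram_mb // 2, cap_mb)


def jvm_heap_flags(total_ram_mb: int) -> List[str]:
    """-Xms and -Xmx set to the same value, as in jvm.options."""
    heap = recommended_heap_mb(total_ram_mb)
    return [f"-Xms{heap}m", f"-Xmx{heap}m"]
```

On the ES host, `print("\n".join(jvm_heap_flags(mem_total_mb(open("/proc/meminfo").read()))))` prints the two lines for jvm.options. By this rule, the 1g heap used in this lab corresponds to a VM with about 2 GB of RAM.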

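5. After enabling memory locking, Elasticsearch fails to restart, because systemd's default memlock limit does not let the process lock its heap. Fix it as follows:

systemctl edit elasticsearch

Add:

[Service]
LimitMEMLOCK=infinity

Save and exit (F2 if the editor is nano).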

systemctl daemon-reload

systemctl restart elasticsearch

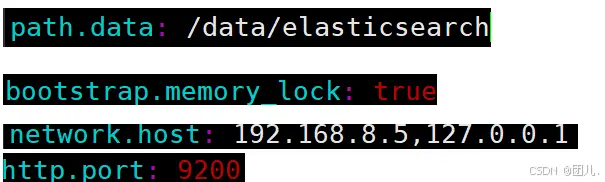

6. Check the result:

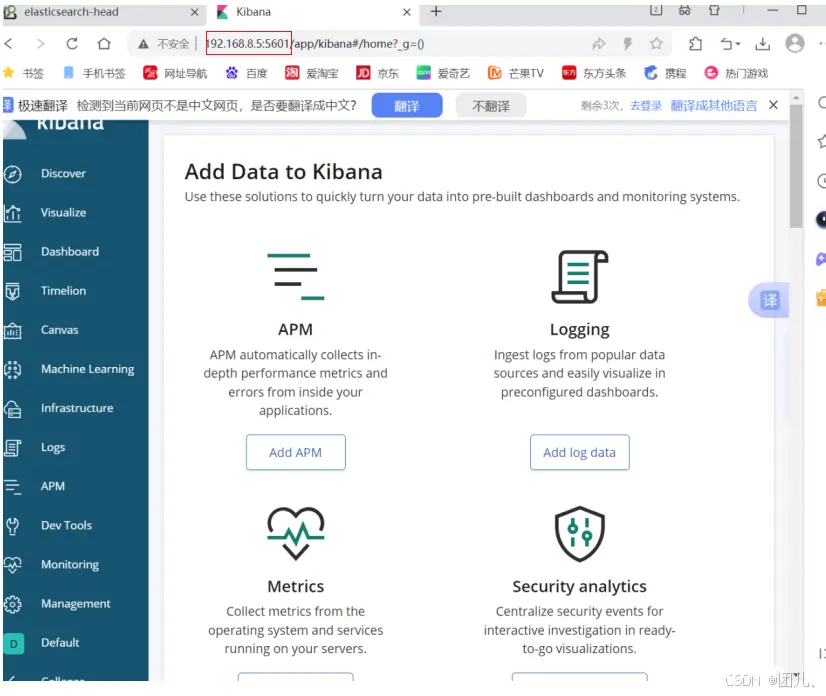
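Since bootstrap.memory_lock is enabled in elasticsearch.yml, it is worth confirming that the lock actually took effect. Elasticsearch reports this per node as process.mlockall in the nodes info API (`GET /_nodes?filter_path=**.mlockall`). The sketch below assumes Python 3 with only the standard library and the ES address used in this lab; `all_nodes_locked` and `fetch_nodes_info` are hypothetical helper names.

```python
import json
import urllib.request

ES_URL = "http://192.168.8.5:9200"  # ES host in this lab


def all_nodes_locked(nodes_info: dict) -> bool:
    """True if every node in a _nodes response reports process.mlockall == true."""
    nodes = nodes_info.get("nodes", {})
    return bool(nodes) and all(
        node.get("process", {}).get("mlockall") is True for node in nodes.values()
    )


def fetch_nodes_info(base_url: str = ES_URL) -> dict:
    """Query the nodes info API, keeping only the mlockall fields."""
    url = base_url + "/_nodes?filter_path=**.mlockall"
    with urllib.request.urlopen(url, timeout=5) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

`print(all_nodes_locked(fetch_nodes_info()))` should print True once the LimitMEMLOCK override is in place; False means the heap is not locked.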

7. Install Kibana

rpm -ivh kibana-6.6.0-x86_64.rpm

8. Edit the configuration file

vim /etc/kibana/kibana.yml

Change:

server.port: 5601
server.host: "192.168.8.5"
server.name: "db01"    # hostname of this machine
elasticsearch.hosts: ["http://192.168.8.5:9200"]    # ES server that Kibana reads the log data from

Save and exit.

9. Start Kibana

systemctl start kibana

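10. Configure a yum repository and install httpd-tools (it provides the ab tool)

yum -y install httpd-tools

11. Send test traffic to Nginx with the ab load-testing tool (1000 concurrent clients, 10000 requests in total)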

ab -c 1000 -n 10000 http://192.168.8.6/

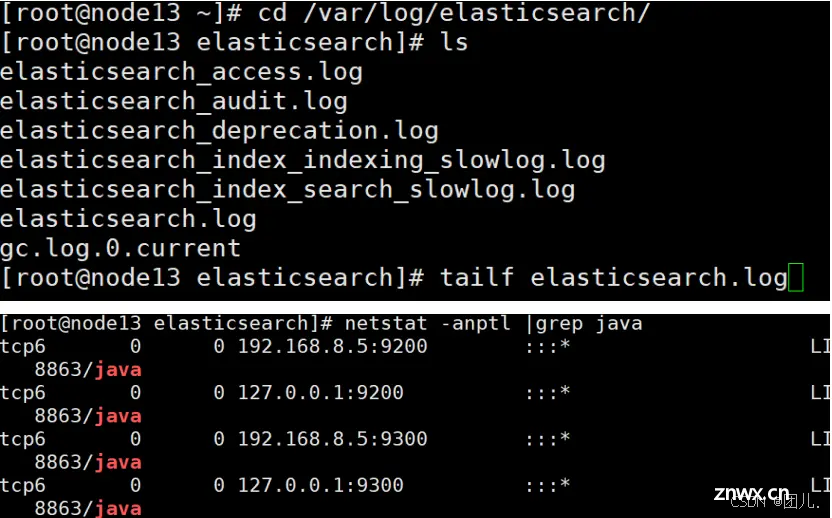

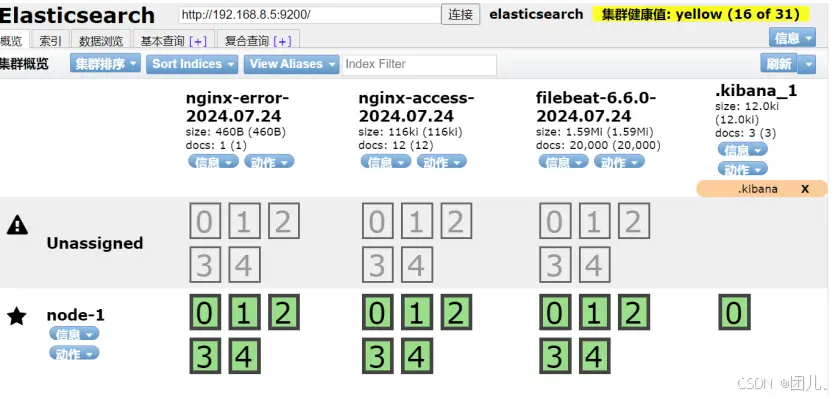

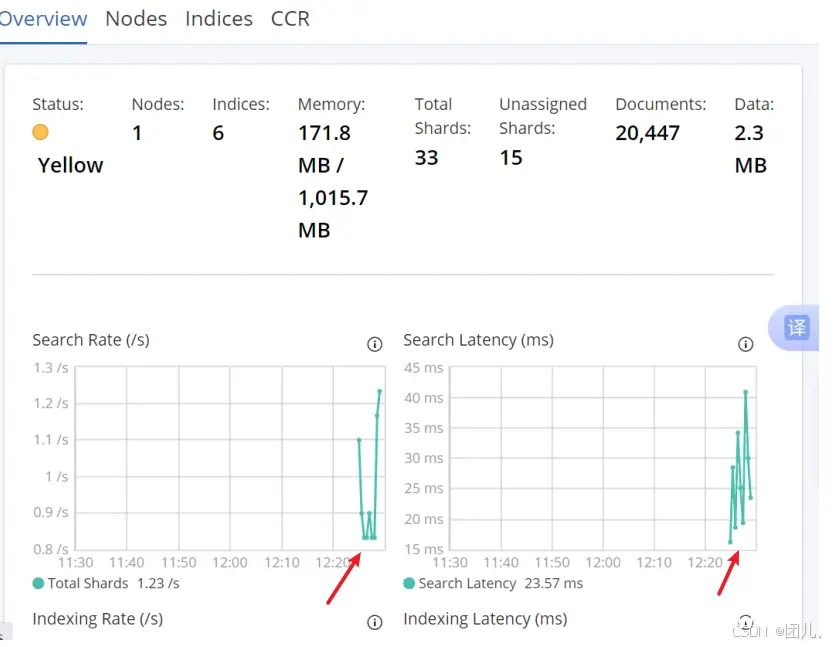

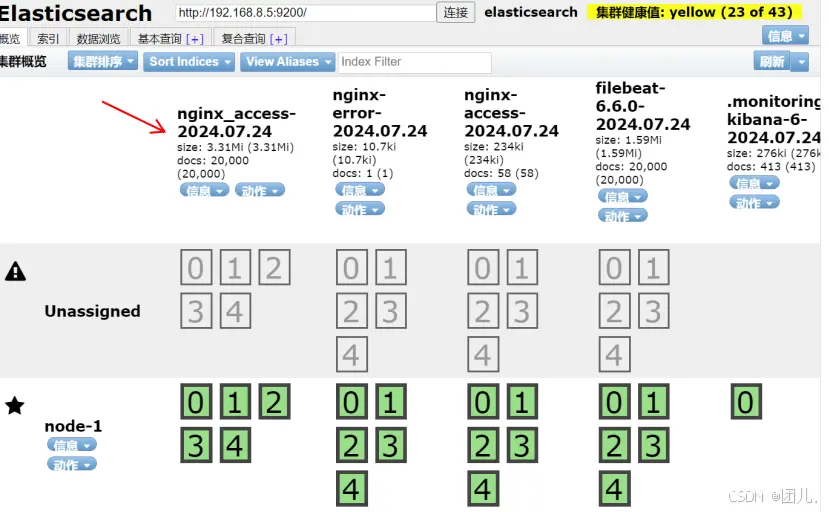

12. Check in the browser that the filebeat index and its data have arrived in ES
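The same check can be scripted against the `_cat/indices` API, which returns one JSON object per index when called with `format=json`. A minimal sketch, assuming the ES address of this lab and that the index name starts with `filebeat-` (Filebeat 6.x names it filebeat-&lt;version&gt;-&lt;date&gt; by default); the helper names are hypothetical.

```python
import json
import urllib.request
from typing import Dict, List

ES_URL = "http://192.168.8.5:9200"  # ES host in this lab


def fetch_cat_indices(base_url: str = ES_URL) -> List[dict]:
    """GET /_cat/indices with format=json, which returns one object per index."""
    with urllib.request.urlopen(base_url + "/_cat/indices?format=json", timeout=5) as resp:
        return json.loads(resp.read().decode("utf-8"))


def doc_counts(rows: List[dict], prefix: str = "filebeat-") -> Dict[str, int]:
    """Map index name -> document count for indices whose name starts with prefix."""
    return {
        row["index"]: int(row.get("docs.count") or 0)  # a missing/null count is treated as 0
        for row in rows
        if row["index"].startswith(prefix)
    }
```

After the ab run, `doc_counts(fetch_cat_indices())` should show a filebeat index whose count grows with each test.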

13. Add the index in Kibana:

Management → Index Patterns → Create index pattern

Discover → time picker at the top right → Today

Nginx host: 192.168.8.6

1. Install Filebeat

rpm -ivh filebeat-6.6.0-x86_64.rpm

2. Edit the configuration file

vim /etc/filebeat/filebeat.yml

Change:

filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/log/nginx/access.log
output.elasticsearch:
  hosts: ["192.168.8.5:9200"]

Save and exit.

3. Start Filebeat

systemctl start filebeat

4. Install Nginx and start the service

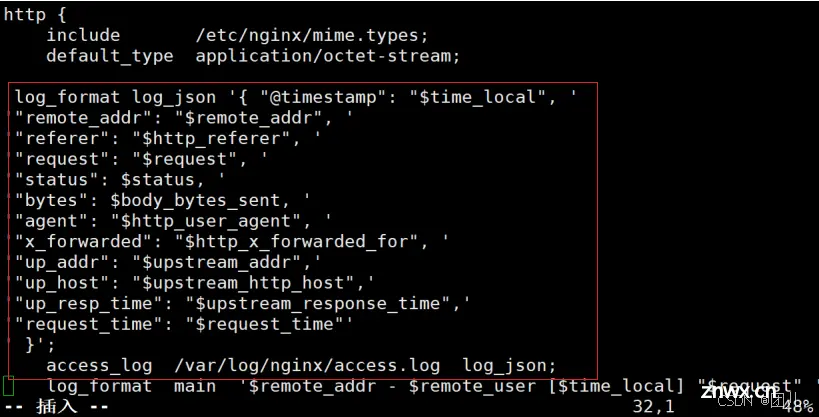

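5. Switch the Nginx log format to JSON

vim /etc/nginx/nginx.conf

Add the following inside http {}, replacing the existing access_log line so that only JSON lines are written to access.log. escape=json (Nginx 1.11.8 or later) escapes quotes in values such as the user agent, and $time_iso8601 produces an RFC 3339 timestamp that Filebeat can use to overwrite @timestamp, which the $time_local format does not allow:

log_format log_json escape=json '{ "@timestamp": "$time_iso8601", '
                    '"remote_addr": "$remote_addr", '
                    '"referer": "$http_referer", '
                    '"request": "$request", '
                    '"status": $status, '
                    '"bytes": $body_bytes_sent, '
                    '"agent": "$http_user_agent", '
                    '"x_forwarded": "$http_x_forwarded_for", '
                    '"up_addr": "$upstream_addr", '
                    '"up_host": "$upstream_http_host", '
                    '"up_resp_time": "$upstream_response_time", '
                    '"request_time": "$request_time"'
                    ' }';
access_log /var/log/nginx/access.log log_json;

Save and exit, check the syntax, and restart Nginx:

nginx -t && systemctl restart nginx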

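6. Clear the old, non-JSON log lines (delete them in vim, or run truncate -s 0 /var/log/nginx/access.log)

Run ab again to generate JSON-formatted log entries.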
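Before pointing Filebeat's JSON decoding at the file, a quick check that every line really is a JSON object with the expected keys can save debugging later. A minimal sketch: the key set is copied from the log_json format, while `check_line` and `bad_lines` are hypothetical helpers, not part of any ELK tool.

```python
import json
from typing import List, Tuple

# Keys defined by the log_json format in nginx.conf
EXPECTED_KEYS = {
    "@timestamp", "remote_addr", "referer", "request", "status", "bytes",
    "agent", "x_forwarded", "up_addr", "up_host", "up_resp_time", "request_time",
}


def check_line(line: str) -> dict:
    """Parse one access.log line; raise ValueError if it is not the expected JSON object."""
    record = json.loads(line)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(record, dict):
        raise ValueError("line is JSON but not an object")
    missing = EXPECTED_KEYS - set(record)
    if missing:
        raise ValueError("missing keys: " + ", ".join(sorted(missing)))
    return record


def bad_lines(path: str = "/var/log/nginx/access.log") -> List[Tuple[int, str]]:
    """Return (line number, error message) for every line that fails check_line."""
    problems = []
    with open(path, encoding="utf-8", errors="replace") as f:
        for number, line in enumerate(f, start=1):
            try:
                check_line(line)
            except ValueError as exc:
                problems.append((number, str(exc)))
    return problems
```

An empty list from `bad_lines()` means no leftover lines in the old format remain in the file.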

7. Ship access.log and error.log separately

vim /etc/filebeat/filebeat.yml

Change it to:

filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/log/nginx/access.log
  json.keys_under_root: true
  json.overwrite_keys: true
  tags: ["access"]
- type: log
  enabled: true
  paths:
    - /var/log/nginx/error.log
  tags: ["error"]
output.elasticsearch:
  hosts: ["192.168.8.5:9200"]
  indices:
    - index: "nginx-access-%{+yyyy.MM.dd}"
      when.contains:
        tags: "access"
    - index: "nginx-error-%{+yyyy.MM.dd}"
      when.contains:
        tags: "error"
setup.template.name: "nginx"
setup.template.pattern: "nginx-*"
setup.template.enabled: false
setup.template.overwrite: true

Save and exit.

Restart the service: systemctl restart filebeat
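The indices rules pick an index per event: the first rule whose condition matches wins, and if none match, Filebeat falls back to its normal index setting. The sketch below models that routing to show which daily index an event lands in. Assumptions: when.contains is modelled as a substring match on any tag, and the %{+yyyy.MM.dd} date is taken from the event timestamp converted to UTC (my understanding of how Beats formats it), which matters in a UTC+8 lab shortly after midnight. `pick_index` is a hypothetical name.

```python
from datetime import datetime, timezone
from typing import Iterable, Optional

# Mirrors output.elasticsearch.indices in filebeat.yml; the first matching rule wins
INDEX_RULES = [
    ("access", "nginx-access-{date}"),
    ("error", "nginx-error-{date}"),
]


def pick_index(tags: Iterable[str], timestamp: datetime) -> Optional[str]:
    """Return the index name for an event, or None if no rule matches.

    when.contains is modelled as a substring match on any tag; the date part
    of %{+yyyy.MM.dd} comes from the event timestamp converted to UTC.
    """
    tags = list(tags)
    date = timestamp.astimezone(timezone.utc).strftime("%Y.%m.%d")
    for needle, template in INDEX_RULES:
        if any(needle in tag for tag in tags):
            return template.format(date=date)
    return None  # Filebeat would fall back to its default index setting here
```

For example, an error event logged at 02:00 local time (UTC+8) on 19 August goes to nginx-error-2024.08.18, because in UTC it is still the 18th.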

Log in to Kibana:

1. Open Kibana in a browser (port 5601)

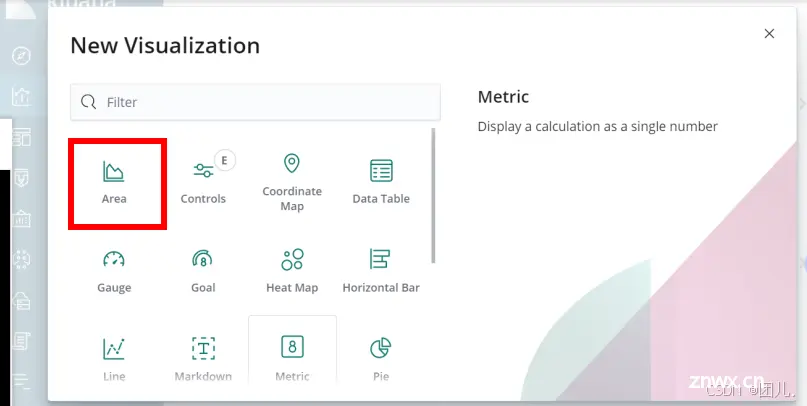

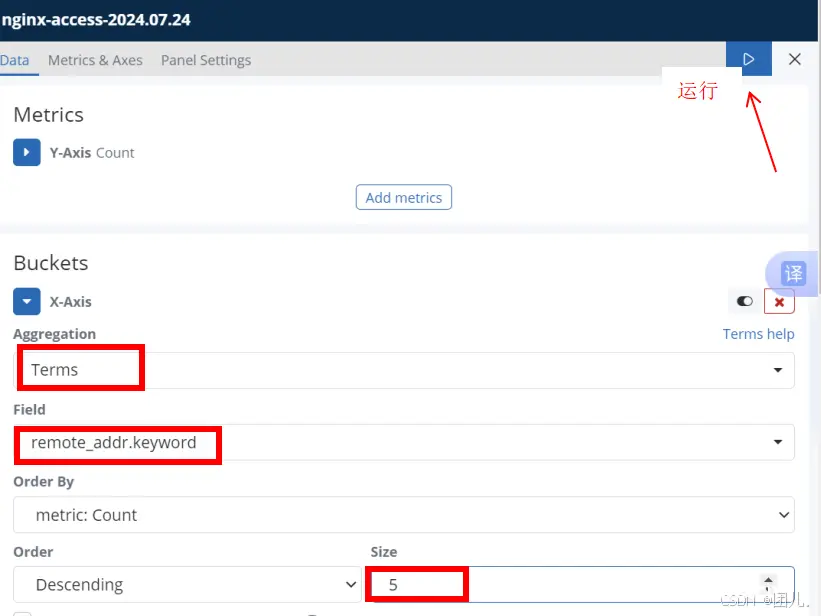

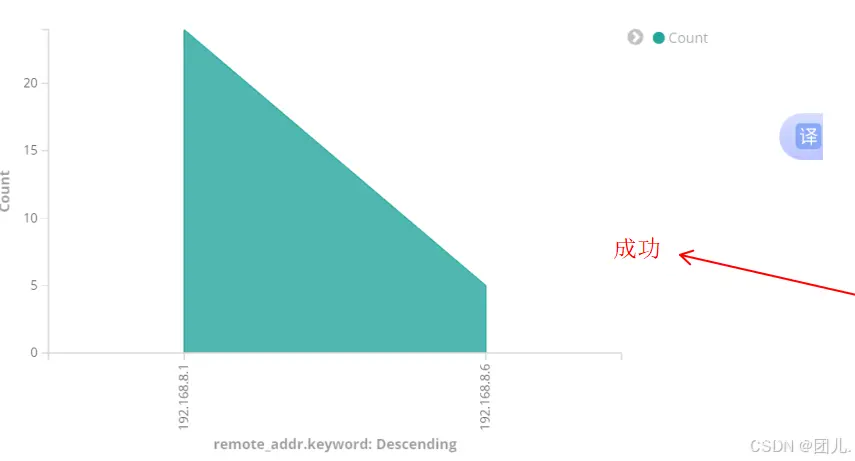

2. Log in → Visualize in the left panel → click "+" → choose a chart type → choose the index → Buckets → X-Axis → Aggregation: Terms → Field: remote_addr.keyword → Size: 5 → click the triangle (play) button at the top to apply

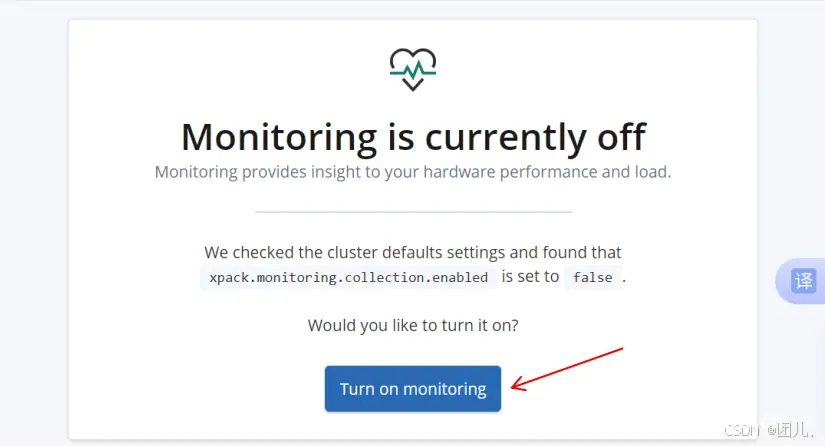
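The chart built in step 2 is a Terms aggregation: it counts documents per distinct remote_addr.keyword value (the keyword sub-field that ES 6 dynamic mapping adds to string fields) and keeps the 5 largest buckets. The sketch below shows the equivalent counting locally and the request body such a chart corresponds to. The aggregation name `top_ips` and the helper names are hypothetical; the body would be sent to an endpoint such as `/nginx-access-*/_search`.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def top_terms(values: Iterable[str], size: int = 5) -> List[Tuple[str, int]]:
    """Count each distinct value and return the `size` most frequent, like Terms buckets."""
    return Counter(values).most_common(size)


def terms_agg_body(field: str = "remote_addr.keyword", size: int = 5) -> dict:
    """Search body with the same Terms aggregation the Kibana chart uses."""
    return {
        "size": 0,  # return only the buckets, no individual hits
        "aggs": {"top_ips": {"terms": {"field": field, "size": size}}},
    }
```

On a single-node cluster like this one the counts match exactly; on multi-shard indices ES terms counts can be approximate.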

Kibana monitoring (X-Pack):

Log in → Monitoring in the left panel → turn on monitoring

8. Once Redis is set up (see the Logstash host section below), change the Filebeat output to Redis. Filebeat allows only one enabled output, so output.elasticsearch is removed:

vim /etc/filebeat/filebeat.yml

filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/log/nginx/access.log
  json.keys_under_root: true
  json.overwrite_keys: true
  tags: ["access"]
- type: log
  enabled: true
  paths:
    - /var/log/nginx/error.log
  tags: ["error"]
setup.template.settings:
  index.number_of_shards: 3
setup.kibana:
output.redis:
  hosts: ["192.168.8.7"]
  key: "filebeat"
  db: 0
  timeout: 5

Save and exit.

Restart the service: systemctl restart filebeat

Logstash host: 192.168.8.7

1. Install and start Redis

Prepare the installation and data directories:

mkdir -p /data/soft

mkdir -p /opt/redis_cluster/redis_6379/{conf,logs,pid}

Download the Redis source package:

cd /data/soft

wget http://download.redis.io/releases/redis-5.0.7.tar.gz

2. Extract Redis to /opt/redis_cluster/ and create a symlink

tar xf redis-5.0.7.tar.gz -C /opt/redis_cluster/

ln -s /opt/redis_cluster/redis-5.0.7 /opt/redis_cluster/redis

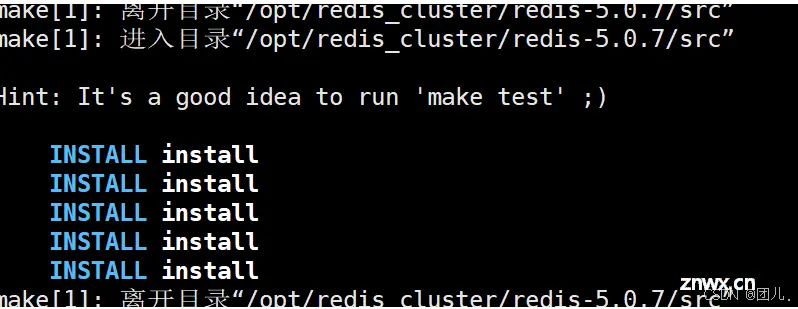

3. Build and install Redis (gcc and make must be installed)

cd /opt/redis_cluster/redis

make && make install

4. Write the configuration file

vim /opt/redis_cluster/redis_6379/conf/6379.conf

Add:

bind 127.0.0.1 192.168.8.7
port 6379
daemonize yes
pidfile /opt/redis_cluster/redis_6379/pid/redis_6379.pid
logfile /opt/redis_cluster/redis_6379/logs/redis_6379.log
databases 16
dbfilename redis.rdb
dir /opt/redis_cluster/redis_6379

Save and exit.

5. Start this Redis instance

redis-server /opt/redis_cluster/redis_6379/conf/6379.conf

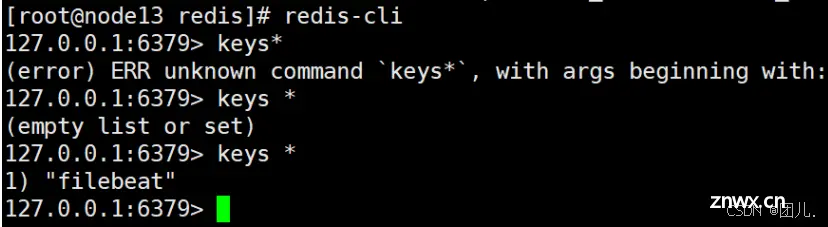

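6. After switching the Filebeat output to Redis (Nginx host, step 8), log in to Redis

Send test requests from es01 (for example with ab), then inspect the queue in Redis:

redis-cli                  # log in
keys *                     # list all keys
type filebeat              # filebeat is the key name
LLEN filebeat              # length of the list
LRANGE filebeat 0 -1       # all entries in the list

Once Logstash is running it consumes this list, so LLEN drops back toward 0.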
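Each entry LRANGE returns is one Filebeat event serialized as JSON, so the queue can also be summarized by tag to confirm that both access and error events are arriving. A minimal sketch; `count_by_tag` is a hypothetical helper, and fetching the entries with the third-party redis-py client, e.g. `redis.Redis(host="192.168.8.7", port=6379, db=0).lrange("filebeat", 0, -1)`, is an assumption about your tooling rather than part of this setup.

```python
import json
from collections import Counter
from typing import Iterable, Union


def count_by_tag(raw_items: Iterable[Union[bytes, str]]) -> Counter:
    """Count queued events per tag; items are the JSON strings LRANGE returns."""
    counts: Counter = Counter()
    for raw in raw_items:
        event = json.loads(raw)  # json.loads accepts both bytes and str
        for tag in event.get("tags", []):
            counts[tag] += 1
    return counts
```

A result like `{"access": ..., "error": ...}` shows both inputs feeding the list; events without tags are simply not counted.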

7. Install Logstash (the package was placed in /data/soft in advance)

cd /data/soft/

rpm -ivh logstash-6.6.0.rpm

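8. Edit the Logstash configuration to send access and error logs to separate indices

Note: mutate and grok are filter plugins. grok parses fields with regular-expression patterns (match), while mutate modifies fields, for example converting data types (convert). Because the access log already arrives as JSON, only mutate is needed here.

vim /etc/logstash/conf.d/redis.conf

Add:

input {
  redis {
    host => "192.168.8.7"
    port => "6379"
    db => "0"
    key => "filebeat"
    data_type => "list"
  }
}

filter {
  mutate {
    # field names must match the keys in the Nginx log_json format
    convert => {
      "up_resp_time" => "float"
      "request_time" => "float"
    }
  }
}

output {
  stdout {}
  if "access" in [tags] {
    elasticsearch {
      hosts => ["http://192.168.8.5:9200"]
      index => "nginx_access-%{+YYYY.MM.dd}"
      manage_template => false
    }
  }
  if "error" in [tags] {
    elasticsearch {
      hosts => ["http://192.168.8.5:9200"]
      index => "nginx_error-%{+YYYY.MM.dd}"
      manage_template => false
    }
  }
}

Save and exit.

Start Logstash with this configuration (append --config.test_and_exit to only check the syntax):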

/usr/share/logstash/bin/logstash -f /etc/logstash/conf.d/redis.conf
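To reason about what this pipeline does to one event, the sketch below models the filter and output sections: numeric strings in the two time fields become floats, and each independent `if` adds a target index (an event tagged both access and error would be written to both). It also checks index names against a Kibana-style pattern, because these indices use an underscore (nginx_access-...), so a pattern such as nginx-* created for the Filebeat-era nginx-access-* indices will not match them. This is a rough model, not Logstash itself: leaving non-numeric values such as "-" unchanged is this sketch's choice and Logstash's own handling may differ; fnmatch only approximates Kibana's wildcard matching; all function names are hypothetical.

```python
import fnmatch
from datetime import datetime, timezone
from typing import List


def convert_floats(event: dict, fields=("up_resp_time", "request_time")) -> dict:
    """Rough stand-in for mutate/convert: numeric strings in `fields` become floats."""
    out = dict(event)
    for name in fields:
        value = out.get(name)
        if isinstance(value, str):
            try:
                out[name] = float(value)
            except ValueError:
                pass  # non-numeric values (e.g. "-") are left unchanged in this sketch
    return out


def logstash_indices(event: dict, timestamp: datetime) -> List[str]:
    """Indices the output section writes to; the two ifs are independent, not if/else."""
    date = timestamp.astimezone(timezone.utc).strftime("%Y.%m.%d")
    tags = event.get("tags", [])
    targets = []
    if "access" in tags:  # like `"access" in [tags]`: exact membership in the array
        targets.append(f"nginx_access-{date}")
    if "error" in tags:
        targets.append(f"nginx_error-{date}")
    return targets


def pattern_matches(pattern: str, index: str) -> bool:
    """Approximate a Kibana index pattern, where * matches any run of characters."""
    return fnmatch.fnmatchcase(index, pattern)
```

In practice this means creating a new index pattern such as nginx_access-* in Kibana for the data that now flows through Logstash.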
