TensorRT Installation and Usage (Python Version)

stivory 2024-06-23 15:05:11

Official tutorials

TensorRT installation: Installation Guide :: NVIDIA Deep Learning TensorRT Documentation

Video tutorial: TensorRT 教程 | 基于 8.6.1 版本 | 第一部分 (TensorRT Tutorial, based on version 8.6.1, Part 1), on bilibili

Code tutorial: trt-samples-for-hackathon-cn/cookbook at master · NVIDIA/trt-samples-for-hackathon-cn (github.com)

Installing TensorRT

Official guide:

Installation Guide :: NVIDIA Deep Learning TensorRT Documentation

TensorRT can be installed in three main ways:

1. With pip install;

2. From a downloaded tar, zip, or deb package;

3. With a Docker container: TensorRT Container Release Notes

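Windows

First, pick a TensorRT version that matches the NVIDIA driver, CUDA version, and cuDNN version on your machine.

My setup: CUDA 11.4; cuDNN 11.4

I recommend installing TensorRT from the zip package. The tutorial I followed:

windows安装tensorrt (Installing TensorRT on Windows) - Zhihu (zhihu.com)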

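Ubuntu

First, pick a TensorRT version that matches the NVIDIA driver, CUDA version, and cuDNN version on your machine.

My setup: CUDA 11.7; cuDNN 8.9.0

1. Install with pip:

    pip install tensorrt==8.6.1

This failed on my machine.

2. Install from the deb package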

    os="ubuntuxx04"
    tag="8.x.x-cuda-x.x"
    sudo dpkg -i nv-tensorrt-local-repo-${os}-${tag}_1.0-1_amd64.deb
    sudo cp /var/nv-tensorrt-local-repo-${os}-${tag}/*-keyring.gpg /usr/share/keyrings/
    sudo apt-get update
    sudo apt-get install tensorrt

This did not work on my machine either.
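The `os` placeholder in the deb commands above has to be filled in by hand. As a minimal sketch, assuming the `ubuntuxx04` pattern is simply `ubuntu` followed by the release number without the dot, the value can be derived from the contents of `/etc/os-release`. The helper names `ubuntu_repo_os` and `deb_filename` are hypothetical, and the `tag` value stays the placeholder from the commands above.

```python
def ubuntu_repo_os(os_release_text: str) -> str:
    """Build an 'ubuntuXX04'-style string from the text of /etc/os-release."""
    fields = {}
    for line in os_release_text.splitlines():
        key, sep, value = line.partition("=")
        if sep:
            fields[key.strip()] = value.strip().strip('"')
    if fields.get("ID") != "ubuntu":
        raise ValueError("not an Ubuntu system")
    # VERSION_ID looks like "22.04"; the assumed repo naming drops the dot.
    return "ubuntu" + fields["VERSION_ID"].replace(".", "")


def deb_filename(os_name: str, tag: str) -> str:
    """Assemble the local-repo file name used in the dpkg command."""
    return f"nv-tensorrt-local-repo-{os_name}-{tag}_1.0-1_amd64.deb"


# Sample text standing in for the real file; on a real machine use
# open("/etc/os-release").read() instead.
sample = 'NAME="Ubuntu"\nVERSION_ID="22.04"\nID=ubuntu\n'
print(deb_filename(ubuntu_repo_os(sample), "8.x.x-cuda-x.x"))
```

The printed name should be compared against the file actually downloaded from NVIDIA before running `dpkg`.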

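3. Install from the tar package (recommended)

I recommend this method; it has the highest success rate.

Download the matching version: developer.nvidia.com/tensorrt-download

After downloading: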

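    tar -xzvf TensorRT-8.6.1.6.Linux.x86_64-gnu.cuda-11.8.tar.gz  # extract the archive
    # Add lib to the library search path
    vim ~/.bashrc
    # Line to add to ~/.bashrc; replace ./ with the absolute path of the extracted
    # directory, because a relative path only resolves from that one directory
    export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:./TensorRT-8.6.1.6/lib
    source ~/.bashrc
    # Or copy the contents of TensorRT-8.6.1.6/lib into cuda/lib64 (may need sudo)
    cp -r ./TensorRT-8.6.1.6/lib/* /usr/local/cuda/lib64/
    # Install the Python package
    cd TensorRT-8.6.1.6/python
    pip install tensorrt-xxx-none-linux_x86_64.whl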
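The archive name encodes both the TensorRT version and the CUDA version it was built for, which makes it easy to check before extracting. The sketch below parses names shaped like the one used above. The regular expression and the "same CUDA major version" check are assumptions for illustration, not NVIDIA's official compatibility rule; the support matrix in the installation guide is the authority.

```python
import re

# Pattern inferred from the single file name used in this article.
_TAR_RE = re.compile(
    r"TensorRT-(?P<trt>\d+(?:\.\d+)+)\.Linux\."
    r"(?P<arch>[^.]+)-gnu\.cuda-(?P<cuda>\d+\.\d+)\.tar\.gz$"
)


def parse_tensorrt_tar(name: str) -> dict:
    """Split an archive name into TensorRT version, architecture, and CUDA version."""
    match = _TAR_RE.search(name)
    if match is None:
        raise ValueError(f"unrecognized archive name: {name}")
    return match.groupdict()


def same_cuda_major(archive_cuda: str, local_cuda: str) -> bool:
    """Rough check only: compare the major CUDA version numbers."""
    return archive_cuda.split(".")[0] == local_cuda.split(".")[0]


info = parse_tensorrt_tar("TensorRT-8.6.1.6.Linux.x86_64-gnu.cuda-11.8.tar.gz")
print(info)
print(same_cuda_major(info["cuda"], "11.7"))
```

With the author's setup (CUDA 11.7 and a cuda-11.8 archive) the rough check passes.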

After installing, verify that it works:

    # Verify the installation:
    python
    >>> import tensorrt
    >>> print(tensorrt.__version__)
    >>> assert tensorrt.Builder(tensorrt.Logger())

If no error is raised, the installation succeeded.
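The same check can be wrapped in a small script so that a failure is reported as a message instead of a traceback. This is a minimal sketch; the function name `tensorrt_status` is hypothetical, and it relies only on the `tensorrt.Builder`, `tensorrt.Logger`, and `tensorrt.__version__` names used in the interactive check above.

```python
import importlib


def tensorrt_status():
    """Return (ok, detail): the version string on success, the reason on failure."""
    try:
        trt = importlib.import_module("tensorrt")
    except (ImportError, OSError) as exc:
        # Typical causes: the wheel is not installed, or the TensorRT
        # shared libraries are not on LD_LIBRARY_PATH.
        return False, f"import failed: {exc}"
    try:
        builder = trt.Builder(trt.Logger(trt.Logger.WARNING))
    except Exception as exc:
        return False, f"could not create a Builder: {exc}"
    if not builder:
        return False, "Builder was created but is not usable"
    return True, str(trt.__version__)


ok, detail = tensorrt_status()
print("TensorRT OK:" if ok else "TensorRT problem:", detail)
```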

Usage

My workflow is: PyTorch -> ONNX -> TensorRT

I use resnet18 as the model to convert.

PyTorch to ONNX

Install onnx. For onnxruntime, install only one of the two builds (CPU or GPU).

    pip install onnx
    pip install onnxruntime      # CPU build
    pip install onnxruntime-gpu  # GPU build
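Both packages install the same `onnxruntime` module, which is why only one of them should be present. To see which build is active, `onnxruntime.get_available_providers()` lists the execution providers it was built with. The sketch below picks a provider list from that result; the helper name `pick_providers` is hypothetical, and the preference order is the one used later in this article.

```python
def pick_providers(available):
    """Keep the preferred providers that this onnxruntime build offers, in order."""
    preferred = ["CUDAExecutionProvider", "CPUExecutionProvider"]
    return [name for name in preferred if name in available]


try:
    import onnxruntime as ort
    available = ort.get_available_providers()
except ImportError:
    # onnxruntime is not installed in this environment
    available = []

print("available:", available)
print("will use:", pick_providers(available))
```

If `CUDAExecutionProvider` is missing from the output, the CPU build is the one installed.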

Convert the PyTorch model to an ONNX model

    import numpy as np
    import onnx
    import onnxruntime as ort
    import torch
    import torch.onnx
    import torchvision

    model = torchvision.models.resnet18(pretrained=False)
    # is_available must be called; the bare function object is always truthy
    device = 'cuda' if torch.cuda.is_available() else 'cpu'
    dummy_input = torch.randn(1, 3, 224, 224, device=device)
    model.to(device)
    model.eval()
    output = model(dummy_input)
    print("pytorch result:", torch.argmax(output))

    # Export with a dynamic batch dimension named "nBatchSize"
    torch.onnx.export(model, dummy_input, './model.onnx',
                      input_names=["input"], output_names=["output"],
                      do_constant_folding=True, verbose=True,
                      keep_initializers_as_inputs=True, opset_version=14,
                      dynamic_axes={"input": {0: "nBatchSize"}, "output": {0: "nBatchSize"}})

    # General case
    # torch.onnx.export(model, torch.randn(1, c, nHeight, nWidth, device="cuda"), './model.onnx', input_names=["x"], output_names=["y", "z"], do_constant_folding=True, verbose=True, keep_initializers_as_inputs=True, opset_version=14, dynamic_axes={"x": {0: "nBatchSize"}, "z": {0: "nBatchSize"}})

    model_onnx_path = './model.onnx'
    # Check that the exported model is valid
    onnx_model = onnx.load(model_onnx_path)
    onnx.checker.check_model(onnx_model)
    # Create an ONNX Runtime session
    ort_session = ort.InferenceSession(model_onnx_path, providers=['CUDAExecutionProvider', 'CPUExecutionProvider'])
    # Prepare the input data
    input_data = {'input': dummy_input.cpu().numpy()}
    # Run inference
    y_pred_onnx = ort_session.run(None, input_data)
    print("onnx result:", np.argmax(y_pred_onnx[0]))
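Comparing only the argmax can hide numerical drift, especially with a randomly initialized network whose logits are close together. A slightly stricter check, sketched below with NumPy, also reports the largest absolute difference and an `allclose` result. The function name `compare_outputs` and the tolerances are assumptions for illustration; an FP16 engine will usually need looser tolerances than an FP32 comparison.

```python
import numpy as np


def compare_outputs(reference, candidate, rtol=1e-3, atol=1e-3):
    """Compare two output arrays of the same shape."""
    reference = np.asarray(reference, dtype=np.float32)
    candidate = np.asarray(candidate, dtype=np.float32)
    if reference.shape != candidate.shape:
        raise ValueError(f"shape mismatch: {reference.shape} vs {candidate.shape}")
    return {
        "max_abs_diff": float(np.max(np.abs(reference - candidate))),
        "allclose": bool(np.allclose(candidate, reference, rtol=rtol, atol=atol)),
        "same_argmax": bool(np.argmax(reference) == np.argmax(candidate)),
    }


# Synthetic data standing in for real model outputs
ref = np.array([[0.1, 2.0, -1.0]], dtype=np.float32)
close = ref + np.float32(1e-4)
far = np.array([[5.0, 0.0, 0.0]], dtype=np.float32)
print(compare_outputs(ref, close))
print(compare_outputs(ref, far))
```

With the variables from the export script, the call would be `compare_outputs(output.detach().cpu().numpy(), y_pred_onnx[0])`.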

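ONNX to TensorRT

On Windows, after installing from the zip package, use TensorRT-8.6.1.6/bin/trtexec.exe to generate the TensorRT engine file.

On Ubuntu, after installing from the tar package, use TensorRT-8.6.1.6/bin/trtexec to generate the TensorRT engine file.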

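    ./trtexec --onnx=model.onnx --saveEngine=model.trt --fp16 --workspace=16 --shapes=input:1x3x224x224

Parameters:

--fp16: enable FP16 precision

--shapes: the input shape. It is set to batch size 1 here to match the input that the test script feeds the engine. TensorRT also supports dynamic batch sizes; try it if you are interested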
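For the dynamic batch case, trtexec takes an optimization profile through `--minShapes`, `--optShapes`, and `--maxShapes`. The sketch below only assembles such a command line as a list of arguments; it does not run trtexec. The helper names and the batch sizes 1, 4, and 8 are illustrative assumptions, and the input name `input` is the one chosen in the ONNX export above.

```python
import shlex


def shape_arg(name, batch, chw=(3, 224, 224)):
    """Format one shape spec such as input:4x3x224x224."""
    dims = "x".join(str(d) for d in (batch, *chw))
    return f"{name}:{dims}"


def trtexec_dynamic_batch(onnx_path, engine_path, input_name="input",
                          min_batch=1, opt_batch=4, max_batch=8, fp16=True):
    """Build a trtexec argument list with a min/opt/max batch profile."""
    if not 1 <= min_batch <= opt_batch <= max_batch:
        raise ValueError("need 1 <= min_batch <= opt_batch <= max_batch")
    cmd = [
        "./trtexec",
        f"--onnx={onnx_path}",
        f"--saveEngine={engine_path}",
        f"--minShapes={shape_arg(input_name, min_batch)}",
        f"--optShapes={shape_arg(input_name, opt_batch)}",
        f"--maxShapes={shape_arg(input_name, max_batch)}",
    ]
    if fp16:
        cmd.append("--fp16")
    return cmd


cmd = trtexec_dynamic_batch("model.onnx", "model.trt")
print(shlex.join(cmd))
```

An engine built this way should accept any batch size between the minimum and maximum at inference time, with kernels tuned for the `opt` shape.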

Using TensorRT

NVIDIA's official usage examples:

trt-samples-for-hackathon-cn/cookbook at master · NVIDIA/trt-samples-for-hackathon-cn (github.com)

Print information about the converted TensorRT model

    import tensorrt as trt

    # Load the TensorRT engine
    logger = trt.Logger(trt.Logger.INFO)
    with open('./model.trt', "rb") as f, trt.Runtime(logger) as runtime:
        engine = runtime.deserialize_cuda_engine(f.read())

    # num_io_tensors pairs with the name-based get_tensor_* calls used below
    for idx in range(engine.num_io_tensors):
        name = engine.get_tensor_name(idx)
        # get_tensor_mode returns a TensorIOMode, so compare it to get a boolean
        is_input = engine.get_tensor_mode(name) == trt.TensorIOMode.INPUT
        op_type = engine.get_tensor_dtype(name)
        shape = engine.get_tensor_shape(name)
        print('input id: ', idx, '\tis input: ', is_input, '\tbinding name: ', name, '\tshape: ', shape, '\ttype: ', op_type)

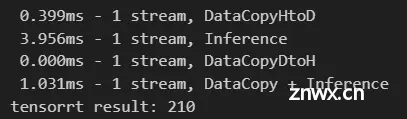
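The shape and data type reported for each tensor determine how many bytes the host and device buffers need at inference time. The arithmetic is just the product of the dimensions times the element size, as in this sketch. The helper `buffer_nbytes` and the string dtype keys are hypothetical; the 1x3x224x224 input and the 1000-class output match resnet18 at batch size 1.

```python
import math

# Bytes per element for a few common tensor types
ITEM_SIZE = {"float32": 4, "float16": 2, "int32": 4, "int8": 1}


def buffer_nbytes(shape, dtype):
    """Bytes needed for a dense tensor of the given shape and dtype."""
    if any(d < 0 for d in shape):
        # -1 marks a dynamic dimension; it has to be resolved to a real size first
        raise ValueError("resolve dynamic dimensions (-1) before sizing a buffer")
    return math.prod(shape) * ITEM_SIZE[dtype]


print(buffer_nbytes((1, 3, 224, 224), "float32"))  # 602112
print(buffer_nbytes((1, 1000), "float32"))         # 4000
```

A shape that still contains -1 cannot be sized, which is why the input shape must be set on the execution context before buffers are allocated.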

Test the converted TensorRT model. The code comes from NVIDIA's cookbook/08-Advance/MultiStream/main.py

    from time import time

    import numpy as np
    import tensorrt as trt
    from cuda import cudart  # install with: pip install cuda-python

    np.random.seed(31193)
    nWarmUp = 10
    nTest = 30
    nB, nC, nH, nW = 1, 3, 224, 224
    # dummy_input is the tensor created in the PyTorch export step,
    # so run this in the same session
    data = dummy_input.cpu().numpy()

    def run1(engine):
        input_name = engine.get_tensor_name(0)
        output_name = engine.get_tensor_name(1)
        output_type = engine.get_tensor_dtype(output_name)

        context = engine.create_execution_context()
        context.set_input_shape(input_name, [nB, nC, nH, nW])
        # Ask the context for the output shape: the engine itself reports -1
        # for a dynamic batch dimension
        output_shape = context.get_tensor_shape(output_name)
        _, stream = cudart.cudaStreamCreate()

        inputH0 = np.ascontiguousarray(data.reshape(-1))
        outputH0 = np.empty(tuple(output_shape), dtype=trt.nptype(output_type))
        _, inputD0 = cudart.cudaMallocAsync(inputH0.nbytes, stream)
        _, outputD0 = cudart.cudaMallocAsync(outputH0.nbytes, stream)

        # do a complete inference
        cudart.cudaMemcpyAsync(inputD0, inputH0.ctypes.data, inputH0.nbytes, cudart.cudaMemcpyKind.cudaMemcpyHostToDevice, stream)
        context.execute_async_v2([int(inputD0), int(outputD0)], stream)
        cudart.cudaMemcpyAsync(outputH0.ctypes.data, outputD0, outputH0.nbytes, cudart.cudaMemcpyKind.cudaMemcpyDeviceToHost, stream)
        cudart.cudaStreamSynchronize(stream)

        # Count time of memory copy from host to device
        for i in range(nWarmUp):
            cudart.cudaMemcpyAsync(inputD0, inputH0.ctypes.data, inputH0.nbytes, cudart.cudaMemcpyKind.cudaMemcpyHostToDevice, stream)

        trtTimeStart = time()
        for i in range(nTest):
            cudart.cudaMemcpyAsync(inputD0, inputH0.ctypes.data, inputH0.nbytes, cudart.cudaMemcpyKind.cudaMemcpyHostToDevice, stream)
        cudart.cudaStreamSynchronize(stream)
        trtTimeEnd = time()
        print("%6.3fms - 1 stream, DataCopyHtoD" % ((trtTimeEnd - trtTimeStart) / nTest * 1000))

        # Count time of inference
        for i in range(nWarmUp):
            context.execute_async_v2([int(inputD0), int(outputD0)], stream)

        trtTimeStart = time()
        for i in range(nTest):
            context.execute_async_v2([int(inputD0), int(outputD0)], stream)
        cudart.cudaStreamSynchronize(stream)
        trtTimeEnd = time()
        print("%6.3fms - 1 stream, Inference" % ((trtTimeEnd - trtTimeStart) / nTest * 1000))

        # Count time of memory copy from device to host
        for i in range(nWarmUp):
            cudart.cudaMemcpyAsync(outputH0.ctypes.data, outputD0, outputH0.nbytes, cudart.cudaMemcpyKind.cudaMemcpyDeviceToHost, stream)

        trtTimeStart = time()
        for i in range(nTest):
            cudart.cudaMemcpyAsync(outputH0.ctypes.data, outputD0, outputH0.nbytes, cudart.cudaMemcpyKind.cudaMemcpyDeviceToHost, stream)
        cudart.cudaStreamSynchronize(stream)
        trtTimeEnd = time()
        print("%6.3fms - 1 stream, DataCopyDtoH" % ((trtTimeEnd - trtTimeStart) / nTest * 1000))

        # Count time of end to end
        for i in range(nWarmUp):
            context.execute_async_v2([int(inputD0), int(outputD0)], stream)

        trtTimeStart = time()
        for i in range(nTest):
            cudart.cudaMemcpyAsync(inputD0, inputH0.ctypes.data, inputH0.nbytes, cudart.cudaMemcpyKind.cudaMemcpyHostToDevice, stream)
            context.execute_async_v2([int(inputD0), int(outputD0)], stream)
            cudart.cudaMemcpyAsync(outputH0.ctypes.data, outputD0, outputH0.nbytes, cudart.cudaMemcpyKind.cudaMemcpyDeviceToHost, stream)
        cudart.cudaStreamSynchronize(stream)
        trtTimeEnd = time()
        print("%6.3fms - 1 stream, DataCopy + Inference" % ((trtTimeEnd - trtTimeStart) / nTest * 1000))

        cudart.cudaStreamDestroy(stream)
        cudart.cudaFree(inputD0)
        cudart.cudaFree(outputD0)

        print("tensorrt result:", np.argmax(outputH0))

    if __name__ == "__main__":
        cudart.cudaDeviceSynchronize()
        with open("./model.trt", "rb") as f:  # read the serialized TensorRT engine
            engine_bytes = f.read()
        runtime = trt.Runtime(trt.Logger(trt.Logger.WARNING))  # create a Runtime (pass in a Logger)
        engine = runtime.deserialize_cuda_engine(engine_bytes)  # load the engine from the file contents
        run1(engine)  # do inference with single stream
        print(dummy_input.shape, dummy_input.dtype)
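Each timing section in the script above follows the same pattern: a few warm-up iterations, a timed loop of asynchronous calls, one synchronization, then an average in milliseconds. That pattern can be factored into a small helper, sketched here with the standard library only. The name `average_ms` and its `sync` parameter are hypothetical. Unlike the script, this sketch also synchronizes once after the warm-up, so queued warm-up work is not counted in the measurement.

```python
from time import perf_counter


def average_ms(step, sync=lambda: None, n_warmup=10, n_test=30):
    """Average wall-clock milliseconds per call of step()."""
    for _ in range(n_warmup):
        step()
    sync()  # make sure warm-up work has finished before timing starts
    start = perf_counter()
    for _ in range(n_test):
        step()
    sync()  # asynchronous work must complete before the clock stops
    return (perf_counter() - start) / n_test * 1000


# Stand-in workload; it records each call so the call count can be checked
calls = []
ms = average_ms(lambda: calls.append(1))
print(f"{ms:6.3f}ms per call over {len(calls)} calls")
```

For the inference timing in the script, `step` would wrap the `context.execute_async_v2(...)` call and `sync` would wrap `cudart.cudaStreamSynchronize(stream)`.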
