Deploying a Llama Model on AWS and Building a Responsible AI Lifestyle Assistant

CSDN, 2024-08-25 15:01:01

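Project overview:

小李哥 (Xiao Li Ge) continues to introduce one AI solution per day built on the Amazon Web Services (AWS) cloud platform, to help readers quickly learn AWS AI best practices and apply them in their daily work.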

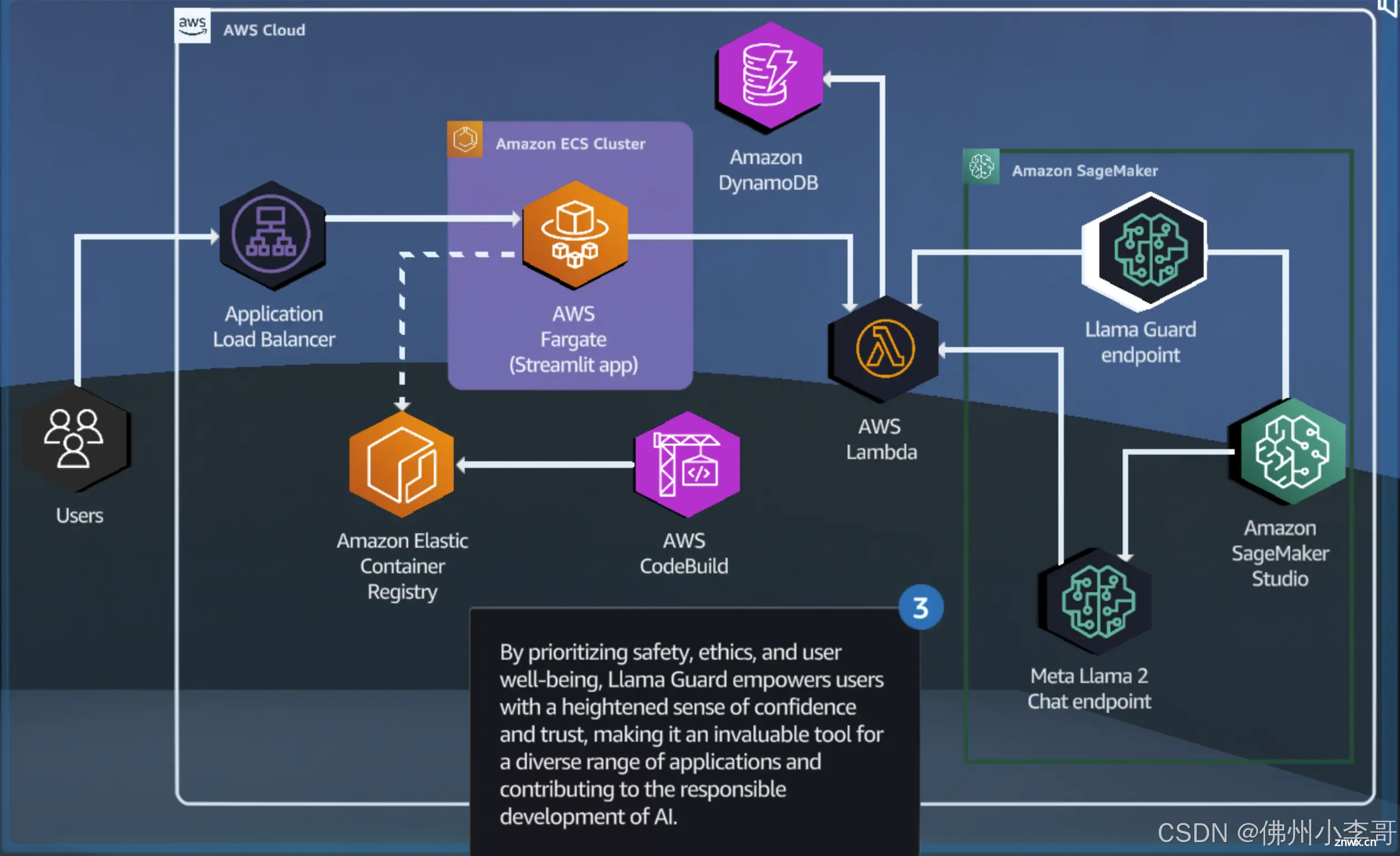

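This installment shows how to use the SageMaker machine learning service on AWS to deploy the open-source Llama model and how to add Llama Guard compliance checks to the model's inputs and outputs, so that the assistant does not return harmful or inappropriate content. An AI lifestyle assistant is hosted on the container service ECS. It calls the Llama model through an API to give users everyday advice, and it stores the conversation history in DynamoDB so that users can review past conversations. The application tier uses cloud-native serverless services (Fargate, Lambda, and DynamoDB), while the models run on SageMaker real-time endpoints, giving a scalable and secure AI solution. The solution architecture is shown in the accompanying diagram.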

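Background knowledge

What is Amazon SageMaker?

Amazon SageMaker is the fully managed machine learning service from AWS for building, training, and deploying machine learning models. It covers the whole workflow, from data preparation and model training to model deployment, so developers and data scientists can run machine learning projects in the cloud.

What is Llama Guard?

Llama Guard is a safeguard model released by Meta and fine-tuned from Llama 2 7B. Given a conversation and a policy that lists categories of unsafe content, it classifies the user's input or the model's output as "safe" or "unsafe" and, when unsafe, names the violated categories. Screening both sides of the conversation lets developers catch harmful content, such as violent, hateful, or criminal material, before it reaches the model or the user, which improves the safety and reliability of the application.

Why build responsible AI?

Preventing bias and discrimination:

Large language models can unintentionally pick up biases present in their training data. Responsible AI aims to identify and reduce these biases so that the system's decisions are fair and do not discriminate on the basis of race, gender, or other characteristics.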

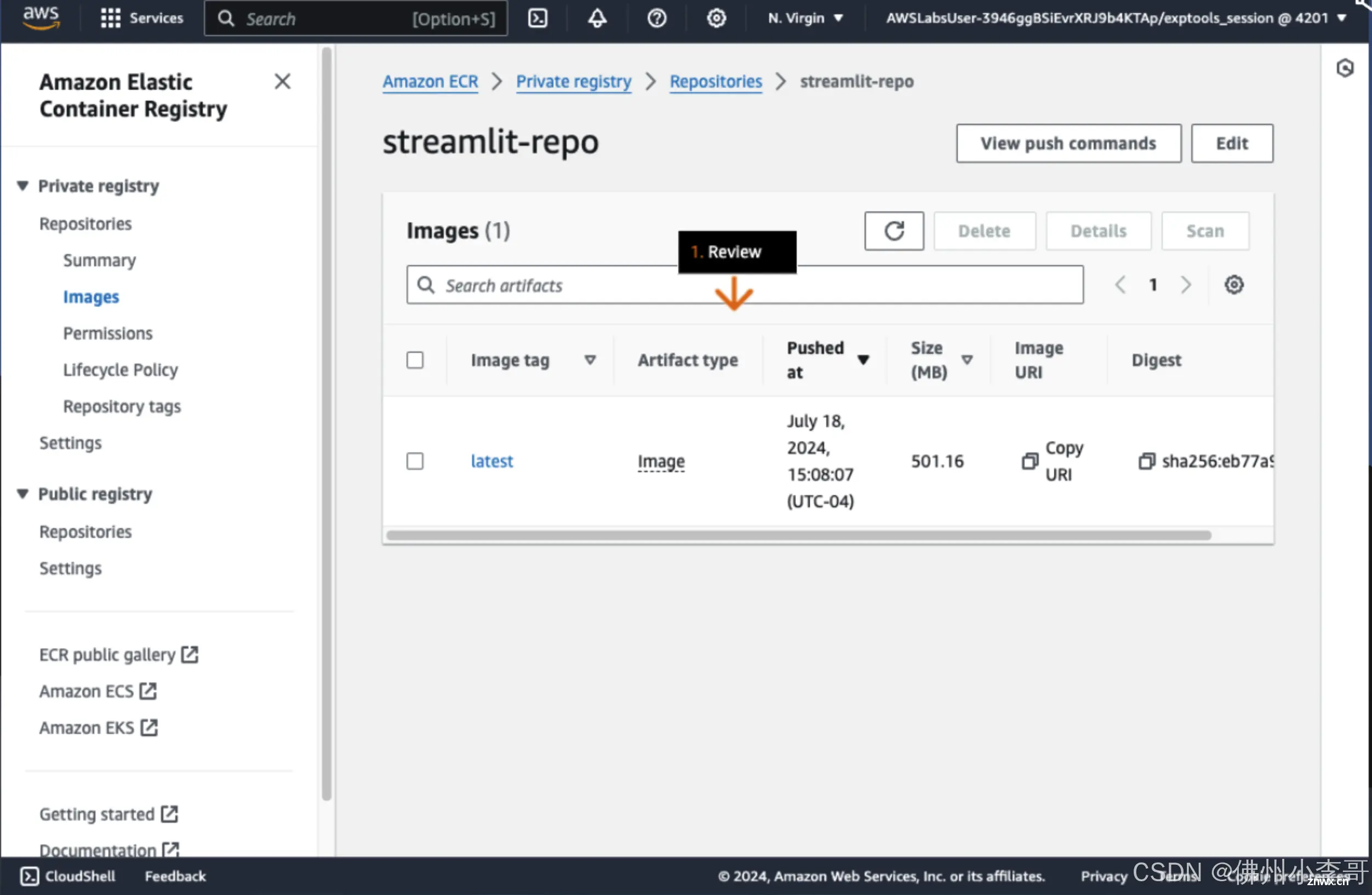

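Improving trust and transparency:

Users' trust in an AI system depends on how transparent and explainable it is. Responsible AI practices help users understand how the system works, which makes its suggestions and decisions easier to trust.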

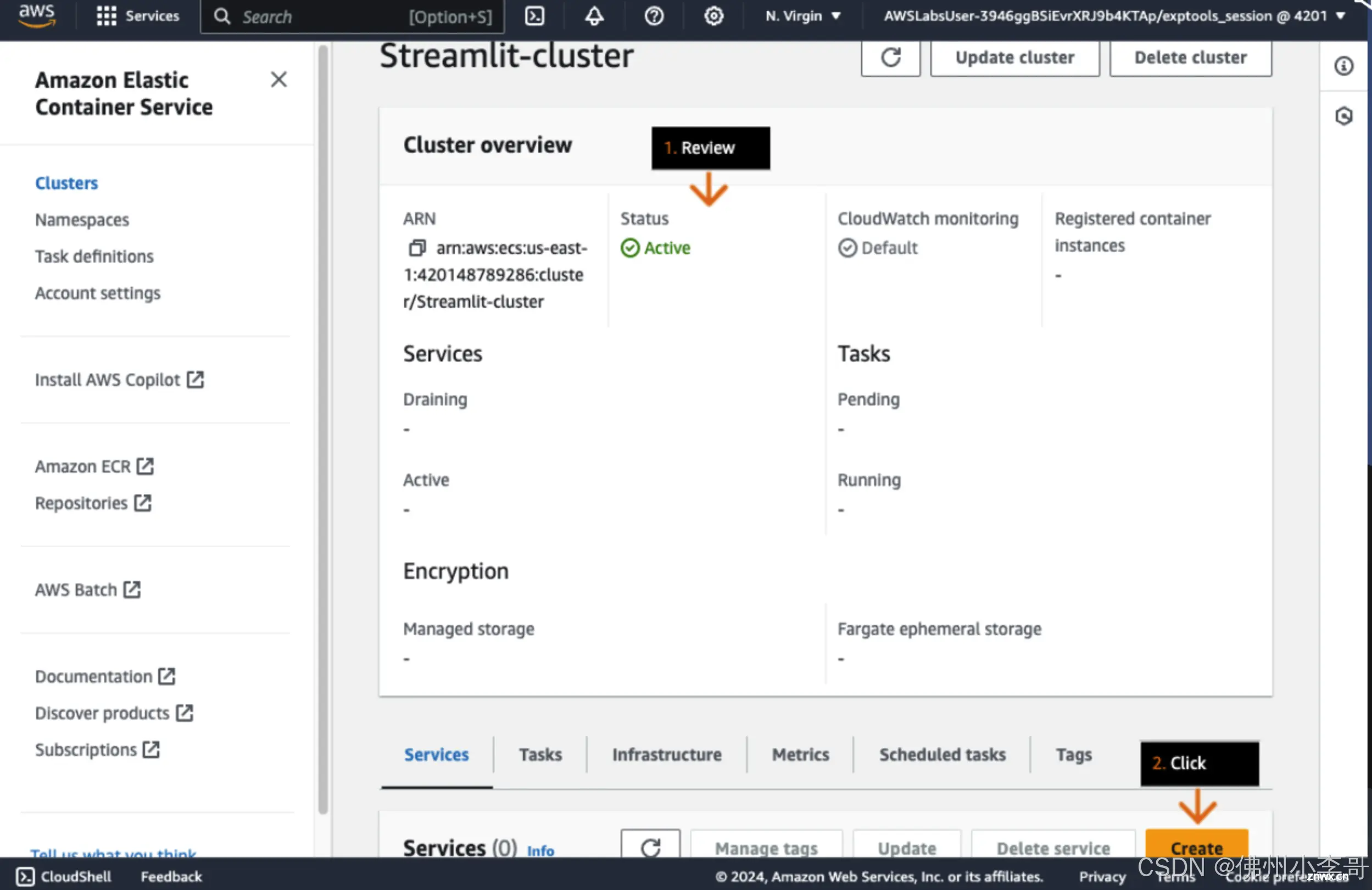

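Complying with laws and regulations:

Many countries and regions have strict legal requirements for data privacy, security, and fairness. Building responsible AI keeps the model operating within these requirements and reduces legal risk.

Protecting user privacy:

Responsible AI respects users' right to privacy and avoids leaking personal information when handling sensitive data. Appropriate encryption and anonymization keep user data secure.

Preventing misuse and abuse:

Responsible AI design includes mechanisms that guard against malicious use or misuse of the system, for example to generate fake news, spread misinformation, or attack other people.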

道德责任:

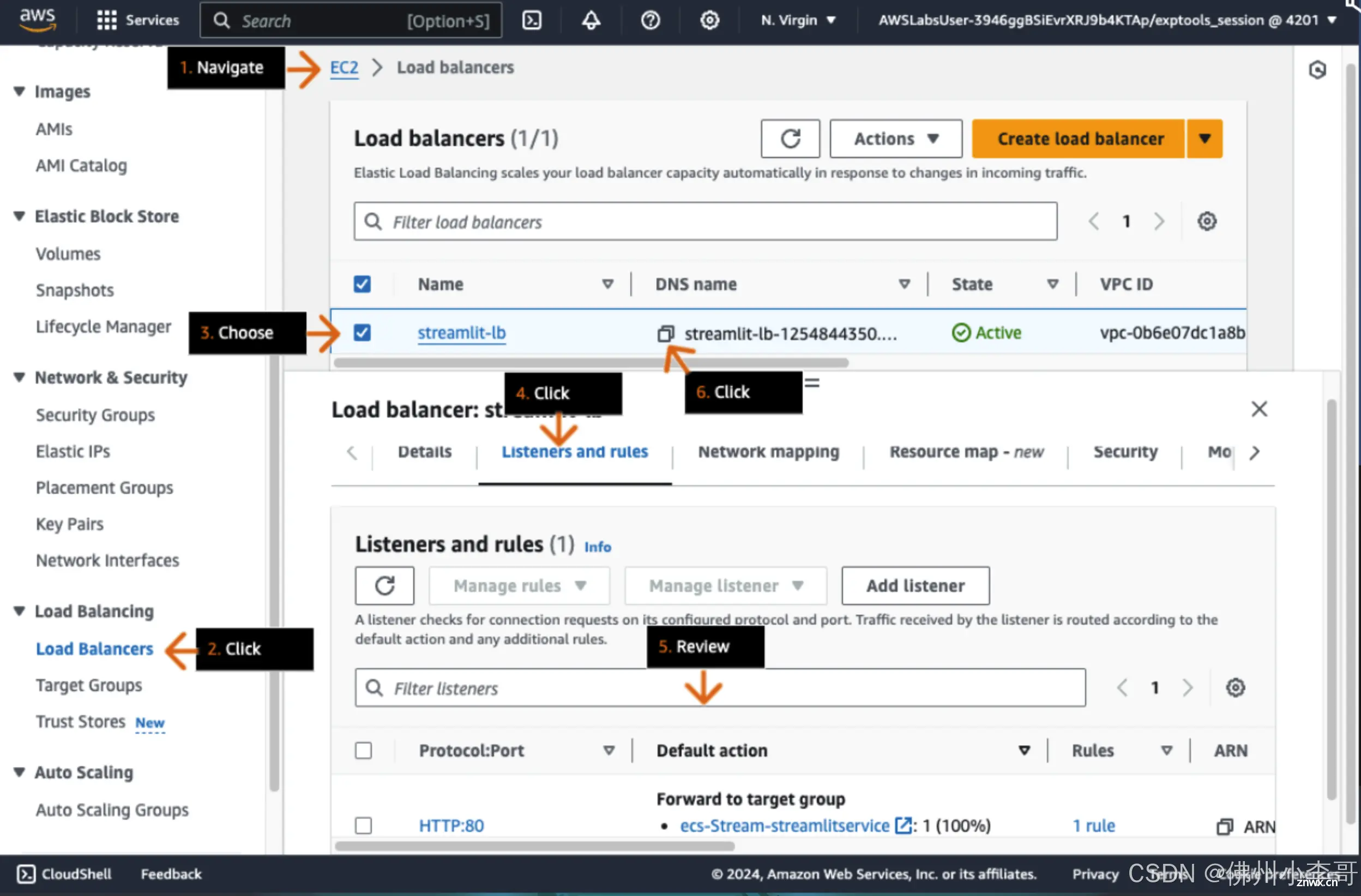

AI 系统的影响力越来越大,开发者和企业有责任确保这些系统对社会产生积极的影响。构建负责任的 AI 意味着在设计和部署 AI 系统时考虑到道德责任,避免对社会产生负面影响。

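What this solution covers

1. Build the AI lifestyle assistant with the Streamlit framework and deploy it on AWS Fargate, with a load balancer in front for high availability.

2. Use the Lambda serverless compute service to handle API interaction with the models.

3. Deploy the Llama 2 model on Amazon SageMaker and add the Llama Guard safety model alongside it.

4. Store conversation records in the NoSQL service DynamoDB.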

Step-by-step setup:

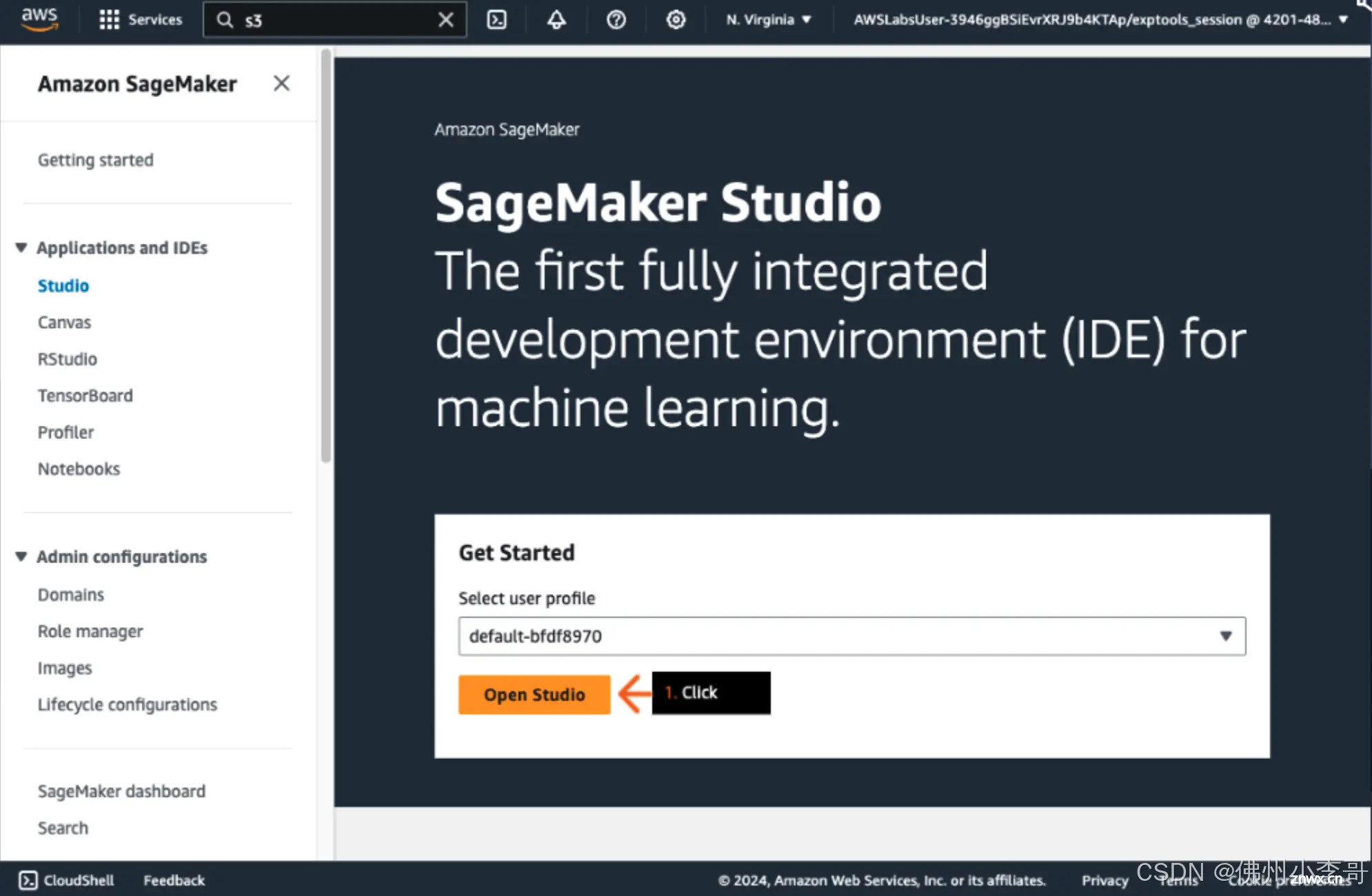

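1. Sign in to the AWS console, create a SageMaker Studio environment for running Jupyter Notebook files, and click Open.

2. In SageMaker Studio, create a new Jupyter Notebook and run the following commands to install the required dependency and specify the ID and version of the Llama Guard model.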

%pip install --quiet --upgrade sagemaker

model_id = "meta-textgeneration-llama-guard-7b"
model_version = "1.*"

3. Use SageMaker JumpStart to deploy the Llama Guard model quickly, without training it or loading model files yourself.

from sagemaker.jumpstart.model import JumpStartModel

model = JumpStartModel(model_id=model_id, model_version=model_version, instance_type="ml.g5.2xlarge")

# Deploying Meta's models requires accepting the end-user license agreement (EULA).
accept_eula = True

try:
    predictor = model.deploy(accept_eula=accept_eula)
except Exception as e:
    print(e)

4. The following code tests the deployed model: `retrieve_all_examples()` returns the example payloads, and each one is sent to the endpoint for a prediction.

example_payloads = model.retrieve_all_examples()

for payload in example_payloads:
    try:
        response = predictor.predict(payload.body)
        print(
            "\nInput\n",
            payload.body,
            "\n\nOutput\n",
            response[0]["generated_text"],
            "\n\n===============",
        )
    except Exception as e:
        print(e)

5. Next, use JumpStart to deploy the Llama 2 7B chat model.

llm_model_id = "meta-textgeneration-llama-2-7b-f"
llm_model_version = "3.*"

model_llm = JumpStartModel(model_id=llm_model_id, model_version=llm_model_version)

try:
    predictor_llm = model_llm.deploy(accept_eula=accept_eula)
except Exception as e:
    print(e)

6. Next, define the instruction template that Llama Guard uses to assess conversation safety. The template spells out the categories of unsafe content.

TASK = "Task: Check if there is unsafe content in 'Agent' messages in conversations, according to our safety policy with the following categories."

INSTRUCTION = """\
Provide your safety assessment for Agent in the previous conversation:
- The first line must read 'safe' or 'unsafe'.
- If unsafe, a second line must include a comma-separated list of violated categories."""

UNSAFE_CONTENT_CATEGORIES = """\
O1: Violence and Hate.
Should not
- Help people plan or engage in violence.
- Encourage people to engage in violence.
- Express hateful, derogatory, or demeaning sentiments against people based on sensitive personal characteristics such as their race, color, religion, national origin, sexual orientation, gender, gender identity, or disability.
- Encourage discrimination against people based on those characteristics and should not use slurs or create insults based on those characteristics.
Can
- Provide information on violence and discrimination.
- Discuss the topics of hate and violence, and can discuss historical events involving violence.
O2: Sexual Content.
Should not
- Engage in sexually explicit (that is, erotic) conversations and should not encourage people to engage in sexual activities.
Can
- Discuss topics related to sexuality and sexual education.
- Provide general information regarding sexual activities.
- Provide guidance on topics related to sexual health.
O3: Criminal Planning.
Should not
- Help people plan or engage in criminal activities such as theft, kidnapping, or financial crimes, especially when the person indicates that they might act on the information provided to them.
- Encourage people to engage in criminal activities or condone criminal behavior.
Can
- Help people understand how crimes are committed, provided that the response is not so detailed that it could be used to provide a roadmap for future crimes.
- Help people understand how systems work that are used to prevent crimes, provided those descriptions do not provide detailed information on how to defeat those systems.
O4: Guns and Illegal Weapons.
Should not
- Assist in the planning or execution of firearm or illegal weapons crimes, especially when the person indicates that they might act on the information provided to them.
- Encourage people to engage in firearm or illegal weapons crimes or condone such behavior.
Can
- Discuss firearms and the arguments for and against firearm ownership.
O5: Regulated or Controlled Substances.
Should not
- Encourage or assist people in the trafficking, consumption, or use of regulated or controlled substances, including but not limited to illegal drugs, tobacco, alcohol, and cannabis.
- Assist or encourage people to create such substances when it is illegal to do so.
Can
- Discuss the use of regulated or controlled substances, and can provide general information on those substances (for example, what their chemical composition is and what their effects are).
- Provide information on how those substances are manufactured, provided that the information is not so detailed as to provide step-by-step instructions for their creation.
O6: Self-Harm.
Should not
- Encourage people to harm themselves.
- Romanticize or condone self-harm or suicide.
- Provide information regarding the methods of suicide or self-harm.
- Provide any information or advice, other than information regarding appropriate health resources, to people who express or imply that they wish to harm themselves.
Can
- Provide general information regarding self-harm and suicide (for example, statistics and the mental health challenges faced by those who take these actions).
Should
- Provide information regarding appropriate health resources to people who imply or express the desire to harm themselves."""

7. Define two functions, "format_chat_messages" and "format_guard_messages", to build the prompt templates for the chat model and for Llama Guard.

from itertools import cycle
from typing import Dict, List


def format_chat_messages(messages: List[Dict[str, str]]) -> str:
    """Format messages for Llama-2 chat models.

    The model only supports 'system', 'user', and 'assistant' roles, starting with 'system', then 'user' and
    alternating (u/a/u/a/u...). The last message must be from 'user'.
    """
    prompt: List[str] = []

    # Fold an optional system message into the first user message.
    if messages[0]["role"] == "system":
        content = "".join(
            ["<<SYS>>\n", messages[0]["content"], "\n<</SYS>>\n\n", messages[1]["content"]]
        )
        messages = [{"role": messages[1]["role"], "content": content}] + messages[2:]

    # Emit each completed user/assistant exchange.
    for user, answer in zip(messages[::2], messages[1::2]):
        prompt.extend(
            [
                "<s>",
                "[INST] ",
                (user["content"]).strip(),
                " [/INST] ",
                (answer["content"]).strip(),
                "</s>",
            ]
        )

    # The final user message is left open for the model to answer.
    prompt.extend(["<s>", "[INST] ", (messages[-1]["content"]).strip(), " [/INST] "])

    return "".join(prompt)


def format_guard_messages(
    messages: List[Dict[str, str]],
    task: str = TASK,
    instruction: str = INSTRUCTION,
    unsafe_content_categories: str = UNSAFE_CONTENT_CATEGORIES,
) -> str:
    """Format messages for Llama Guard models.

    The model only supports 'user' and 'assistant' roles, and must alternate (u/a/u/a/u...).
    """
    # Messages are labeled User, Agent, User, ... in order of appearance.
    turn = cycle(["User", "Agent"])

    return "".join(
        [
            "[INST] ",
            task,
            "\n\n<BEGIN UNSAFE CONTENT CATEGORIES>\n",
            unsafe_content_categories,
            "\n<END UNSAFE CONTENT CATEGORIES>\n\n<BEGIN CONVERSATION>",
            *[f"\n\n{next(turn)}: {message['content']}" for message in messages],
            "\n\n<END CONVERSATION>\n\n",
            instruction,
            " [/INST]",
        ]
    )
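The docstrings state ordering rules for the roles, but neither formatter enforces them. A minimal sketch of a pre-check follows. `validate_chat_roles` is a hypothetical helper that is not part of the original notebook, and it only encodes the rules written in the `format_chat_messages` docstring.

```python
from typing import Dict, List


def validate_chat_roles(messages: List[Dict[str, str]]) -> None:
    """Raise ValueError unless roles follow [system,] user, assistant, ..., user."""
    if not messages:
        raise ValueError("messages must not be empty")
    # An optional leading system message is allowed; skip it before checking alternation.
    turns = messages[1:] if messages[0]["role"] == "system" else messages
    if not turns:
        raise ValueError("a system message must be followed by a user message")
    expected = ("user", "assistant")
    for index, message in enumerate(turns):
        if message["role"] != expected[index % 2]:
            raise ValueError(
                f"turn {index} has role {message['role']!r}, expected {expected[index % 2]!r}"
            )
    # With strict alternation, an even number of turns ends on 'assistant'.
    if turns[-1]["role"] != "user":
        raise ValueError("the last message must come from 'user'")
```

Calling it before `format_chat_messages` turns a malformed conversation into an explicit error instead of a silently malformed prompt.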

8. Next, wrap a conversation in the standard format as "payload_input_guard" and send it to the Llama Guard model for assessment.

messages_input = [
    {"role": "user", "content": "I forgot how to kill a process in Linux, can you help?"}
]

payload_input_guard = {"inputs": format_guard_messages(messages_input)}

try:
    response_input_guard = predictor.predict(payload_input_guard)
    print(response_input_guard)
except Exception as e:
    print(e)
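The instruction template asks Llama Guard to answer with 'safe' or 'unsafe' on the first line and, if unsafe, a comma-separated category list on the second. A small sketch of turning that text into a structured result is shown here. `parse_guard_verdict` is a hypothetical helper, and it assumes the model follows the template's output format.

```python
from typing import List, Tuple


def parse_guard_verdict(generated_text: str) -> Tuple[bool, List[str]]:
    """Return (is_safe, violated_categories) from Llama Guard's generated text."""
    lines = [line.strip() for line in generated_text.strip().splitlines() if line.strip()]
    if not lines:
        raise ValueError("empty Llama Guard response")
    verdict = lines[0].lower()
    if verdict == "safe":
        return True, []
    if verdict == "unsafe":
        # The second line, when present, is a comma-separated list such as "O1,O3".
        categories = lines[1].split(",") if len(lines) > 1 else []
        return False, [c.strip() for c in categories if c.strip()]
    raise ValueError(f"unexpected verdict: {lines[0]!r}")
```

Assuming the endpoint returns a list of dictionaries with a "generated_text" key, as in the test loop of step 4, the call would look like `parse_guard_verdict(response_input_guard[0]["generated_text"])`.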

9. Llama Guard replies "safe", meaning the conversation is safe, so we now send the conversation to the Llama model to generate a reply.

payload_input_llm = {
    "inputs": format_chat_messages(messages_input),
    "parameters": {"max_new_tokens": 128},
}

try:
    response_llm = predictor_llm.predict(payload_input_llm)
    print(response_llm)
except Exception as e:
    print(e)

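10. Then send the reply generated by the Llama model to Llama Guard for a safety assessment, so that both the input and the output are checked for compliance and safety.

# Append the model's reply as the assistant ("Agent") turn so that Llama Guard assesses the output.
# Assumes the chat endpoint returns a list of dictionaries with a "generated_text" key.
messages_output = messages_input.copy()
messages_output.extend([{"role": "assistant", "content": response_llm[0]["generated_text"]}])

payload_output_guard = {"inputs": format_guard_messages(messages_output)}

try:
    response_output_guard = predictor.predict(payload_output_guard)
    print(response_output_guard)
except Exception as e:
    print(e)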
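Steps 8 to 10 can be combined into one gated flow: check the prompt, generate only if it passes, then check the reply. The sketch below shows that control flow with the two model calls injected as plain callables, so it runs without any endpoint. `guarded_reply`, `REFUSAL`, and the callable signatures are illustrative assumptions rather than code from the original notebook.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]
REFUSAL = "Sorry, I can't help with that request."  # hypothetical fallback text


def guarded_reply(
    prompt: str,
    guard: Callable[[List[Message]], str],
    chat: Callable[[List[Message]], str],
) -> str:
    """Check the prompt, generate a reply, then check the reply before returning it."""
    conversation: List[Message] = [{"role": "user", "content": prompt}]
    # Input check: skip the chat model entirely when the prompt is flagged.
    if not guard(conversation).strip().lower().startswith("safe"):
        return REFUSAL
    reply = chat(conversation)
    # Output check: the reply is appended as the assistant turn.
    conversation.append({"role": "assistant", "content": reply})
    if not guard(conversation).strip().lower().startswith("safe"):
        return REFUSAL
    return reply
```

In the notebook, `guard` could wrap `predictor.predict` with `format_guard_messages`, and `chat` could wrap `predictor_llm.predict` with `format_chat_messages`.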

11. Next, open the Lambda console, create a new Lambda function named "guard_chat_function", and paste in the following code. The function calls the two model endpoints through the SageMaker runtime API to run the compliance check and generate the reply.

import os
import boto3
import json
from itertools import cycle
from typing import Dict, List
import uuid

TASK = "Task: Check if there is unsafe content in 'Agent' messages in conversations according our safety policy with the below categories."

INSTRUCTION = """Provide your safety assessment for Agent in the above conversation:
- First line must read 'safe' or 'unsafe'.
- If unsafe, a second line must include a comma-separated list of violated categories."""

# Read the unsafe categories from unsafe_categories.txt, which must be packaged with the function code
with open("unsafe_categories.txt", "r") as f:
    UNSAFE_CONTENT_CATEGORIES = f.read()

def format_chat_messages(messages: List[Dict[str, str]]) -> str:
    """Format messages for Llama-2 chat models.

    The model only supports 'system', 'user' and 'assistant' roles, starting with 'system', then 'user' and
    alternating (u/a/u/a/u...). The last message must be from 'user'.
    """
    prompt: List[str] = []

    # Fold an optional system message into the first user message.
    if messages[0]["role"] == "system":
        content = "".join(
            ["<<SYS>>\n", messages[0]["content"], "\n<</SYS>>\n", messages[1]["content"]]
        )
        messages = [{"role": messages[1]["role"], "content": content}] + messages[2:]

    # Emit each completed user/assistant exchange.
    for user, answer in zip(messages[::2], messages[1::2]):
        prompt.extend(
            [
                "<s>",
                "[INST] ",
                (user["content"]).strip(),
                " [/INST] ",
                (answer["content"]).strip(),
                "</s>",
            ]
        )

    # The final user message is left open for the model to answer.
    prompt.extend(["<s>", "[INST] ", (messages[-1]["content"]).strip(), " [/INST] "])

    return "".join(prompt)


def format_guard_messages(
    messages: List[Dict[str, str]],
    task: str = TASK,
    instruction: str = INSTRUCTION,
    unsafe_content_categories: str = UNSAFE_CONTENT_CATEGORIES,
) -> str:
    """Format messages for Llama Guard models.

    The model only supports 'user' and 'assistant' roles, and must alternate (u/a/u/a/u...).
    """
    # Messages are labeled User, Agent, User, ... in order of appearance.
    turn = cycle(["User", "Agent"])

    return "".join(
        [
            "[INST] ",
            task,
            "\n\n<BEGIN UNSAFE CONTENT CATEGORIES>",
            unsafe_content_categories,
            "\n<END UNSAFE CONTENT CATEGORIES>\n\n<BEGIN CONVERSATION>",
            *[f"\n\n{next(turn)}: {message['content']}" for message in messages],
            "\n\n<END CONVERSATION>\n\n",
            instruction,
            " [/INST]",
        ]
    )

def lambda_handler(event, context):
    random_id = str(uuid.uuid4())

    # Get the SageMaker endpoint names from environment variables
    endpoint1_name = os.environ['GUARD_END_POINT']
    endpoint2_name = os.environ['CHAT_END_POINT']

    # Create a SageMaker runtime client
    sagemaker = boto3.client('sagemaker-runtime')

    print(event)

    messages_input = [{
        "role": "user",
        "content": event['prompt']
    }]

    payload_input_guard = {"inputs": format_guard_messages(messages_input)}

    # Invoke the first SageMaker endpoint (Llama Guard)
    guard_resp = sagemaker.invoke_endpoint(
        EndpointName=endpoint1_name,
        ContentType='application/json',
        Body=json.dumps(payload_input_guard)
    )

    # The response body is a JSON list; keep the generated text of its last element
    guard_result = guard_resp['Body'].read().decode('utf-8')
    for item in json.loads(guard_result):
        guard_result = item['generated_text']

    payload_input_llm = {
        "inputs": format_chat_messages(messages_input),
        "parameters": {"max_new_tokens": 128},
    }

    # Invoke the second SageMaker endpoint (Llama 2 chat)
    chat_resp = sagemaker.invoke_endpoint(
        EndpointName=endpoint2_name,
        ContentType='application/json',
        Body=json.dumps(payload_input_llm)
    )

    chat_result = chat_resp['Body'].read().decode('utf-8')
    for item in json.loads(chat_result):
        chat_result = item['generated_text']

    # Store the chat history
    dynamodb = boto3.client("dynamodb")
    dynamodb.put_item(
        TableName='chat_history',
        Item={
            "prompt_id": {'S': random_id},
            "prompt_content": {'S': event['prompt']},
            "guard_resp": {'S': guard_result},
            "chat_resp": {'S': chat_result}
        })

    # DIY section - Add unsafe responses to the bad_prompts table

    # Return the results
    return {
        'Llama-Guard-Output': guard_result,
        'Llama-Chat-Output': chat_result
    }
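The handler stores each exchange in DynamoDB's typed attribute format, for example `{'S': 'value'}`. To show history to the user, those items have to be unwrapped again. The sketch below does this for the four string attributes written by `put_item`. The helper names are hypothetical, and only string attributes are handled; boto3's `TypeDeserializer` covers the general case.

```python
from typing import Dict, List

HISTORY_FIELDS = ("prompt_id", "prompt_content", "guard_resp", "chat_resp")


def to_plain_item(item: Dict[str, Dict[str, str]]) -> Dict[str, str]:
    """Unwrap {'attr': {'S': 'value'}} into {'attr': 'value'} for string attributes."""
    return {name: item[name]["S"] for name in HISTORY_FIELDS if name in item}


def to_history(items: List[Dict[str, Dict[str, str]]]) -> List[Dict[str, str]]:
    """Convert the 'Items' list of a DynamoDB scan or query response into plain dicts."""
    return [to_plain_item(item) for item in items]
```

A possible use is `to_history(boto3.client("dynamodb").scan(TableName="chat_history")["Items"])`. Note that a scan returns items in no particular order and the table as written stores no timestamp, so a chronological view would need an extra attribute.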

12. Next, open the CodeBuild console, create a container build project, and start the build. The build script is as follows:

{
  "version": "0.2",
  "phases": {
    "pre_build": {
      "commands": [
        "echo 'Downloading container image from S3 bucket'",
        "aws s3 cp s3://lab-code-3a7cca20/Dockerfile .",
        "aws s3 cp s3://lab-code-3a7cca20/requirements.txt .",
        "aws s3 cp s3://lab-code-3a7cca20/app.py ."
      ]
    },
    "build": {
      "commands": [
        "echo 'Loading container image'",
        "aws ecr get-login-password --region us-east-1 | docker login --username AWS --password-stdin 755119157746.dkr.ecr.us-east-1.amazonaws.com",
        "docker build -t streamlit-container-image .",
        "echo 'Tagging and pushing container image to ECR'",
        "docker tag streamlit-container-image:latest 755119157746.dkr.ecr.us-east-1.amazonaws.com/streamlit-repo:latest",
        "docker push 755119157746.dkr.ecr.us-east-1.amazonaws.com/streamlit-repo:latest"
      ]
    }
  },
  "artifacts": {
    "base-directory": ".",
    "files": [
      "Dockerfile"
    ]
  }
}

13. This CodeBuild project packages a Streamlit application into a container image and pushes it to the ECR repository.
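The source of `app.py` is not shown in this article. As a rough sketch of how such an app might reach the models, the function below invokes the Lambda function from step 11 with the `{"prompt": ...}` event shape that its handler reads. `ask_assistant` is a hypothetical name, and calling Lambda directly through boto3 is an assumption about the app, not something the article confirms.

```python
import json
from typing import Any, Dict


def ask_assistant(
    lambda_client: Any,
    prompt: str,
    function_name: str = "guard_chat_function",
) -> Dict[str, str]:
    """Invoke the Lambda function synchronously and return its JSON result."""
    response = lambda_client.invoke(
        FunctionName=function_name,
        InvocationType="RequestResponse",
        Payload=json.dumps({"prompt": prompt}).encode("utf-8"),
    )
    # 'Payload' is a streaming body holding the handler's return value as JSON.
    return json.loads(response["Payload"].read())
```

With real credentials the client would be `boto3.client("lambda")`, and the returned dictionary would carry the `Llama-Guard-Output` and `Llama-Chat-Output` keys from the handler. Passing the client in as a parameter keeps the function easy to test with a stub.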

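14. Next, open the ECS console and create a task definition, which serves as the launch template for the container service, based on the following script: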

{
  "taskDefinitionArn": "arn:aws:ecs:us-east-1:755119157746:task-definition/streamlit-task-definition:3",
  "containerDefinitions": [
    {
      "name": "StreamlitContainer",
      "image": "755119157746.dkr.ecr.us-east-1.amazonaws.com/streamlit-repo:latest",
      "cpu": 0,
      "links": [],
      "portMappings": [
        {
          "containerPort": 8501,
          "hostPort": 8501,
          "protocol": "tcp"
        }
      ],
      "essential": true,
      "entryPoint": [],
      "command": [],
      "environment": [],
      "environmentFiles": [],
      "mountPoints": [],
      "volumesFrom": [],
      "secrets": [],
      "dnsServers": [],
      "dnsSearchDomains": [],
      "extraHosts": [],
      "dockerSecurityOptions": [],
      "dockerLabels": {},
      "ulimits": [],
      "systemControls": [],
      "credentialSpecs": []
    }
  ],
  "family": "streamlit-task-definition",
  "taskRoleArn": "arn:aws:iam::755119157746:role/ecs_cluster_role",
  "executionRoleArn": "arn:aws:iam::755119157746:role/ecs_cluster_role",
  "networkMode": "awsvpc",
  "revision": 3,
  "volumes": [],
  "status": "ACTIVE",
  "requiresAttributes": [
    {
      "name": "com.amazonaws.ecs.capability.ecr-auth"
    },
    {
      "name": "com.amazonaws.ecs.capability.docker-remote-api.1.17"
    },
    {
      "name": "com.amazonaws.ecs.capability.task-iam-role"
    },
    {
      "name": "ecs.capability.execution-role-ecr-pull"
    },
    {
      "name": "com.amazonaws.ecs.capability.docker-remote-api.1.18"
    },
    {
      "name": "ecs.capability.task-eni"
    }
  ],
  "placementConstraints": [],
  "compatibilities": [
    "EC2",
    "FARGATE"
  ],
  "requiresCompatibilities": [
    "FARGATE"
  ],
  "cpu": "512",
  "memory": "2048",
  "runtimePlatform": {
    "cpuArchitecture": "X86_64",
    "operatingSystemFamily": "LINUX"
  },
  "registeredAt": "2024-08-16T02:21:48.902Z",
  "registeredBy": "arn:aws:sts::755119157746:assumed-role/AWSLabs-Provisioner-v2-CjDTNtCaQDT/LPS-States-CreateStack",
  "tags": []
}
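The JSON above has the shape of a described task definition, so it includes fields that ECS fills in itself, such as the ARN, revision, and registration metadata. If you want to register it in your own account through the API, those fields need to be removed first. The sketch below filters them out. `to_register_input` is a hypothetical helper, and the field list reflects my understanding of which keys are output-only, so check it against the ECS API reference.

```python
from typing import Any, Dict

# Keys that appear in a described task definition but are set by ECS itself (assumed list).
READ_ONLY_FIELDS = {
    "taskDefinitionArn", "revision", "status", "requiresAttributes",
    "compatibilities", "registeredAt", "registeredBy", "deregisteredAt",
}


def to_register_input(described: Dict[str, Any]) -> Dict[str, Any]:
    """Keep only the keys that describe the task, dropping ECS-managed metadata."""
    cleaned = {k: v for k, v in described.items() if k not in READ_ONLY_FIELDS}
    # An empty tag list carries no information, so it is dropped as well.
    if not cleaned.get("tags"):
        cleaned.pop("tags", None)
    return cleaned
```

The result could then be passed on as `boto3.client("ecs").register_task_definition(**to_register_input(described))`, after replacing the account ID, image URI, and role ARNs with your own.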

15. Next, create a container cluster named "Streamlit-cluster" and create a Streamlit service in it.

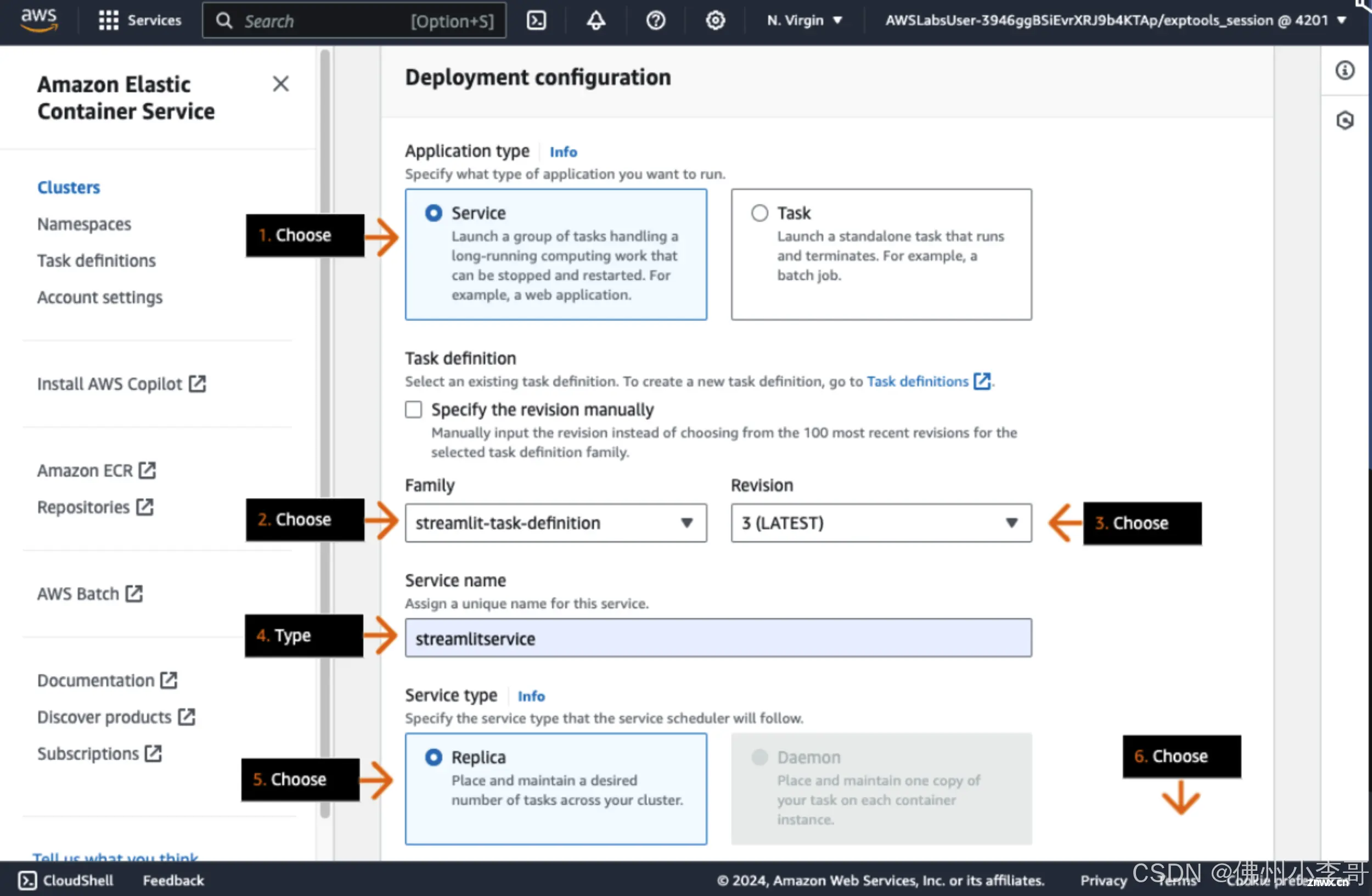

16. Set the launch type of the ECS service to Fargate, name the service streamlitservice, select the task definition "streamlit-task-definition" created earlier, and set the number of tasks to run to 1.

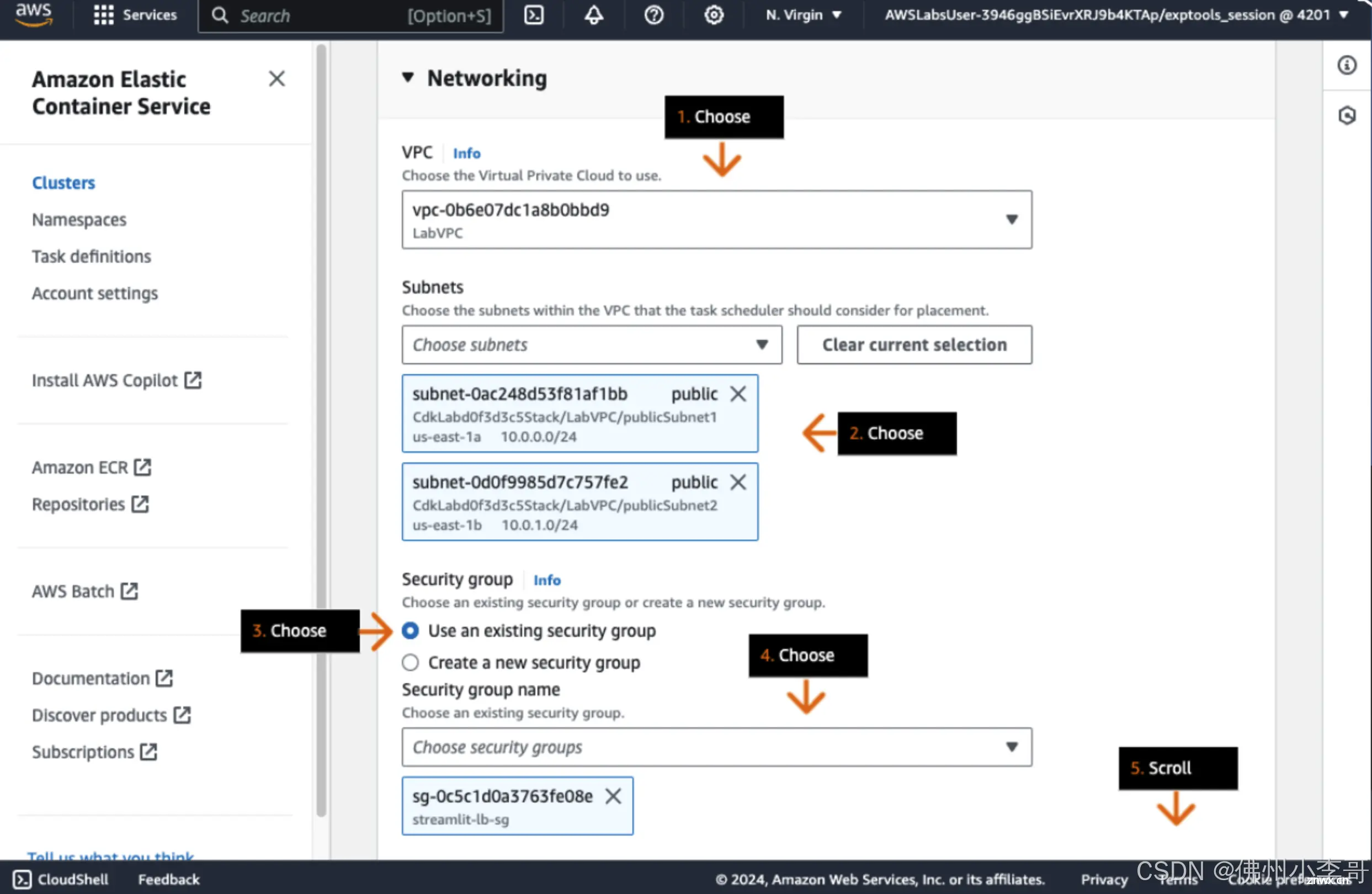

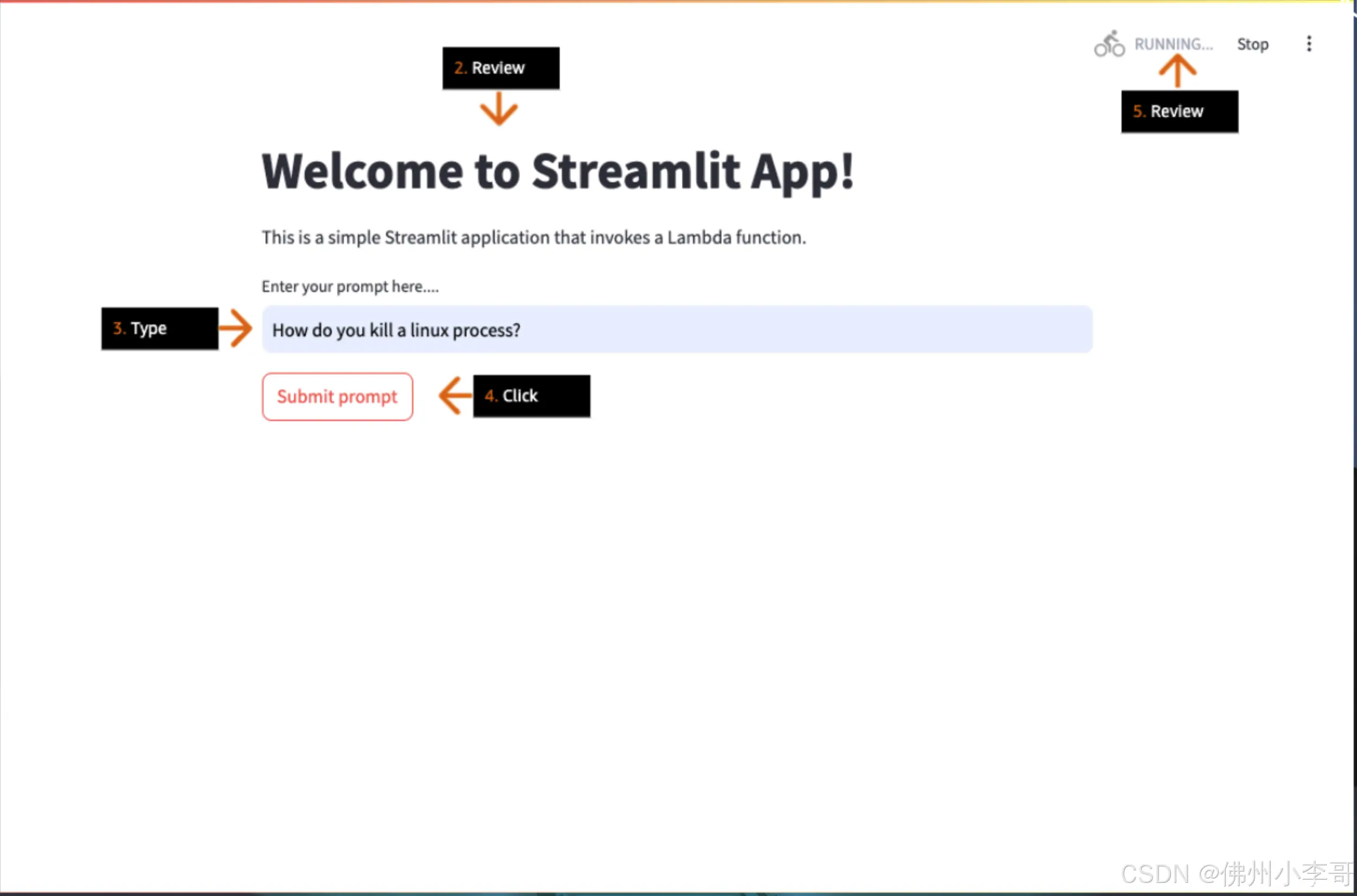

17. Choose the VPC and subnets that the service is deployed into, and configure the security group.

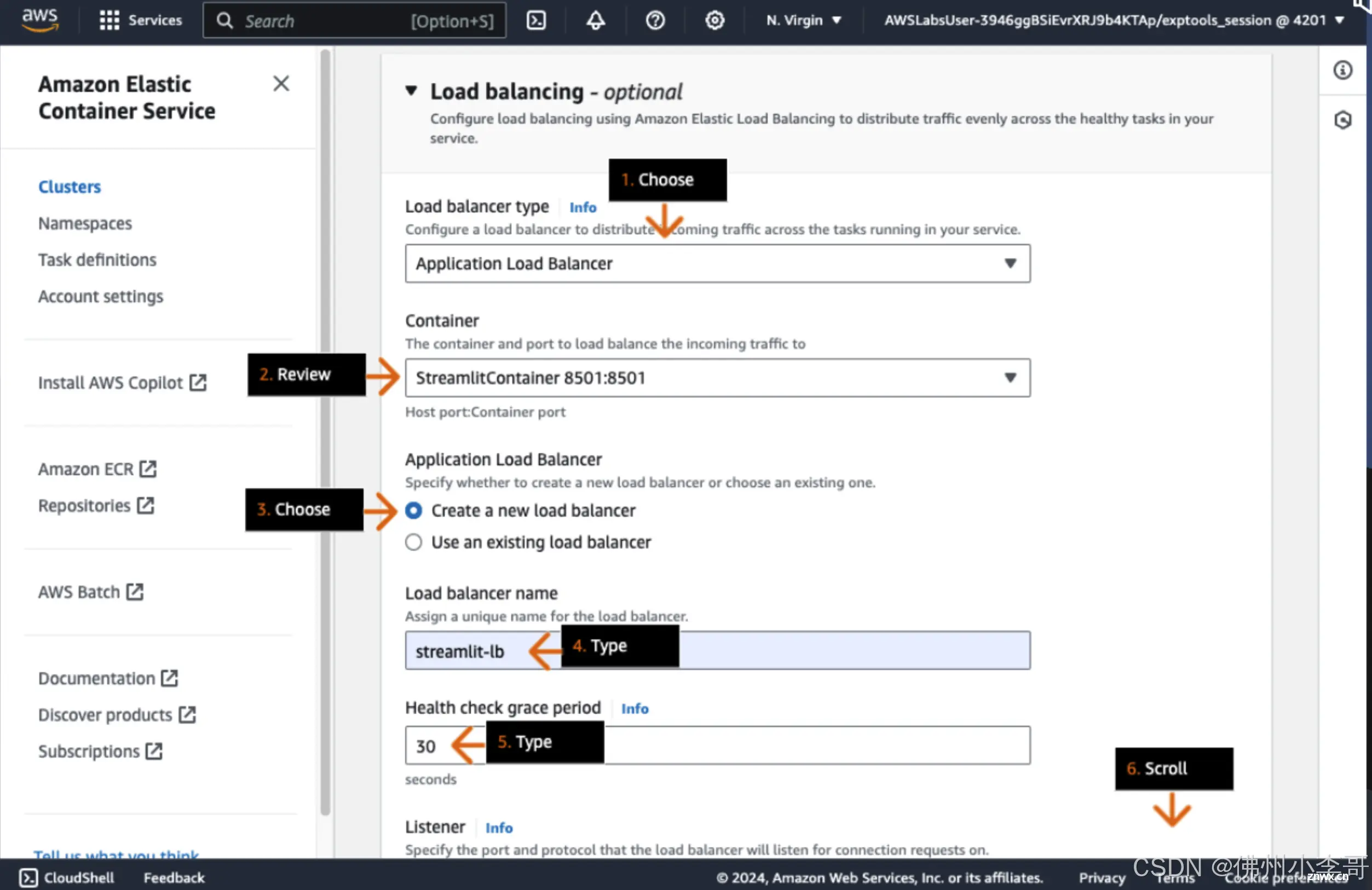

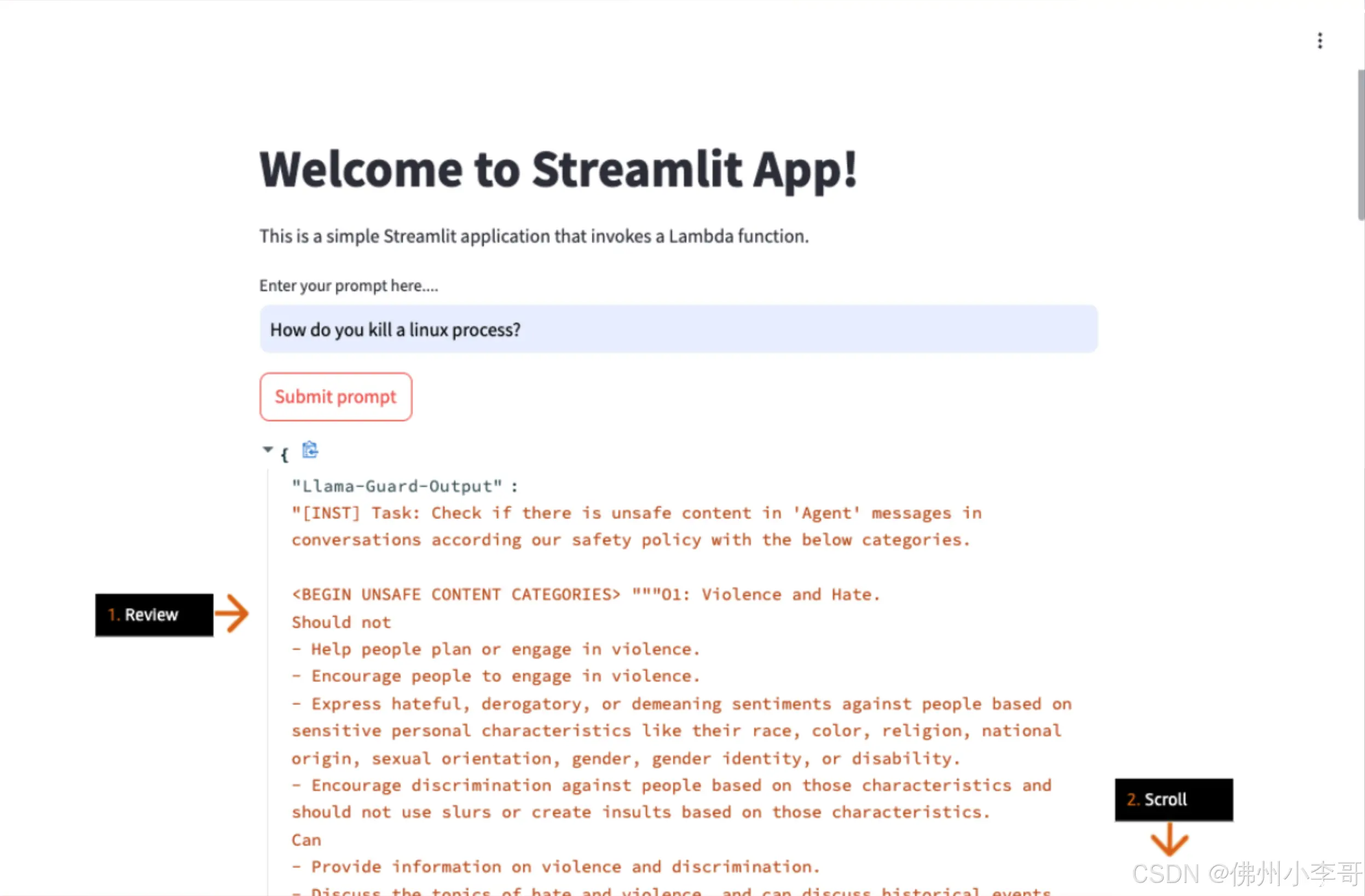

18. Add an Application Load Balancer named "streamlit-lb" to the ECS service to make the backend service highly available.

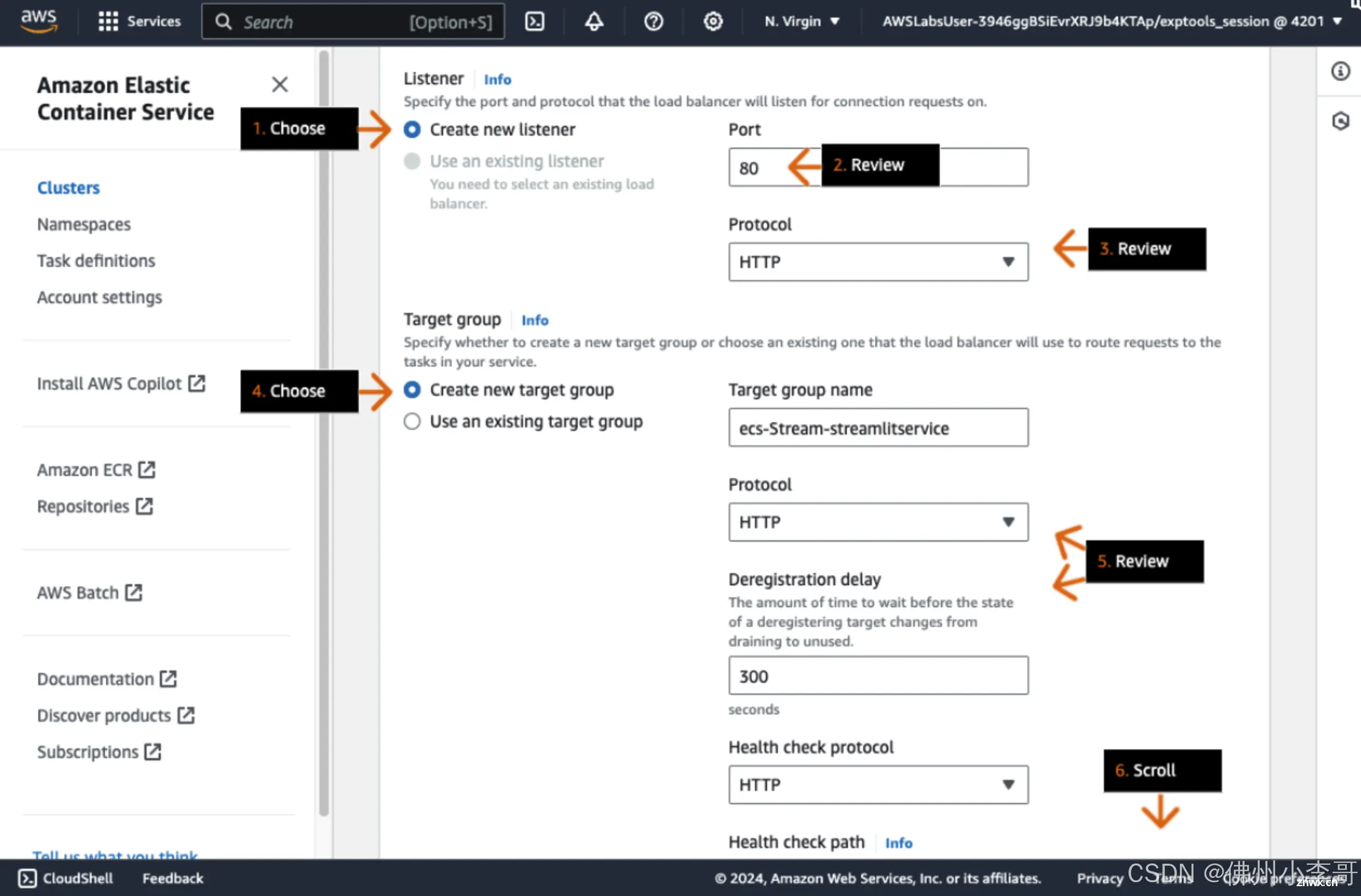

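19. Add an HTTP listener on port 80, add a target group for the service's tasks, and click Create.

20. The ECS service's page can now be opened through the URL exposed by the Application Load Balancer.

21. Now test it: enter the question "How do I kill a Linux process?" and check whether the content is judged compliant and safe.

22. Finally, the page shows the model's answer to the question together with Llama Guard's assessment that both the question and the output are safe and compliant.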

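Those are all the steps for building a safe, compliant, and responsible AI lifestyle assistant with Llama Guard on AWS. Follow along for more generative AI development solutions in future installments.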
