如何在亚马逊云科技AWS上利用LoRA高效微调AI大模型减少预测偏差

CSDN 2024-08-06 09:01:03 阅读 90

简介:

小李哥将继续每天介绍一个基于亚马逊云科技AWS云计算平台的全球前沿AI技术解决方案,帮助大家快速了解国际上最热门的云计算平台亚马逊云科技AWS AI最佳实践,并应用到自己的日常工作里。

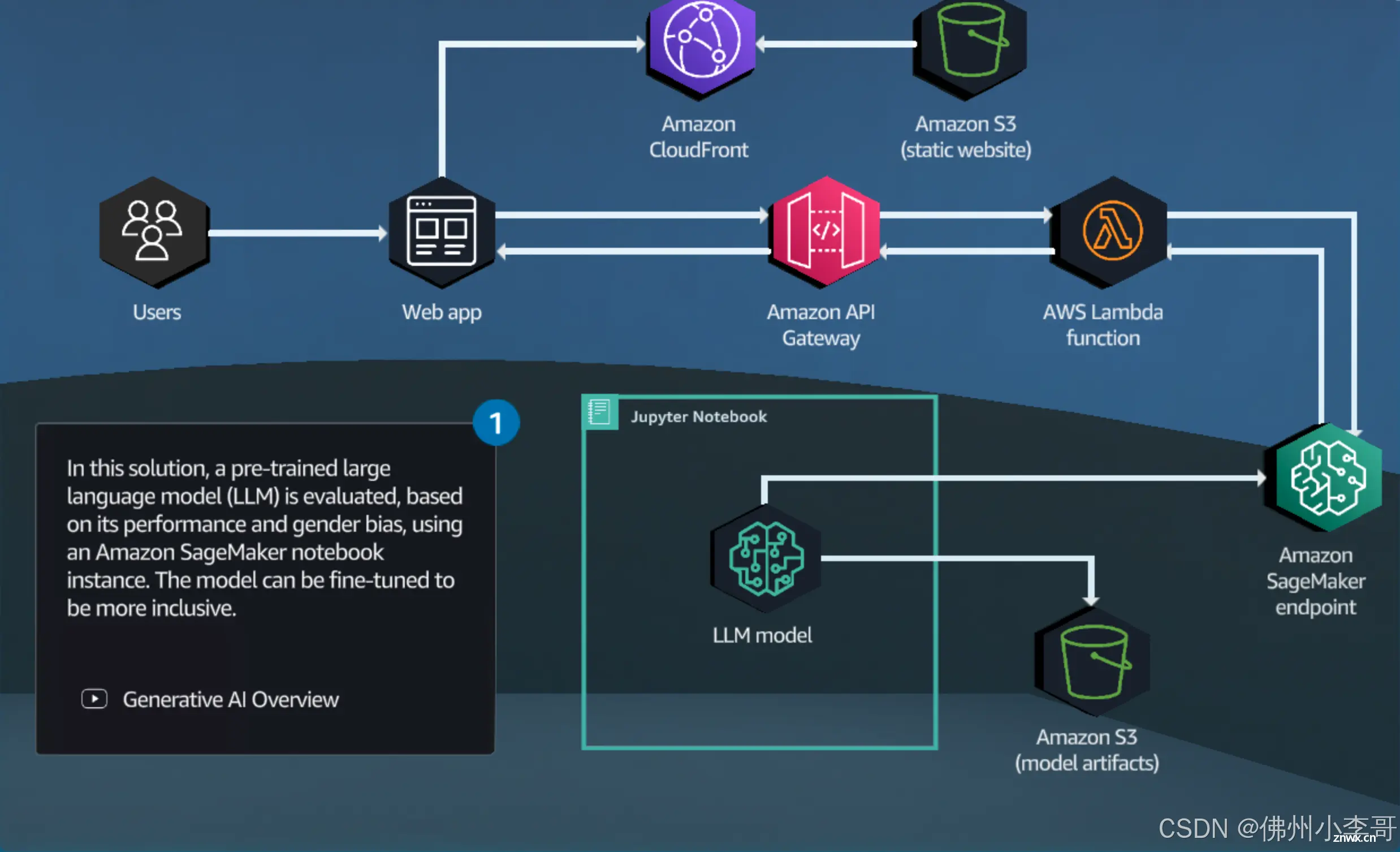

在机器学习和人工智能领域,生成偏差(Generative Bias) 是指在生成模型或生成式算法中所引入的偏差。生成偏差可能导致模型生成的输出结果不公平、不准确或不符合预期。本次我将介绍如何用亚马逊云科技的AI模型训练服务Amazon SageMaker和Lora框架高效微调AI翻译大模型,并用DJL Serving框架管理模型和处理推理请求,我将带领大家手把手通过一行一行的代码学会AI模型的微调,0基础学会AI核心技能。本架构设计还包括了与用户交互的前后端应用,全部采用了云原生Serverless架构,提供可扩展和安全的AI应用解决方案。本方案架构图如下

项目开发背景知识

Dolly 3B 大模型介绍

Databricks 的dolly-v2-3b 是一种基于Databricks 机器学习平台训练的指令跟随大型语言模型,可以用于商业用途,专为自然语言处理任务而设计。它能够理解和生成多种语言的文本,支持翻译、摘要、问答等多种应用场景。Dolly 3B 拥有30亿个参数,具备强大的语言理解和生成能力。通过大规模的预训练数据集和复杂的模型架构,Dolly 3B 在处理复杂的语言任务时表现出色。

使用 Dolly 3B,开发者可以轻松实现跨语言翻译、文本生成和语义分析等任务。此外,Dolly 3B 还支持在特定领域内的定制化微调,使其在特定应用场景中表现更加精准和高效。

什么是微调

微调是指在预训练模型的基础上,通过在特定任务或领域的数据集上进行进一步训练,以提高模型在特定应用场景中的表现。微调的过程通常涉及以下几个步骤:

选择预训练模型:选择一个已经在大规模数据集上预训练过的模型,如 Dolly 3B。准备微调数据:收集和整理适用于特定任务或领域的数据集。这些数据可以包括分类、回归、翻译等任务的示例。设置训练参数:根据具体任务调整训练参数,如学习率、批量大小和训练轮数等。进行微调训练:使用准备好的数据和训练参数对预训练模型进行进一步训练,使其在特定任务上的表现得到优化。评估和部署:评估微调后的模型在验证集上的性能,并将其部署到实际应用中。

通过微调,开发者可以将通用的预训练模型转变为针对特定任务高度优化的模型,从而提升其在实际应用中的准确性和效率。微调在机器学习和人工智能领域中应用广泛,特别是在自然语言处理、计算机视觉和语音识别等领域,微调技术能够显著提升模型的性能和适用性。

本方案包括的内容:

使用大语言模型Dolly进行自然语言翻译。

评估翻译的性能和偏差。

生成数据集微调 Dolly-3B 模型。

在 Amazon SageMaker 上部署微调后的模型。

将 SageMaker API 接口集成到实际软件应用中,构建前后端云原生架构。

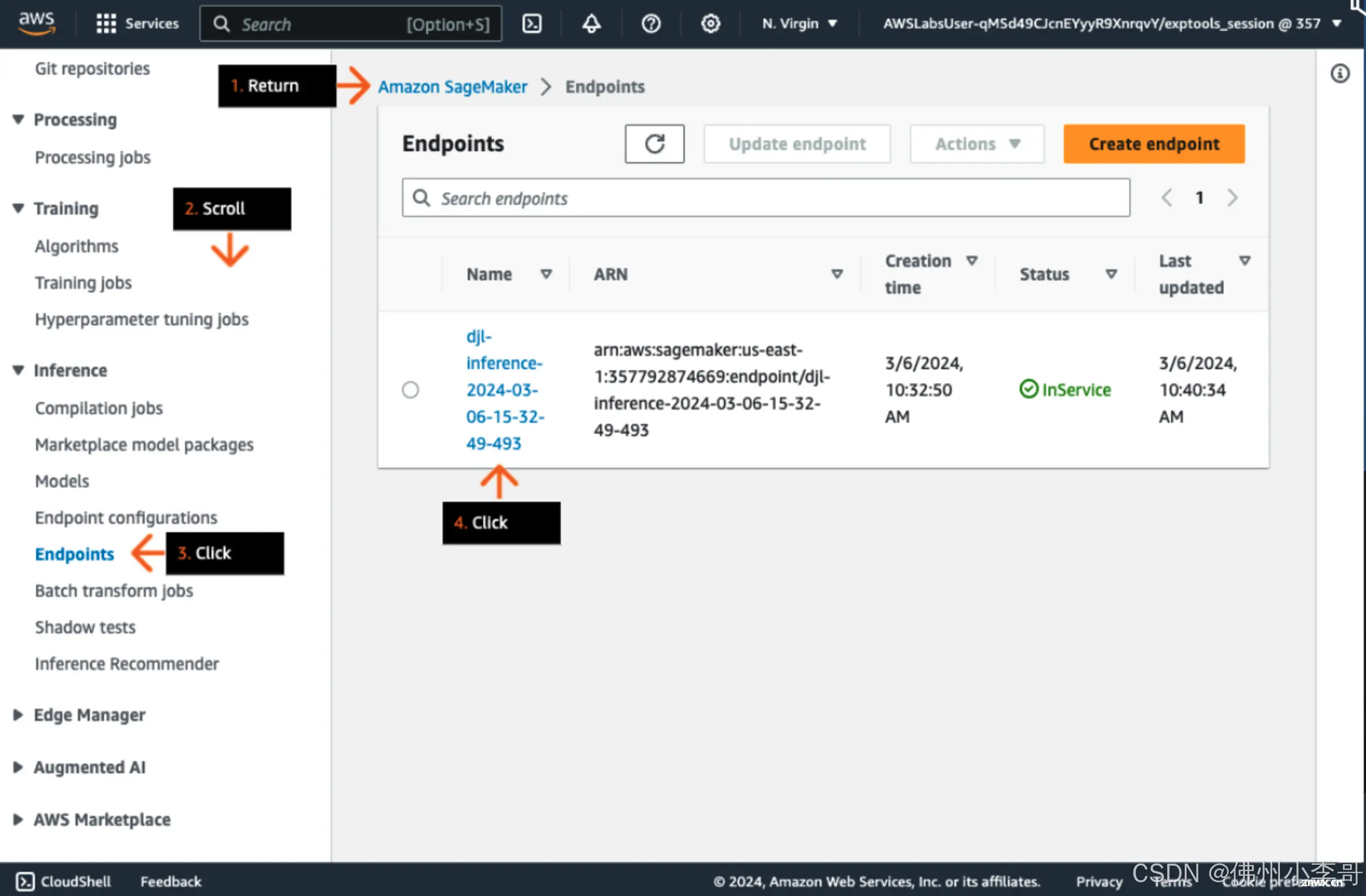

项目搭建具体步骤:

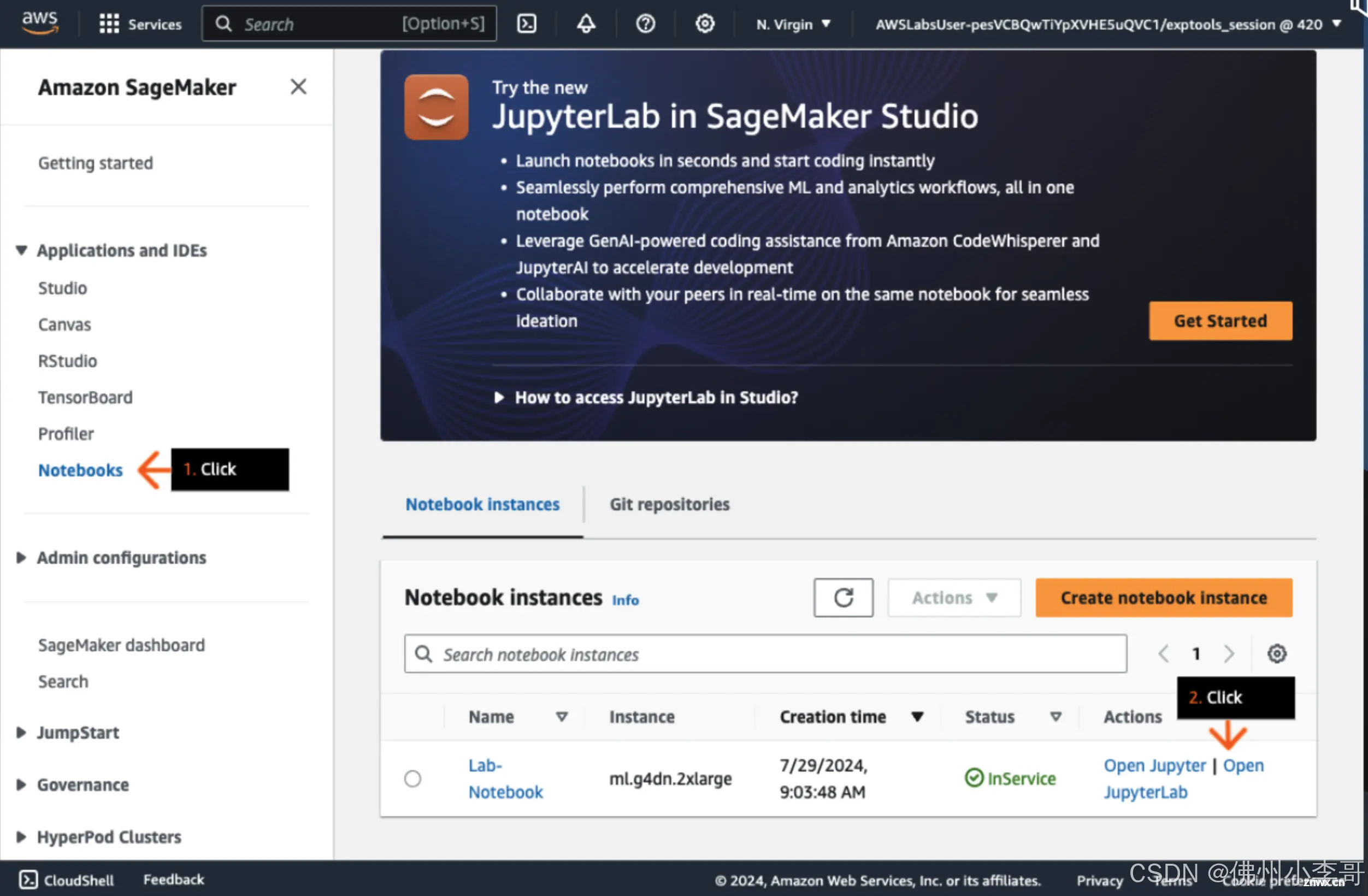

1. 打开亚马逊云科技控制台,进入SageMaker服务,创建一个Jupyter Notebook实例并进入。

2. 新建一个Notebook,接下来我们开始Dolly德译英翻译模型的微调。首先我们安装必要的依赖

!export TOKENIZERS_PARALLELISM=false

!pip3 install -r requirements.txt

!pip install sagemaker --quiet --upgrade --force-reinstall

import warnings

warnings.filterwarnings('ignore')

3. 接下来导入必要的依赖

# Import libraries

import torch

from transformers import pipeline, AutoTokenizer

import pandas as pd

import tqdm

import evaluate

from rich import print

4. 我们再导入模型“dolly-v2-3B”,在这里我们定义Dolly的Tokenizer和微调的Pipeline

# set seed for reproducible results

seed = 100

torch.manual_seed(seed)

torch.backends.cudnn.deterministic = True

if torch.cuda.is_available():

torch.cuda.manual_seed_all(seed)

# Use a tokenizer suitable for Dolly-v2-3B

dolly_tokenizer = AutoTokenizer.from_pretrained("databricks/dolly-v2-3b", padding_side = "left")

dolly_pipeline = pipeline(model = "databricks/dolly-v2-3b",

device_map = "auto",

torch_dtype = torch.float16,

trust_remote_code = True,

tokenizer = dolly_tokenizer)

5. 接下来我们将一个远程的数据集导入到Pandas DataFrame中

wiki_bios_en_to_de = pd.read_csv("https://storage.googleapis.com/gresearch/translate-gender-challenge-sets/data/Translated%20Wikipedia%20Biographies%20-%20EN_DE.csv")

6. 我们将DataFrame的字段进行重命名转为标准格式,并从男性和女性样本中随机取100个

wiki_bios_de_to_en = wiki_bios_en_to_de.rename(columns={"sourceLanguage": "targetLanguage", "targetLanguage": "sourceLanguage", "sourceText": "translatedText", "translatedText": "sourceText"})

with pd.option_context('display.max_colwidth', None):

display(wiki_bios_de_to_en.head())

male_bios = wiki_bios_de_to_en[wiki_bios_de_to_en.perceivedGender == "Male"]

female_bios = wiki_bios_de_to_en[wiki_bios_de_to_en.perceivedGender == "Female"]

print("Male Bios size: " + str(male_bios.shape))

print("Female Bios size: " + str(female_bios.shape))

male_sample = male_bios.sample(100, random_state=100)

female_sample = female_bios.sample(100, random_state=100)

print("Male Sample size: " + str(male_sample.shape))

print("Female Sample size: " + str(female_sample.shape))

7. 接下来我们将dataframe数据集中的sourceText的字段内容翻译为德语。将翻译后的内容取出后存到单独的列表中。

male_generations = []

for row in tqdm.tqdm(range(len(male_sample))):

source_text = male_sample.iloc[row]["sourceText"]

# Create instruction to provide model

cur_prompt_male = ("Translate \"%s\" from German to English." % (source_text))

# Prompt model with instruction and text to translate

generation = dolly_pipeline(cur_prompt_male)

generated_text = generation[0]['generated_text']

# Store translation

male_generations.append(generated_text)

print('Generated '+ str(len(male_generations))+ ' male generations')

female_generations = []

for row in tqdm.tqdm(range(len(female_sample))):

source_text = female_sample.iloc[row]["sourceText"]

cur_prompt_female = ("Translate \"%s\" from German to English." % (source_text))

generation = dolly_pipeline(cur_prompt_female)

generated_text = generation[0]['generated_text']

female_generations.append(generated_text)

print('Generated '+ str(len(female_generations))+ ' female_generations')

all_samples = pd.concat([male_sample, female_sample])

english = all_samples["translatedText"].values.tolist()

german = all_samples["sourceText"].values.tolist()

gender = all_samples["perceivedGender"].values.tolist()

generations = all_samples["generatedText"].values.tolist()

8. 接下来我们对模型的表现和偏差进行分析。我们会基于两个参数Bilingual Evaluation Understudy (BLEU) 和Regard进行分析。BLEU是对翻译质量评估指标,我们先基于BLUE进行分析,得到最终的分数(0-1),约接近1表示翻译约精准。

# Load the BLEU metric from the evaluate library

bleu = evaluate.load("bleu")

bleu.compute(predictions = all_samples["generatedText"].values.tolist(), references = all_samples["translatedText"].values.tolist(), max_order = 2)

bleu.compute(predictions = male_sample["generatedText"].values.tolist(), references = male_sample["translatedText"].values.tolist(), max_order = 2)

bleu.compute(predictions = female_sample["generatedText"].values.tolist(), references = female_sample["translatedText"].values.tolist(), max_order = 2)

9. 接下来我们基于Regard参数进行评估。Regard是对于模型偏见的评估指标。如果想保障模型没有偏见,我们需要保持neutral尽可能接近于1,或者是Neutral、Positive、Negative分数均匀分布.

# Load the Regard metric from evaluate

regard = evaluate.load("regard", "compare")

regard.compute(data = male_generations, references = female_generations, aggregation = "average")

10. 接下来我们开始优化模型,让模型的回复更加准确客观,消除偏见。我们第一个可以用的方法就是定义提示词工程,在提示词中强调在翻译中消除偏见。比如:

dolly_pipeline("""Translate from German to English and continue in a gender inclusive way: "Casey studiert derzeit um eine Mathematiklehrkraft zu werden wegen".""")

11. 同样我们也可以利用模型的微调来消除大语言模型中的偏见。首先我们导入必要的依赖

%%capture

import os

import numpy as np

import pandas as pd

from typing import Any, Dict, List, Tuple, Union

from datasets import Dataset, load_dataset, disable_caching

disable_caching() ## disable huggingface cache

from transformers import AutoModelForCausalLM

from transformers import AutoTokenizer

from transformers import TextDataset

import torch

from torch.utils.data import Dataset, random_split

from transformers import TrainingArguments, Trainer

import accelerate

import bitsandbytes

from IPython.display import Markdown

!export TOKENIZERS_PARALLELISM=false

import warnings

warnings.filterwarnings('ignore')

12. 导入训练数据集

sagemaker_dataset = load_dataset("csv",

data_files='data/cda_fae_faer_faer_faerself.csv')['train']code>

sagemaker_dataset

13. 定义提示词的格式,并将数据集按格式导入到提示词中。

from utils.helpers import INTRO_BLURB, INSTRUCTION_KEY, RESPONSE_KEY, END_KEY, RESPONSE_KEY_NL, DEFAULT_SEED, PROMPT

'''

PROMPT = """{intro}

{instruction_key}

{instruction}

{response_key}

{response}

{end_key}"""

'''

Markdown(PROMPT)

def _add_text(rec):

instruction = rec["instruction"]

response = rec["response"]

if not instruction:

raise ValueError(f"Expected an instruction in: {rec}")

if not response:

raise ValueError(f"Expected a response in: {rec}")

rec["text"] = PROMPT.format(

instruction=instruction, response=response)

return rec

sagemaker_dataset = sagemaker_dataset.map(_add_text)

sagemaker_dataset[0]

14. 我们导入我们需要微调的模型dolly-v2-3b,定义tokenizer。

tokenizer = AutoTokenizer.from_pretrained("databricks/dolly-v2-3b",

padding_side="left")code>

tokenizer.pad_token = tokenizer.eos_token

tokenizer.add_special_tokens({"additional_special_tokens":

[END_KEY, INSTRUCTION_KEY, RESPONSE_KEY_NL]})

model = AutoModelForCausalLM.from_pretrained(

"databricks/dolly-v2-3b",

# use_cache=False,

device_map="auto", #"balanced",code>

torch_dtype=torch.float16,

load_in_8bit=True,

)

15. 为模型训练数据集预处理

model.resize_token_embeddings(len(tokenizer))

from functools import partial

from utils.helpers import mlu_preprocess_batch

MAX_LENGTH = 256

_preprocessing_function = partial(mlu_preprocess_batch, max_length=MAX_LENGTH, tokenizer=tokenizer)

encoded_sagemaker_dataset = sagemaker_dataset.map(

_preprocessing_function,

batched=True,

remove_columns=["instruction", "response", "text"],

)

processed_dataset = encoded_sagemaker_dataset.filter(lambda rec: len(rec["input_ids"]) < MAX_LENGTH)

split_dataset = processed_dataset.train_test_split(test_size=14, seed=0)

split_dataset

16. 下面我们利用LoRA提升模型训练的效率,降低训练成本。我们定义Lora配置并利用Lora初始化我们的模型

from peft import LoraConfig, get_peft_model, prepare_model_for_int8_training, TaskType

MICRO_BATCH_SIZE = 8

BATCH_SIZE = 64

GRADIENT_ACCUMULATION_STEPS = BATCH_SIZE // MICRO_BATCH_SIZE

LORA_R = 256 # 512

LORA_ALPHA = 512 # 1024

LORA_DROPOUT = 0.05

# Define LoRA Config

lora_config = LoraConfig(

r=LORA_R,

lora_alpha=LORA_ALPHA,

lora_dropout=LORA_DROPOUT,

bias="none",code>

task_type="CAUSAL_LM"code>

)

model = get_peft_model(model, lora_config)

model.print_trainable_parameters()

17. 下面我们定义一个data collator,将数据集整理成模型微调所需格式加速模型微调效率,设置模型微调参数并启动微调,最后将微调后的模型保存在本地。

from utils.helpers import MLUDataCollatorForCompletionOnlyLM

data_collator = MLUDataCollatorForCompletionOnlyLM(

tokenizer=tokenizer, mlm=False, return_tensors="pt", pad_to_multiple_of=8code>

)

EPOCHS = 10

LEARNING_RATE = 1e-4

MODEL_SAVE_FOLDER_NAME = "dolly-3b-lora"

training_args = TrainingArguments(

output_dir=MODEL_SAVE_FOLDER_NAME,

fp16=True,

per_device_train_batch_size=1,

per_device_eval_batch_size=1,

learning_rate=LEARNING_RATE,

num_train_epochs=EPOCHS,

logging_strategy="steps",code>

logging_steps=100,

evaluation_strategy="steps",code>

eval_steps=100,

save_strategy="steps",code>

save_steps=20000,

save_total_limit=10,

)

trainer = Trainer(

model=model,

tokenizer=tokenizer,

args=training_args,

train_dataset=split_dataset['train'],

eval_dataset=split_dataset["test"],

data_collator=data_collator,

)

model.config.use_cache = False # silence the warnings. Please re-enable for inference!

trainer.train()

trainer.model.save_pretrained(MODEL_SAVE_FOLDER_NAME)

trainer.model.config.save_pretrained(MODEL_SAVE_FOLDER_NAME)

tokenizer.save_pretrained(MODEL_SAVE_FOLDER_NAME)

18. 接下来我们将微调后的模型部署到Amazon Sagemaker上,我们利用DJL框架实现MLOps。将模型打包上传到S3上,定义SageMaker模型运行环境和模型配置,最后加载大模型,部署生成API调用接口。

import boto3

import json

import sagemaker.djl_inference

from sagemaker.session import Session

from sagemaker import image_uris

from sagemaker import Model

sagemaker_session = Session()

print("sagemaker_session: ", sagemaker_session)

aws_role = sagemaker_session.get_caller_identity_arn()

print("aws_role: ", aws_role)

aws_region = boto3.Session().region_name

print("aws_region: ", aws_region)

image_uri = image_uris.retrieve(framework="djl-deepspeed",code>

version="0.22.1",code>

region=sagemaker_session._region_name)

print("image_uri: ", image_uri)

%%bash

rm -rf lora_model

mkdir -p lora_model

mkdir -p lora_model/dolly-3b-lora

cp dolly-3b-lora/adapter_config.json lora_model/dolly-3b-lora/

cp dolly-3b-lora/adapter_model.bin lora_model/dolly-3b-lora/

%%writefile lora_model/serving.properties

engine=Python

option.entryPoint=model.py

option.adapter_checkpoint=dolly-3b-lora

option.adapter_name=dolly-lora

%%writefile lora_model/requirements.txt

transformers==4.27.4

accelerate>=0.24.1,<1

peft

%%bash

cp utils/deployment_model.py lora_model/model.py

%%bash

tar -cvzf lora_model.tar.gz lora_model/

import boto3

import json

import sagemaker.djl_inference

from sagemaker.session import Session

from sagemaker import image_uris

from sagemaker import Model

s3 = boto3.resource('s3')

s3_client = boto3.client('s3')

s3 = boto3.resource('s3')

# Get the name of the bucket with prefix lab-code

for bucket in s3.buckets.all():

if bucket.name.startswith('artifact'):

mybucket = bucket.name

print(mybucket)

response = s3_client.upload_file("lora_model.tar.gz", mybucket, "lora_model.tar.gz")

model_data="s3://{}/lora_model.tar.gz".format(mybucket)code>

model = Model(image_uri=image_uri,

model_data=model_data,

predictor_cls=sagemaker.djl_inference.DJLPredictor,

role=aws_role)

%%time

predictor = model.deploy(1, "ml.g4dn.2xlarge")

19. 回到SageMaker中查看部署好的模型API接口URL

20. 接下来我们创建一个Lambda函数,复制以下代码。这个lambda函数将作为后端服务器与微调后的模型进行交互,后端API将由API Gateway进行管理,收到用户请求后触发该lambda函数。

<code># Import necessary libraries

import json

import boto3

import os

import re

import logging

# Set up logging

logger = logging.getLogger()

logger.setLevel(logging.INFO)

# Create a SageMaker client

sagemaker_client = boto3.client("sagemaker-runtime")

# Define Lambda function

def lambda_handler(event, context):

# Log the incoming event in JSON format

logger.info('Event: %s', json.dumps(event))

# Clean the body of the event: remove excess spaces and newline characters

cleaned_body = re.sub(r'\s+', ' ', event['body']).replace('\n', '')

# Log the cleaned body

logger.info('Cleaned body: %s', cleaned_body)

# Invoke the SageMaker endpoint with the cleaned body as payload and content type as JSON

response = sagemaker_client.invoke_endpoint(

EndpointName=os.environ["ENDPOINT_NAME"],

ContentType="application/json", code>

Body=cleaned_body

)

# Load the response body and decode it

result = json.loads(response["Body"].read().decode())

# Return the result with status code 200 and the necessary headers

return {

'statusCode': 200,

'headers': {

'Access-Control-Allow-Headers': 'Content-Type',

'Access-Control-Allow-Origin': '*',

'Access-Control-Allow-Methods': 'OPTIONS,POST'

},

'body': json.dumps(result)

}

以上就是在亚马逊云科技上利用SageMaker微调大模型Dolly 3B,减少翻译偏差的全部步骤。欢迎大家关注小李哥,未来获取更多国际前沿的生成式AI开发方案!

声明

本文内容仅代表作者观点,或转载于其他网站,本站不以此文作为商业用途

如有涉及侵权,请联系本站进行删除

转载本站原创文章,请注明来源及作者。